How Do You Generate Game-Ready Tactical Hero-Shooter 3D Props And Characters From Images Faster?

You generate game-ready tactical hero-shooter 3D props and characters from images faster by feeding reference photographs into image-to-3D reconstruction tools such as Threedium, Luma AI, or RealityCapture, which convert 2D visual data into optimized 3D meshes with PBR (Physically-Based Rendering) textures and materials. This image-to-3D approach accelerates asset production by removing most manual polygon-by-polygon modeling while targeting both visual accuracy and runtime performance for competitive multiplayer titles such as Valorant, Counter-Strike 2, and Overwatch.

Tactical hero-shooters like Valorant (developed by Riot Games) and Counter-Strike 2 (developed by Valve Corporation) require extensive asset libraries comprising unique weaponry, character gear, and environmental props that maintain consistent visual quality across diverse gameplay scenarios. Traditional 3D modeling workflows consume 8-16 hours per individual asset when 3D artists manually construct geometry, unwrap UVs (UV texture coordinates), and author textures. Image-to-3D pipelines reduce the asset production timeline to 2-4 hours by automating geometric reconstruction and texture generation, enabling technical artists to focus on game-specific optimization tasks such as collision mesh creation and shader assignment.

Key Technologies for Image-to-3D Conversion

Photogrammetry

Photogrammetry (a 3D scanning technique) digitizes physical objects by photographing them from multiple viewpoints under controlled lighting and reconstructing geometry from the overlapping images. The process involves:

- Capturing real tactical equipment from 50-200 photographic angles

- Maintaining 60-80% frame overlap between consecutive shots

- Using software like RealityCapture and Agisoft Metashape

- Generating dense point clouds with millions of spatial coordinates

| Photogrammetry Specifications | Details |
|---|---|
| Camera Positions | 50-200 angles around object |
| Frame Overlap | 60-80% between shots |
| Output Format | Dense point clouds → High-resolution meshes |
| Surface Detail | Microscopic characteristics (wear, texture) |

Photogrammetry captures, with high fidelity, the fine surface characteristics that make tactical gear look authentic: weapon wear patterns, fabric weave structures, metal oxidation, and grip texturing.
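A rough capture plan can be estimated from the overlap and shot-count figures above. The sketch below assumes a simplified model in which the camera orbits the object in horizontal rings and each new frame advances by the non-overlapping share of the horizontal field of view. The names `shots_per_ring` and `total_shots` are illustrative and are not part of RealityCapture or Agisoft Metashape.

```python
import math


def shots_per_ring(horizontal_fov_deg: float, overlap: float) -> int:
    """Estimate the photos needed for one full orbit around the object.

    Simplifying assumption: consecutive frames are separated by the
    non-overlapping fraction of the horizontal field of view.
    """
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")
    if horizontal_fov_deg <= 0.0:
        raise ValueError("field of view must be positive")
    step_deg = horizontal_fov_deg * (1.0 - overlap)
    return math.ceil(360.0 / step_deg)


def total_shots(horizontal_fov_deg: float, overlap: float, rings: int) -> int:
    """Total photos when the orbit is repeated at several camera heights."""
    return shots_per_ring(horizontal_fov_deg, overlap) * rings
```

Under these assumptions, a 40° field of view with 75% overlap needs 36 shots per ring, so three rings at different heights come to 108 photos, inside the 50-200 range listed above.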

Neural Radiance Fields (NeRF)

Neural Radiance Fields (introduced in 2020 by Mildenhall et al.) generate three-dimensional scenes through deep learning models optimized for modeling light transport and volumetric rendering:

- Users provide 20-40 photographs of character equipment or props

- Platforms like Luma AI compute light ray interactions with surfaces

- NeRF networks compress scene geometry into neural representations

- Outputs are transformed into polygon meshes for real-time engines

Advantages of NeRF:

- Requires fewer input images than photogrammetry

- Captures complex materials like translucent fabrics and reflective armor plating

- Supports novel viewpoint rendering

3D Gaussian Splatting

3D Gaussian Splatting (introduced in 2023) encodes scenes as millions of oriented 3D Gaussian primitives that support real-time rendering at 60+ FPS during asset preview:

- Each Gaussian primitive stores: position, color, opacity, and covariance data

- Enables immediate visualization of spatial relationships

- Used for determining cover positions and sightline analysis

- Supports environmental storytelling during preproduction
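The per-primitive data listed above can be sketched as a small record type. This is a minimal illustration, not the layout of any particular splatting tool: it assumes the covariance is kept as a per-axis scale plus a unit quaternion and rebuilt as R·S·Sᵀ·Rᵀ, and it simplifies color to a single RGB triple. The class name `Gaussian3D` and its fields are hypothetical.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class Gaussian3D:
    position: Vec3                                # center in world space
    color: Vec3                                   # simplified to plain RGB here
    opacity: float                                # 0.0 (invisible) to 1.0 (opaque)
    scale: Vec3                                   # standard deviation per local axis
    rotation: tuple[float, float, float, float]   # unit quaternion (w, x, y, z)

    def covariance(self) -> list[list[float]]:
        """Build the 3x3 covariance as R * S * S^T * R^T."""
        w, x, y, z = self.rotation
        # Rotation matrix of a unit quaternion.
        r = [
            [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
            [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
            [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
        ]
        s2 = [s * s for s in self.scale]
        # cov[i][j] = sum over k of R[i][k] * scale[k]^2 * R[j][k]
        return [
            [sum(r[i][k] * s2[k] * r[j][k] for k in range(3)) for j in range(3)]
            for i in range(3)
        ]
```

Storing scale and rotation separately keeps the covariance symmetric and positive semi-definite by construction, which is convenient when the values are being optimized.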

Single-Image Reconstruction

Single-image reconstruction produces 3D geometry from individual concept art pieces using AI models trained on extensive 3D asset databases:

- Extracts depth information and surface normals from visual cues

- Generates base meshes for rapid iteration

- Reduces early-stage prototyping from days to hours

- Requires topology optimization and detail sculpting

Production Pipeline Optimization

Automated Retopology

Raw photogrammetry captures produce meshes containing 2-10 million triangles, exceeding performance budgets for real-time tactical shooters:

| Asset Type | Target Triangle Count |
|---|---|
| Hero Weapon | 5,000-15,000 triangles |
| Playable Character | 8,000-25,000 triangles |
| Background Props | 500-2,000 triangles |

Automated retopology tools like Quad Remesher decrease polygon counts by 95-99% while maintaining:

- Silhouette accuracy

- Edge sharpness for weapon readability

- Optimized edge loops for animation
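The size of the reduction follows directly from the raw and target triangle counts. A minimal sketch, with `reduction_percent` as an illustrative helper name:

```python
def reduction_percent(source_tris: int, target_tris: int) -> float:
    """Percentage of triangles removed when going from source to target."""
    if source_tris <= 0:
        raise ValueError("source triangle count must be positive")
    if not 0 <= target_tris <= source_tris:
        raise ValueError("target must be between 0 and the source count")
    return 100.0 * (1.0 - target_tris / source_tris)
```

For example, taking a 2,000,000-triangle scan down to a 25,000-triangle character mesh is a 98.75% reduction.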

Normal Map Baking

Normal map baking projects high-resolution surface detail to optimized low-poly geometry:

- Encodes surface normal directions into RGB texture channels

- Preserves visual complexity within performance constraints

- Hero weapons use 2048×2048 pixel detail maps

- Background props use 512×512 resolution
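The RGB encoding can be illustrated with the usual mapping from a unit normal in the range [-1, 1] to 8-bit channels. This is a sketch under the assumption of a tangent-space map; the `flip_green` flag is a hypothetical parameter standing in for the difference between green-up and green-down engine conventions.

```python
import math


def encode_normal(normal, flip_green=False):
    """Map a normal vector to 8-bit RGB: channel = (component * 0.5 + 0.5) * 255."""
    x, y, z = normal
    length = math.sqrt(x * x + y * y + z * z)
    if length == 0.0:
        raise ValueError("normal must be non-zero")
    if flip_green:
        y = -y  # switch between the two green-channel conventions
    # Normalize, remap to [0, 1], scale to [0, 255], round to nearest.
    return tuple(int((c / length * 0.5 + 0.5) * 255 + 0.5) for c in (x, y, z))
```

An unperturbed surface normal of (0, 0, 1) encodes to (128, 128, 255), which is why flat regions of a tangent-space normal map look light blue.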

PBR Material Workflows

Physically-Based Rendering workflows generate texture maps from photogrammetry data:

- Albedo maps: Pure surface color without lighting

- Metalness maps: Define conductive versus dielectric materials

- Roughness maps: Control microfacet surface scattering

- Ambient occlusion maps: Encode geometric shadowing

Metalness differentiation is critical for tactical gear rendering, where reflective metal buckles, weapon receivers, and armor plates should contrast with diffuse fabrics under dynamic lighting.

Level of Detail (LOD) Generation

LOD systems produce multiple mesh variants with progressively reduced polygon counts:

| LOD Level | Polygon Density | Usage |
|---|---|---|
| LOD0 | 100% | First-person viewmodels |
| LOD1 | 60% | Nearby third-person characters |
| LOD2 | 30% | Mid-range visibility |
| LOD3 | 10% | Distant silhouettes |
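The density percentages in the table translate into per-level triangle targets as follows. This is a minimal sketch; the dictionary and function names are illustrative.

```python
# Polygon density per LOD level, as a percentage of the LOD0 mesh.
LOD_PERCENT = {"LOD0": 100, "LOD1": 60, "LOD2": 30, "LOD3": 10}


def lod_triangle_budgets(lod0_triangles: int) -> dict[str, int]:
    """Return the target triangle count for each LOD level."""
    if lod0_triangles < 0:
        raise ValueError("triangle count must be non-negative")
    # Integer arithmetic avoids floating-point rounding surprises.
    return {name: lod0_triangles * pct // 100 for name, pct in LOD_PERCENT.items()}
```

A 15,000-triangle hero weapon would therefore target 9,000, 4,500, and 1,500 triangles for LOD1 through LOD3.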

UV Unwrapping and Material Optimization

UV unwrapping optimization focuses on:

- Minimizing seams along visible edges

- Equalizing texel density across surface regions

- Adding padding between UV islands to prevent mipmap bleeding

- Organizing components by material type

Collision mesh generation reduces visual geometry to simplified shapes:

- Convex hulls or primitive shapes (boxes, capsules, spheres)

- 50-200 triangles for a weapon, versus 10,000+ triangles in its visual mesh

- Enough fidelity to maintain gameplay-critical hit detection accuracy

Export and Validation

File Format Support

Export processes package assets into formats that game engines and runtimes can import:

- FBX containers: Package geometry, materials, and skeletal data

- glTF packages: Support web-based applications

- Texture compression: BC7 for PC, ASTC for mobile
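The memory effect of block compression can be estimated with simple arithmetic. BC7 stores each 4×4 pixel block in 16 bytes, and ASTC also uses 16-byte blocks but with a selectable footprint from 4×4 up to 12×12. The sketch below covers only the top mip level and ignores mipmaps and container overhead; the function names are illustrative.

```python
def block_compressed_bytes(width: int, height: int,
                           block_w: int = 4, block_h: int = 4,
                           bytes_per_block: int = 16) -> int:
    """Size of one mip level for a block-compressed texture (BC7 by default)."""
    blocks_x = -(-width // block_w)    # ceiling division
    blocks_y = -(-height // block_h)
    return blocks_x * blocks_y * bytes_per_block


def rgba8_bytes(width: int, height: int) -> int:
    """Size of the same level stored as uncompressed 8-bit RGBA."""
    return width * height * 4
```

A 2048×2048 map takes 16 MiB as uncompressed RGBA8, 4 MiB as BC7, and about 1.8 MiB as ASTC with a 6×6 footprint.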

Quality Assurance

Import validation scripts automatically check for:

- Non-manifold geometry that causes rendering artifacts

- Normal map orientation matching engine conventions

- Material slot assignments preventing texture errors

- Skeleton bone counts within animation system limits
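One of these checks can be sketched in a few lines. The example below flags only one kind of non-manifold geometry, edges shared by more than two triangles, and assumes the mesh is given as a list of vertex-index triples. It is an illustration of the idea rather than a complete validator, and `non_manifold_edges` is a hypothetical name.

```python
from collections import Counter


def non_manifold_edges(triangles):
    """Return edges used by more than two triangles, as sorted (low, high) pairs."""
    edge_use = Counter()
    for a, b, c in triangles:
        for u, v in ((a, b), (b, c), (c, a)):
            # Store each edge with its smaller index first so that
            # (u, v) and (v, u) count as the same edge.
            edge_use[(min(u, v), max(u, v))] += 1
    return sorted(edge for edge, count in edge_use.items() if count > 2)
```

Three triangles fanning out from the same edge, such as `[(0, 1, 2), (0, 1, 3), (0, 1, 4)]`, are reported as `[(0, 1)]`, while two triangles sharing an edge pass cleanly.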

Performance profiling assesses:

- Asset impact on frame rates under realistic gameplay

- GPU millisecond costs during rendering

- Overdraw visualization and texture streaming analysis

Production Timeline Benefits

The image-to-3D pipeline transforms tactical hero-shooter asset production:

| Traditional Workflow | Image-to-3D Pipeline |
|---|---|
| 2-4 weeks per asset | 2-5 days per asset |
| Manual polygon modeling | Automated reconstruction |
| Limited design iterations | Rapid variation testing |
| Higher production costs | Cost-effective budgets |

Key advantages include:

- Digitizing authentic military equipment through photogrammetry

- Generating design variations through AI reconstruction

- Automated topology cleanup and material texture refinement

- Achieving AAA visual standards within competitive budgets

The pipeline’s efficiency enables studios to expand asset variety, providing 50+ unique weapon skins, diverse character customization options, and richly detailed environment props while maintaining competitive development budgets and release schedules for live-service multiplayer titles.

Development teams can now focus resources on gameplay mechanics, balancing, and content updates rather than spending extensive time on manual asset creation, ultimately delivering more engaging experiences for competitive gaming communities.

What Visual Inputs Help Keep Tactical Hero-Shooter Asset Style Consistent?

Visual inputs that help keep tactical hero-shooter asset style consistent include art bibles documenting aesthetic rules, curated reference libraries with 200-500 high-resolution images per category, standardized color palettes with documented hexadecimal values, pillar assets serving as quality benchmarks, and comprehensive style guides specifying technical parameters. These visual inputs create a unified aesthetic framework, ensuring every weapon, character, and prop adheres to the same design language. Critical inputs include documented shape vocabularies, lighting parameters, value hierarchies, and material specifications that guide production from concept through final export.

Establish Your Art Bible as the Visual Foundation

The art bible, created by art directors and lead artists, governs the aesthetic direction of the entire tactical hero-shooter project: it documents visual identity rules, standardizes technical parameters, and unifies design constraints across the 12-36 month production cycle. You create a 3-5 page “Art Pillar” document during pre-production that outlines core visual identity rules before expanding it into comprehensive reference documentation. This framework specifies artistic constraints, stylization approaches, and technical parameters, whether you pursue photorealistic military precision like Counter-Strike 2, stylized cel-shaded graphics, or grounded tactical aesthetics.

Valorant, the tactical 5v5 hero-shooter developed by Riot Games and released in 2020, shows how a well-executed art bible balances grounded tactical realism with strong stylization across playable agents, firearms, equipment, and competitive map environments.

You document polygon budgets (typically 15,000-25,000 triangles for hero weapons, 30,000-50,000 for playable characters), texture resolutions (2K for weapons, 4K for hero characters), material properties (metallic/roughness workflows), and shader applications to ensure seamless engine integration. The art bible includes:

- Silhouette requirements

- Surface detail density limits

- Prohibited visual patterns that would break style cohesion

Curate Reference Libraries for Concrete Visual Anchors

3D artists, texture artists, and other asset creators curate reference libraries of 200-500 high-resolution photographs per major category, depicting:

- Tactical gear (vests, helmets, pouches)

- Military equipment

- Firearms (assault rifles, pistols, SMGs)

- Fabric textures

- Architectural elements

PureRef, a reference management application designed for artists, arranges reference images on an infinite canvas, keeping visual anchors in view during modeling and texturing.

For a grounded tactical shooter drawing aesthetic influence from Apex Legends (the battle royale hero-shooter developed by Respawn Entertainment and released in 2019), 3D artists and texture artists prioritize documentation of:

- Weathered metal surfaces (showing oxidation, scratches, and dents)

- Polymer surface variations (synthetic composites used in modern firearms)

- Realistic wear-and-tear patterns (edge wear, contact points, environmental exposure)

| Asset Type | Material Category | Stylistic Function |
|---|---|---|
| Weapons (firearms and melee weapons) | Metals (steel and aluminum) | Hero assets (15,000-50,000 triangle budgets) |
| Equipment (tactical gear) | Fabrics (nylon and canvas) | Background props (reduced detail) |
| Environments (maps and level geometry) | Composites | UI elements (interface design) |

ArtStation, an online portfolio and community platform founded in 2014 that hosts work from millions of artists, provides high-quality reference material (images, tutorials, production breakdowns) created by professional concept artists and 3D modelers.

Reference libraries include annotated examples (images with text callouts, arrows, and technical notes) that explain specific material behaviors and visual characteristics:

- Illustrating how light reflects off anodized aluminum surfaces (aluminum treated with electrolytic passivation, exhibiting metallic values 0.9-1.0 and roughness 0.3-0.5)

- Demonstrating how fabric deforms and folds under tension (critical for realistic character clothing and equipment straps)

- Detailing how carbon fiber weave patterns (distinctive 2x2 twill or plain weave structures) appear at different detail scales from macro to micro levels

Define Color Palettes for Faction Recognition and Visual Harmony

Art directors, color designers, and UI/UX specialists standardize color palettes: documented systems of primary, secondary, and accent colors, with hexadecimal values and HSV ranges for each faction and character class, that support both visual harmony and gameplay readability.

Color schemes receive comprehensive documentation:

- Primary color schemes (dominant colors covering 60-70% of surface area)

- Secondary color schemes (supporting colors covering 20-30%)

- Accent color schemes (highlight colors covering 10-20%)

Each faction, character class, and environmental biome specifies exact hexadecimal values:

- #2C3E50 (RGB: 44, 62, 80) for tactical blue themes

- #E74C3C (RGB: 231, 76, 60) for enemy highlights and danger indicators
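Documented hexadecimal values can be converted to RGB and HSV programmatically, which helps when checking a palette entry against documented hue and saturation ranges. A minimal sketch using Python's standard `colorsys` module, with illustrative helper names:

```python
import colorsys


def hex_to_rgb(hex_color: str) -> tuple[int, int, int]:
    """Convert '#RRGGBB' to an (R, G, B) tuple of 0-255 integers."""
    digits = hex_color.lstrip("#")
    if len(digits) != 6:
        raise ValueError("expected a color in #RRGGBB form")
    return tuple(int(digits[i:i + 2], 16) for i in (0, 2, 4))


def rgb_to_hsv_units(rgb: tuple[int, int, int]) -> tuple[int, int, int]:
    """Return hue in degrees and saturation/value in percent, rounded."""
    r, g, b = (channel / 255 for channel in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    return round(h * 360), round(s * 100), round(v * 100)
```

For instance, `hex_to_rgb("#2C3E50")` gives (44, 62, 80), which corresponds to a hue of about 210°, 45% saturation, and 31% value.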

Color designers apply an 80/20 distribution rule (loosely named after the Pareto principle) to saturation:

- 80% of surface area utilizes neutral base colors (desaturated colors with 10-30% saturation including grays, earth tones, and muted blues)

- 20% allocates to saturated accents (highly saturated colors with 60-90% saturation including bright team colors and ability indicators)

Valorant exemplifies sophisticated color palette management through character design: each agent remains recognizable through a distinct silhouette and a unique color signature, even during chaotic combat scenarios.

Color validation sheets demonstrate palette application across different lighting conditions:

- Daylight (natural outdoor illumination at 5500K color temperature with 100,000 lux intensity)

- Indoor artificial lighting (interior electric lighting at 3000K color temperature with 500 lux intensity)

- Night vision modes (low-light rendering using monochrome green display)

Faction-specific palettes apply complementary color theory:

- Blue-gray ranges (cool-temperature colors with Hue 200-220° and Saturation 15-30%) to defenders

- Warm earth tones (warm-temperature colors with Hue 20-40° and Saturation 25-40%) to attackers

Build Pillar Assets as Quality Benchmarks

Art directors, lead artists, and production managers designate pillar assets (benchmark-quality 3D models such as hero weapons and main characters) during early production, giving each one extra time (40-120 hours per asset, more than a standard asset receives) and additional artistic refinement.

These benchmark assets exemplify:

- Complete material definition (comprehensive PBR texture maps including albedo, metallic, roughness, normal, and ambient occlusion)

- Proper polygon distribution (strategic geometry allocation concentrating edge loops at deformation points)

- Optimal texel density (512 pixels per meter of world space)

- Correct value structure (30% dark values, 50% mid-tones, 20% highlights)

- Perfect shape language application (consistent geometric vocabulary)

A tactical assault rifle serving as a pillar asset demonstrates critical material behaviors:

| Component | Material Type | PBR Values |
|---|---|---|
| Metallic receivers | Anodized aluminum | Metallic 0.9-1.0, Roughness 0.3-0.5 |
| Polymer grips | Synthetic composite | Metallic 0.0, Roughness 0.4-0.6 |
| Wear patterns | Contact points | Concentrated at grip surfaces, charging handles |

Lead artists develop visual reference sheets including:

- Orthographic views (parallel projection technical drawings)

- Material breakdowns (detailed PBR map assignments)

- Wireframe overlays (polygon topology structure visualization)

- Texture maps at 4K resolution (4096x4096 pixel dimensions)

Document Shape Language for Intuitive Gameplay Communication

You establish shape vocabularies using geometric forms to communicate character roles, faction affiliations, and functional properties:

- Defensive tank characters use rectangular silhouettes and horizontal emphasis suggesting stability and protection

- Agile flanker characters use triangular forms and diagonal lines conveying speed and aggression

This visual grammar ensures players identify character functions through silhouette recognition at 30-meter distances without reading nameplates. Shape language principles apply hierarchically:

- Overall character silhouettes use primary geometric forms (circles for support, triangles for damage dealers, squares for tanks)

- Secondary forms define equipment categories (rounded magazines for standard weapons, angular magazines for high-capacity variants)

- Tertiary details provide visual interest without compromising readability

Apex Legends character design demonstrates effective shape language implementation, where each Legend maintains distinct silhouettes recognizable during high-speed combat and varied environmental conditions.

Develop Mood Boards for Emotional Tone Alignment

You curate mood boards establishing emotional tone and atmospheric direction that permeates the visual experience. Collections include:

- Imagery samples

- Color swatches

- Typography references

- Artistic inspiration capturing intended player emotional response

Platforms like Miro facilitate collaborative mood board creation, allowing team-wide artistic synthesis and feedback. You create separate mood boards for different gameplay contexts:

- Pre-match lobbies emphasize clean UI and character presentation

- Combat environments balance visibility with atmospheric immersion

- Post-match screens celebrate achievement through dynamic composition

Mood boards include 30-50 curated images per context, annotated with specific elements to extract (lighting approaches, composition techniques, color relationships).

Establish Style Guides with Actionable Production Standards

You document style guides translating aesthetic vision into actionable production specifications. This operational manual specifies:

| Asset Type | Polygon Count | Texture Resolution |
|---|---|---|
| Sidearms | 5,000-8,000 | 1K |
| Primary weapons | 12,000-18,000 | 2K |
| Melee weapons | 20,000-30,000 | 2K |
| Hero characters | N/A | 4K |

Normal map baking parameters:

- Cage offset: 0.01-0.05 units

- Ray distance: 0.1 units

Material shader configurations:

- PBR metallic/roughness workflow

- Albedo values: 50-240 sRGB

Value structure principles ensure assets maintain clear focal points through contrast:

- Primary focus areas use 70-80% value range

- Secondary elements use 40-60%

- Tertiary details use 20-40%

Material application rules define surface types for asset categories:

- Anodized aluminum weapon bodies (metallic 0.9-1.0, roughness 0.3-0.5)

- Polymer grips (metallic 0.0, roughness 0.6-0.8)

- Worn steel (metallic 0.8-0.95, roughness 0.4-0.7)

The style guide includes prohibited techniques:

- Excessive polygon density on unseen surfaces

- Texture resolution mismatches creating visual inconsistency

- Unauthorized shader variations

Conduct Lighting Studies for Visibility and Mood Balance

You define lighting parameters through comprehensive studies demonstrating asset appearance under varied conditions. Baseline scenarios include:

- Direct sunlight (5500K color temperature, 100,000 lux intensity)

- Overcast daylight (6500K, 20,000 lux)

- Indoor artificial lighting (3000K, 500 lux)

- Night operations (moonlight 4000K, 0.25 lux plus artificial sources)

Studies specify illumination intensity ratios:

- Key light: 100%

- Fill light: 30-50%

- Rim light: 70-90%
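These ratios can be turned into concrete light intensities once a key light value is chosen. The sketch below assumes intensities scale linearly with the ratio and uses mid-range defaults; the units are whatever the engine uses for the key light, and the function name is illustrative.

```python
# Allowed ratios relative to the key light, taken from the list above.
RATIO_RANGES = {"fill": (0.30, 0.50), "rim": (0.70, 0.90)}


def light_intensities(key_intensity: float,
                      fill_ratio: float = 0.4,
                      rim_ratio: float = 0.8) -> dict[str, float]:
    """Derive fill and rim intensities from the key light intensity."""
    for name, ratio in (("fill", fill_ratio), ("rim", rim_ratio)):
        low, high = RATIO_RANGES[name]
        if not low <= ratio <= high:
            raise ValueError(f"{name} ratio {ratio} is outside {low}-{high}")
    return {
        "key": key_intensity,
        "fill": key_intensity * fill_ratio,
        "rim": key_intensity * rim_ratio,
    }
```

Rejecting out-of-range ratios keeps every lighting study comparable, since each render uses the same relationship between key, fill, and rim.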

Additional parameters:

- Ambient occlusion strength: 0.3-0.5 multiplier

- Shadow softness: penumbra angle 2-5° for hard shadows, 10-20° for soft

- Atmospheric fog density: 0.001-0.01 per unit distance

Tactical shooters demand high visibility for competitive fairness, requiring you to balance atmospheric mood with target identification at 50+ meter engagement distances.
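The trade-off between fog and target visibility can be estimated numerically. The sketch below assumes a simple exponential fog model, in which the fraction of a target's original color that survives is exp(-density × distance). Engines differ in their exact fog formulas, so treat this as an approximation with an illustrative function name.

```python
import math


def fog_transmittance(density: float, distance: float) -> float:
    """Fraction of the target's color that reaches the camera through fog."""
    if density < 0.0 or distance < 0.0:
        raise ValueError("density and distance must be non-negative")
    return math.exp(-density * distance)
```

Under this model, a target at 50 meters keeps about 95% of its color at a density of 0.001 but only about 61% at 0.01, which shows how quickly the upper end of the density range erodes readability at engagement distance.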

You create lighting reference renders of pillar assets showing material responses:

- How anodized surfaces catch rim lighting

- How fabric responds to ambient occlusion

- How transparent materials refract environmental lighting

Implement Style-Syncing Processes for Continuous Quality Control

You establish style-syncing as a continuous validation process, cross-referencing new assets against the art bible and pillar benchmarks. Regular review sessions validate:

- Geometric proportions using overlay comparison tools revealing 2-5% proportion discrepancies

- Surface detail density through texture density analyzers ensuring 512 pixels/meter consistency

- Material application accuracy via shader network audits

- Aesthetic alignment through side-by-side pillar comparisons
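The texel density check can be approximated from three numbers: texture resolution, the share of UV space an asset occupies, and its surface area. This sketch assumes a square texture and uniform UV scaling, and the 5% tolerance is an arbitrary illustrative default, not a value from the style guide.

```python
import math


def texel_density(texture_px: int, uv_area_fraction: float,
                  surface_area_m2: float) -> float:
    """Approximate pixels per meter for a uniformly scaled UV layout."""
    if surface_area_m2 <= 0.0 or not 0.0 < uv_area_fraction <= 1.0:
        raise ValueError("invalid UV fraction or surface area")
    return texture_px * math.sqrt(uv_area_fraction / surface_area_m2)


def matches_target(density: float, target: float = 512.0,
                   tolerance: float = 0.05) -> bool:
    """True when density is within the given fraction of the target."""
    return abs(density - target) <= target * tolerance
```

For example, an asset with 4 m² of surface using a quarter of a 2048×2048 texture lands exactly on 512 pixels per meter.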

You use material validation scripts verifying PBR value ranges:

- Albedo luminance: 30-240 sRGB

- Metallic: 0.0 or 0.9-1.0

- Roughness: 0.2-0.9
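A validation script for these ranges might look like the sketch below. It assumes each material is summarized by a single representative value per channel, whereas a production tool would sample the texture maps themselves. The function name is hypothetical.

```python
def validate_pbr(albedo_srgb: float, metallic: float, roughness: float) -> list[str]:
    """Return a list of human-readable issues; an empty list means the values pass."""
    issues = []
    if not 30 <= albedo_srgb <= 240:
        issues.append(f"albedo {albedo_srgb} is outside 30-240 sRGB")
    # Metallic should be either fully dielectric or clearly metallic.
    if not (metallic == 0.0 or 0.9 <= metallic <= 1.0):
        issues.append(f"metallic {metallic} is neither 0.0 nor within 0.9-1.0")
    if not 0.2 <= roughness <= 0.9:
        issues.append(f"roughness {roughness} is outside 0.2-0.9")
    return issues
```

Returning a list of messages rather than a single boolean lets a review tool report every problem with a material in one pass.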

Automated checks flag assets exceeding:

- Polygon budgets (weapons over 25,000 triangles, characters over 50,000)

- Texture budgets (combined maps exceeding 4K equivalent)

- Draw call limits
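The polygon and texture checks can be sketched as a single function. Two assumptions are made here: "4K equivalent" is interpreted as a total texel count no larger than one 4096×4096 map, and draw call limits are left out because no numeric limit is specified above. All names are illustrative.

```python
# Triangle ceilings per asset category, from the list above.
TRIANGLE_BUDGETS = {"weapon": 25_000, "character": 50_000}

# Assumption: "4K equivalent" means the texel count of one 4096x4096 map.
TEXEL_BUDGET = 4096 * 4096


def budget_violations(category: str, triangles: int,
                      texture_sizes: list[tuple[int, int]]) -> list[str]:
    """Return the budgets an asset exceeds; an empty list means it passes."""
    issues = []
    limit = TRIANGLE_BUDGETS[category]
    if triangles > limit:
        issues.append(f"{triangles} triangles exceeds the {category} budget of {limit}")
    texels = sum(width * height for width, height in texture_sizes)
    if texels > TEXEL_BUDGET:
        issues.append(f"{texels} texels exceeds the 4K-equivalent budget")
    return issues
```

Four 2048×2048 maps add up to exactly one 4K map, so an 18,000-triangle weapon with that texture set passes both checks.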

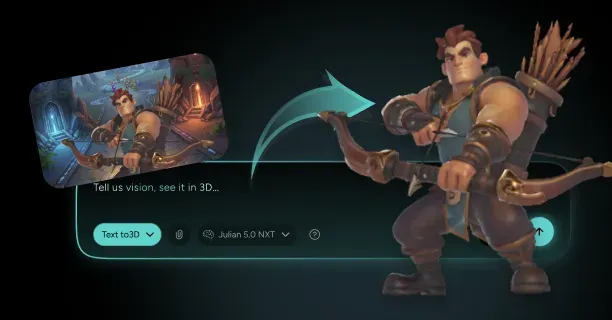

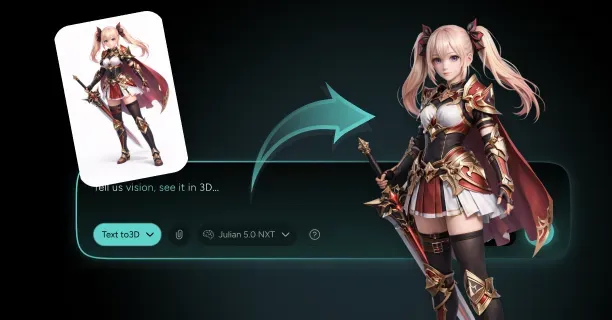

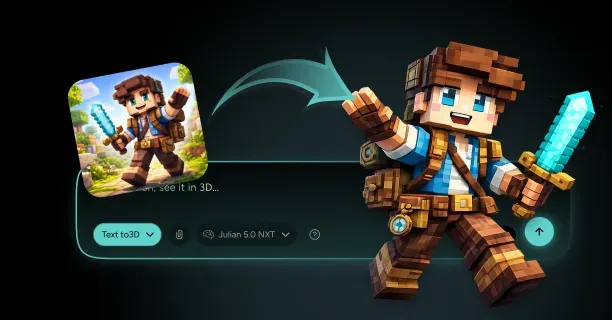

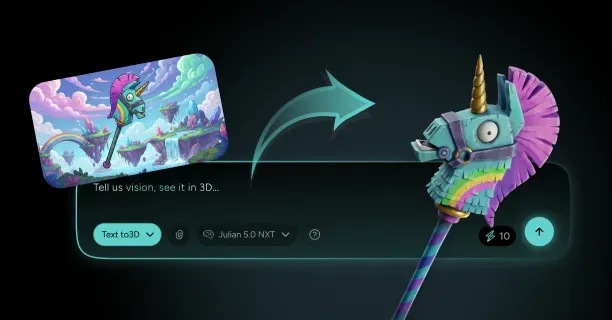

Leverage AI-Powered Consistency Through Image-to-3D Workflows

Our AI analyzes your curated visual inputs (art bible documentation, pillar asset geometry, reference library compositions, and lighting study parameters) to generate consistent tactical hero-shooter 3D assets from reference images. The platform extracts geometric patterns from pillar assets, learning the shape language principles (angular forms for aggressive designs, rounded forms for defensive equipment) and stylistic preferences that define the project's visual identity.

You upload reference images for new weapon designs, and our system cross-references them against style guide parameters, automatically adjusting:

- Polygon distribution to match pillar benchmarks (concentrating geometry at functional areas, reducing density on flat surfaces)

- Material properties to conform to documented PBR ranges

- Geometric proportions to maintain faction-specific design language

This AI-assisted style-syncing accelerates production timelines by 60-80% compared to manual modeling workflows while maintaining consistency across hundreds of assets, and it preserves creative control through parameter adjustment. For hero-quality tactical shooter weapons, modeling time drops from 40-120 hours to 2-6 hours, including refinement iterations.