What Inputs Help You Control Block Size And Maintain Voxel-Style 3D Consistency From An Image?

Inputs that help you control block size and maintain voxel-style 3D consistency from an image are grid resolution parameters, depth estimation algorithms, color quantization techniques, and geometric regularization methods that align surface normals to orthogonal axes (X, Y, Z coordinates). These four input parameters (grid resolution, depth estimation, color quantization, geometric regularization) convert flat 2D reference artwork into volumetric blocky 3D models that exhibit deliberate pixel-art aesthetics: a retro gaming visual style characterized by visible cubic blocks and limited color palettes.

Grid Resolution Defines Voxel Density and Block Scale

Grid resolution defines the voxel count along the X, Y, and Z axes (three-dimensional Cartesian coordinate system), directly influencing the visible block size in the final 3D voxel model.

- A 64×64×64 grid resolution contains 262,144 discrete voxels (calculated by 64³), producing large, visually prominent blocks suitable for:

  - Retro game assets (8-bit and 16-bit era video game graphics)

  - Stylized characters (non-photorealistic character models)

- A 128×128×128 grid configuration contains 2,097,152 voxels (calculated by 128³), supporting medium-detail 3D representations that balance:

  - Computational performance (rendering speed and memory efficiency)

  - Visual fidelity (geometric accuracy and surface detail)

- Grid resolutions of 256×256×256 or higher contain 16,777,216 or more voxels (256³ = 16,777,216), enabling intricate surface detail while preserving the characteristic stepped geometry (stair-stepping visual effect on curved surfaces) of voxel art (3D pixel art aesthetic using cubic blocks).

Software Tools: MagicaVoxel (free voxel editor developed by @ephtracy) enables real-time adjustment of grid resolution parameters through its workspace settings panel interface, while Goxel (open-source voxel modeling tool) offers resolution scaling functionality in the ‘Model’ menu.

Resolution Impact Comparison:

| Grid Size | Voxel Count | Best Use Case | Platform |
|---|---|---|---|
| 32×32×32 to 64×64×64 | 32,768 to 262,144 | Mobile games, abstract forms | iOS/Android |
| 128×128×128+ | 2,097,152+ | Desktop applications, detailed models | Windows/macOS/Linux |

Lower grid resolutions (32×32×32 to 64×64×64) generate chunkier, more abstract forms with large, simplified geometric shapes; higher resolutions (128×128×128+) support fine architectural details (window frames, ornamental features) without sacrificing the blocky aesthetic (visible cubic block structure characteristic of voxel art).
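The voxel counts quoted in this section follow from cubing the per-axis resolution. The short sketch below reproduces them and shows how block size shrinks as resolution grows. The function names, the unit-sized model, and the one-byte-per-voxel storage figure are illustrative assumptions, not properties of any particular editor.

```python
def voxel_count(n: int) -> int:
    """Number of cells in an n x n x n grid."""
    return n ** 3


def block_size(model_extent: float, n: int) -> float:
    """Edge length of one block when a model of the given extent spans n voxels."""
    return model_extent / n


for n in (32, 64, 128, 256):
    count = voxel_count(n)
    # Assumes one byte per voxel (a palette index); real file formats vary.
    mib = count / (1024 ** 2)
    print(f"{n}^3 = {count:,} voxels, ~{mib:.2f} MiB dense, "
          f"block = {block_size(1.0, n):.4f} units")
```

Doubling the per-axis resolution halves the block edge length but multiplies the voxel count by eight, which is why the jump from 128 to 256 is far more expensive than the jump from 32 to 64.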

Depth Estimation Algorithms Reconstruct Volumetric Information

Single-image depth estimation (monocular depth prediction using deep learning neural networks) transforms 2D photographs into 3D spatial data (per-pixel depth maps with distance measurements) by computing distance values for each pixel in the source image.

Key Algorithms:

- MiDaS, developed by Ranftl et al. at Intel Labs and trained by mixing multiple depth datasets, processes monocular images (single-camera photographs) to produce relative depth maps. When normalized, its disparity values range between:

  - 0 (farthest distance from camera)

  - 1 (nearest distance to camera)

- DPT (Dense Prediction Transformer neural network architecture), introduced by Ranftl et al. in the research paper ‘Vision Transformers for Dense Prediction’ (published 2021), replaces the convolutional backbone with a vision transformer and reports improved depth accuracy over earlier convolutional models, including on the NYU Depth V2 benchmark dataset.

The user feeds the reference image (source 2D photograph) into these neural networks (MiDaS or DPT), which generate grayscale depth maps where pixel intensity (brightness value from 0-255) represents distance from the camera plane (virtual image sensor position):

- Brighter pixels = closer objects

- Darker pixels = farther objects

Photogrammetry Requirements: Photogrammetry workflows (multi-view 3D reconstruction processes) typically call for 20 to 100 photographs acquired from overlapping viewpoints with 60-80% image overlap between consecutive frames. The underlying Structure-from-Motion workflow is described in ‘Structure-from-Motion Photogrammetry: A Low-Cost, Effective Tool for Geoscience Applications’ by Westoby et al. (published in the journal Geomorphology by Elsevier in 2012).

Software Options:

- RealityCapture (commercial photogrammetry software by Capturing Reality)

- Meshroom (open-source photogrammetry software by AliceVision)

These tools analyze multi-view datasets (collections of overlapping photographs) to triangulate 3D point clouds (sets of spatial coordinates with XYZ positions), which the user then converts to voxels by sampling spatial occupancy at grid intersections (discrete 3D lattice positions).
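The point-cloud-to-voxel step can be sketched as a simple occupancy test: a grid cell is filled if at least one point falls inside it. This is a minimal illustration using NumPy. The function name `voxelize_points` and the synthetic sphere standing in for a photogrammetry point cloud are assumptions made for the example.

```python
import numpy as np


def voxelize_points(points: np.ndarray, resolution: int) -> np.ndarray:
    """Mark every grid cell that contains at least one point as occupied."""
    mins = points.min(axis=0)
    extent = (points.max(axis=0) - mins).max()
    extent = extent if extent > 0 else 1.0
    # Normalise into [0, 1] using one scale for all axes so blocks stay cubic.
    normalised = (points - mins) / extent
    idx = np.clip((normalised * resolution).astype(int), 0, resolution - 1)
    grid = np.zeros((resolution,) * 3, dtype=bool)
    grid[idx[:, 0], idx[:, 1], idx[:, 2]] = True
    return grid


# Hypothetical stand-in for a photogrammetry point cloud: points on a unit sphere.
rng = np.random.default_rng(0)
cloud = rng.normal(size=(20_000, 3))
cloud /= np.linalg.norm(cloud, axis=1, keepdims=True)

grid = voxelize_points(cloud, resolution=32)
print(grid.shape, int(grid.sum()), "occupied voxels")
```

Because the cloud only samples the surface, the result is a hollow shell. Filling the interior is a separate step.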

Color Quantization Establishes Palette Consistency

Color quantization (image processing technique for palette reduction) converts continuous RGB values (Red-Green-Blue color channel values ranging 0-255 per channel) to discrete palette entries (limited set of distinct colors), eliminating photorealistic gradients (smooth color transitions) that conflict with voxel aesthetics.

Common Palette Configurations:

| Palette Size | Bit Depth | Use Case | Notes |
|---|---|---|---|
| 16 colors | 4-bit | High-contrast voxel models | Retro console look (NES, Game Boy era) |
| 32 colors | 5-bit | Character designs with skin tones | Optimal balance |
| 64 colors | 6-bit | Complex environmental scenes | Enhanced detail |

Developers implement k-means clustering algorithms (unsupervised machine learning technique for color grouping) to group similar colors based on RGB distance.
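As a rough illustration of k-means palette reduction, the sketch below clusters pixels by squared Euclidean distance in RGB space and replaces each pixel with its cluster centroid. It is a plain NumPy version of Lloyd's algorithm written for this example. The function name, the fixed iteration count, and the random test image are assumptions, and production tools often use perceptual color spaces instead of raw RGB.

```python
import numpy as np


def kmeans_palette(pixels: np.ndarray, k: int, iters: int = 10, seed: int = 0):
    """Return (palette, labels) for an (N, 3) array of RGB values."""
    rng = np.random.default_rng(seed)
    pixels = pixels.astype(np.float64)
    # Initialise centroids from randomly chosen pixels.
    palette = pixels[rng.choice(len(pixels), size=k, replace=False)]
    for _ in range(iters):
        # Squared Euclidean RGB distance from every pixel to every centroid.
        dists = ((pixels[:, None, :] - palette[None, :, :]) ** 2).sum(axis=2)
        labels = dists.argmin(axis=1)
        for j in range(k):
            members = pixels[labels == j]
            if len(members):  # leave empty clusters where they are
                palette[j] = members.mean(axis=0)
    # Final assignment against the converged centroids.
    dists = ((pixels[:, None, :] - palette[None, :, :]) ** 2).sum(axis=2)
    labels = dists.argmin(axis=1)
    return palette.round().astype(np.uint8), labels


# Hypothetical input: a small random RGB image.
rng = np.random.default_rng(1)
image = rng.integers(0, 256, size=(32, 32, 3), dtype=np.uint8)
palette, labels = kmeans_palette(image.reshape(-1, 3), k=16)
quantized = palette[labels].reshape(image.shape)
print(len(np.unique(quantized.reshape(-1, 3), axis=0)), "distinct colours")
```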

Key Algorithms:

- Median Cut Algorithm (described in ‘Color Image Quantization for Frame Buffer Display’ by Heckbert, published in ACM SIGGRAPH Computer Graphics in 1982) recursively divides RGB color space (three-dimensional color cube) to determine representative palette entries.

- Octree Quantization (adaptive color reduction technique) constructs hierarchical color trees (8-way tree data structures representing RGB color space with up to 8 levels of depth), enabling dynamic palette adjustment based on image content distribution.

Dithering Options:

- Floyd-Steinberg dithering at 50% intensity diffuses quantization error to neighboring pixels, producing controlled, noise-like patterns

- 0% dithering produces flat color regions with sharp boundaries

AI Integration: Threedium’s AI (3D artificial intelligence platform for e-commerce and web applications) automatically implements perceptual color quantization (human vision-based color reduction technique) that maintains visual hierarchy, clustering dominant hues while preserving contrast between foreground subjects and background elements.

Geometric Regularization Enforces Axis-Aligned Block Surfaces

Geometric regularization (optimization technique for voxel conversion) applies constraints that favor planar surfaces (flat geometric faces) aligned with cardinal axes (X, Y, Z orthogonal coordinate directions), transforming smooth organic shapes (curved biological forms) into stepped approximations.

Regularization Strength Scale (0-1):

- 0.7-1.0 (High): Aggressively force vertices to grid-aligned positions, creating pronounced stair-stepping

- 0.3-0.5 (Moderate): Optimize trade-off between geometric accuracy and stylistic interpretation

- 0 (None): No regularization applied

Mathematical Foundation:

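L1 regularization (Manhattan-norm minimization) penalizes deviations from axis-aligned normals. For a unit surface normal $n = (n_x, n_y, n_z)$, it minimizes:

$$\lVert n \rVert_1 = |n_x| + |n_y| + |n_z|$$

where the components of the surface normal are:

- $n_x$ = X-component

- $n_y$ = Y-component

- $n_z$ = Z-component

For a unit-length normal, this sum is smallest (exactly 1) when the normal points along a single axis and largest ($\sqrt{3}$) when it is fully diagonal.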

This optimization prioritizes surfaces perpendicular to X, Y, or Z coordinate axes (faces with normal vectors aligned to [1,0,0], [0,1,0], or [0,0,1]), generating clean 90-degree angles characteristic of voxel models.
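To see why the L1 penalty favors axis-aligned faces, the sketch below evaluates it for three unit normals. The function name `axis_alignment_penalty` is made up for this example. A real regularizer would add this term to a larger optimization objective.

```python
import numpy as np


def axis_alignment_penalty(normals: np.ndarray) -> np.ndarray:
    """L1 norm of each unit-normalised surface normal (1.0 = axis-aligned)."""
    unit = normals / np.linalg.norm(normals, axis=1, keepdims=True)
    return np.abs(unit).sum(axis=1)


normals = np.array([
    [1.0, 0.0, 0.0],   # axis-aligned face
    [1.0, 1.0, 0.0],   # 45-degree bevel
    [1.0, 1.0, 1.0],   # fully diagonal face
])
print(axis_alignment_penalty(normals))
```

The penalties come out as 1, √2, and √3 respectively, so an optimizer that lowers this value pushes faces toward the coordinate axes.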

Research Reference: Isosurface extraction methods such as dual contouring, detailed in ‘Dual Contouring of Hermite Data’ by Ju et al., published in ACM Transactions on Graphics in 2002, produce intermediate polygon meshes that preserve sharp features, which users then convert to voxel grids through spatial quantization.

Feature Conversion Results:

- Higher values (0.7-1.0): Abstract, Minecraft-inspired forms

- Moderate settings (0.3-0.5): Recognizable silhouettes with voxel texture

AI Prompt Engineering Directs Stylistic Consistency

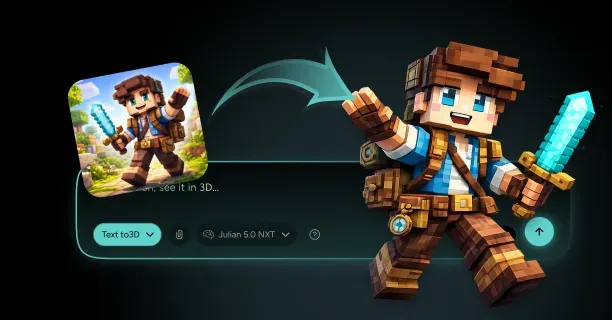

Text-to-3D systems (AI-powered 3D generation tools) like DreamFusion (Google Research) and Magic3D (NVIDIA) process natural language prompts to synthesize voxel-styled geometry.

Effective Prompt Structure:

[subject] in voxel art style, blocky geometry, 8-bit colors

Style Keywords to Include:

- ‘voxel art’

- ‘blocky’

- ‘low-poly’

- ‘pixel aesthetic’

Research Insight: Prompt engineering research by Oppenlaender in ‘A Taxonomy of Prompt Modifiers for Text-To-Image Generation’ (2023) identifies style modifiers as a distinct category of prompt modifier that practitioners use to steer the look of generated images. Placing style tokens early in the prompt (first 1-3 words) is a common heuristic for giving them more influence, although the effect varies by model and sampler.

Negative Prompts (Terms to Avoid):

- ‘smooth’

- ‘realistic’

- ‘high-detail’

- ‘photographic’

Technical Parameters:

| Parameter | Recommended Range | Effect |
|---|---|---|
| CFG Scale (overall) | 7-12 | Enhanced prompt adherence |
| CFG Scale (higher values) | 10-12 | Increased stylistic conformity |
| CFG Scale (lower values) | 7-9 | More creative variation |

ControlNet Conditioning (introduced by Zhang et al. in ‘Adding Conditional Control to Text-to-Image Diffusion Models’, 2023) enables users to provide:

- Edge maps (Canny edge detection outputs)

- Depth guides (MiDaS depth estimation outputs)

Multi-View Image Sets Resolve Spatial Ambiguities

Multi-view photogrammetry (3D reconstruction technique using multiple photographs) requires systematic capture protocols for optimal results.

Capture Requirements:

- Image Count: 50 to 100 overlapping photographs

- Overlap: 60-80% shared field of view between consecutive frames

- Angular Spacing: 10-15 degree rotational increments

- Coverage: 360-degree rotation around target object

Structure-from-Motion (SfM) Process:

Structure-from-Motion computes 3D positions of corresponding feature points (SIFT or ORB keypoints), determining 3D coordinates with sub-millimeter accuracy when properly calibrated.

Software Recommendation: COLMAP, an open-source Structure-from-Motion and multi-view stereo pipeline documented by Schönberger and Frahm in ‘Structure-from-Motion Revisited’ (2016), analyzes image sets to produce dense point clouds containing millions of spatial samples.

Feature Detection Parameters:

| Algorithm | Keypoints per Image | Matching Ratio | Reliability |
|---|---|---|---|
| SIFT | 2,000-5,000 | Above 0.8 | High-confidence |
| ORB | 1,000-3,000 | Above 0.7 | Moderate |

Common Issues:

- Fewer than 30 images: Reconstruction artifacts and gaps

- Poor lighting: Inconsistent feature detection

- Limited angles: Missing geometric data in occluded regions

Optimal Setup:

- Turntable capture with controlled lighting

- 360-degree coverage prevents blind spots

- Consistent illumination from fixed sources

Signed Distance Fields Enable Resolution-Independent Voxelization

Signed Distance Fields (SDF) encode distance values from each spatial point to the nearest surface:

- Negative values: Positions inside objects (interior volume)

- Positive values: Positions outside objects (exterior empty space)

- Zero values: Points exactly on surface boundary

Advantages:

- Resolution Independence: Sample at any grid resolution (32³ to 512³)

- Consistent Geometry: Shape preservation across resolution changes

- Efficient Storage: 60-80% memory reduction with Truncated SDFs

Surface Boundary Detection: Points with SDF values between -0.5 and 0.5 voxel units identify surface boundaries, ensuring consistent geometry across resolution changes.
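The resolution independence described above can be illustrated by sampling one analytic SDF at several grid sizes and applying the ±0.5 voxel surface band. The sphere SDF, the [-1, 1]³ sampling cube, and the function names are assumptions chosen to keep the sketch self-contained.

```python
import numpy as np


def sphere_sdf(points: np.ndarray, radius: float = 0.8) -> np.ndarray:
    """Signed distance to a sphere at the origin: negative inside, positive outside."""
    return np.linalg.norm(points, axis=-1) - radius


def voxelize_sdf(sdf, resolution: int, band: float = 0.5):
    """Sample an SDF on a [-1, 1]^3 grid; return (solid, surface) boolean grids."""
    voxel = 2.0 / resolution
    # Voxel centres along one axis.
    axis = -1.0 + voxel * (np.arange(resolution) + 0.5)
    pts = np.stack(np.meshgrid(axis, axis, axis, indexing="ij"), axis=-1)
    d = sdf(pts) / voxel               # distance expressed in voxel units
    return d <= 0.0, np.abs(d) <= band


for res in (16, 32, 64):
    solid, surface = voxelize_sdf(sphere_sdf, res)
    fill = solid.mean()                # fraction of the cube that is solid
    print(res, int(solid.sum()), int(surface.sum()), round(float(fill), 3))
```

The filled fraction stays close to the same value at every resolution, which is the sense in which the shape is preserved while only the block size changes.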

Technical Reference: “A Fast Marching Level Set Method for Monotonically Advancing Fronts” by Sethian, Proceedings of the National Academy of Sciences (1996), describes propagation techniques with computational complexity O(N log N).

Iso-Surface Threshold Control:

- 0.0: Single-voxel-thick surfaces

- ±1.0: Multi-voxel boundaries with internal fill

Spatial Quantization Snaps Vertices to Grid Positions

Spatial quantization rounds vertex coordinates to the nearest voxel grid intersection, enforcing perfect alignment with block boundaries.
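In its simplest form, this rounding is a single array operation. The sketch below assumes a uniform grid anchored at the origin, and the function name `snap_to_grid` is illustrative.

```python
import numpy as np


def snap_to_grid(vertices: np.ndarray, voxel_size: float) -> np.ndarray:
    """Round each coordinate to the nearest multiple of voxel_size."""
    return np.round(vertices / voxel_size) * voxel_size


verts = np.array([[0.12, 0.49, 0.88],
                  [0.26, 0.51, 0.74]])
print(snap_to_grid(verts, voxel_size=0.25))
# [[0.   0.5  1.  ]
#  [0.25 0.5  0.75]]
```

Vertices that land on the same grid point after snapping can then be merged, which is the basis of the vertex clustering approach discussed later in this section.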

Quantization Tolerance Settings:

| Tolerance | Effect | Use Case |
|---|---|---|
| 0.1 voxel units | Sharp 90-degree corners | Aggressive blockiness |
| 0.3-0.5 units | Chamfered edges | Balanced approach |
| 0.7+ units | Smooth approximations | Subtle voxelization |

De-smoothing Algorithms:

Inverse subdivision operations convert smooth meshes into faceted approximations. Vertex clustering, introduced by Rossignac and Borrel in “Multi-resolution 3D Approximations for Rendering Complex Scenes” (Modeling in Computer Graphics, Springer, 1993), describes techniques that:

- Merge vertices within spatial bins

- Reduce polygon counts by 70-90%

- Maintain overall shape integrity

Edge-Preserving Features:

- Maintains sharp creases at material boundaries

- Preserves silhouette edges

- Prevents over-simplification of recognizable features

Edge Detection Preserves Sharp Transitions

Edge detection algorithms identify high-contrast boundaries in source images, translating them into distinct voxel separations that enhance readability.

Canny Edge Detection Process:

- Gaussian smoothing to reduce noise

- Gradient magnitude calculation for edge strength

- Non-maximum suppression for edge thinning

- Hysteresis thresholding for edge linking

Threshold Configuration:

| Threshold Range | Detected Features | Application |
|---|---|---|
| 0.1-0.2 (Low) | Subtle texture variations | Fine detail preservation |
| 0.5-0.7 (High) | Major structural boundaries | Clean separation |

Sobel Operators:

- Compute directional gradients using 3×3 convolution kernels

- Estimate edge strength and orientation at each pixel

- Map to voxel grid positions for discrete placement
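The 3×3 Sobel kernels can be applied with plain NumPy, as in the sketch below. The helper names, the edge-replicated border handling, and the synthetic half-dark, half-bright test image are assumptions made for illustration. Image libraries provide optimized equivalents.

```python
import numpy as np

SOBEL_X = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=np.float64)
SOBEL_Y = SOBEL_X.T


def filter3x3(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Apply a 3x3 kernel (cross-correlation) with edge-replicated borders."""
    padded = np.pad(image.astype(np.float64), 1, mode="edge")
    h, w = image.shape
    out = np.zeros((h, w))
    for dy in range(3):
        for dx in range(3):
            out += kernel[dy, dx] * padded[dy:dy + h, dx:dx + w]
    return out


def sobel_magnitude(image: np.ndarray) -> np.ndarray:
    """Gradient magnitude from horizontal and vertical Sobel responses."""
    gx = filter3x3(image, SOBEL_X)
    gy = filter3x3(image, SOBEL_Y)
    return np.hypot(gx, gy)


# Synthetic test image: dark left half, bright right half.
img = np.zeros((8, 8))
img[:, 4:] = 1.0
edges = sobel_magnitude(img)
print(edges[0])
# [0. 0. 0. 4. 4. 0. 0. 0.]
```

The response is nonzero only in the two columns that straddle the brightness step, which is where a voxelizer would place a block boundary.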

Edge-Aware Voxelization Benefits:

- Increases sampling density near detected boundaries

- Allocates additional voxels for fine details (facial features, clothing seams)

- Improves visual clarity in complex scenes

- Maintains foreground/background separation through deliberate block placement

Hysteresis Thresholding: Dual thresholds (e.g., 0.2 and 0.5) create connected edge chains, preventing fragmented boundaries that compromise geometric continuity.

How Do You Convert A Single Image Into A Blocky/Voxel-Style 3D Model That Stays Readable?

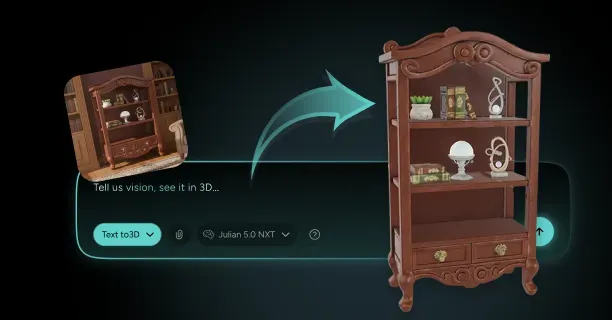

To convert a single image into a blocky/voxel-style 3D model that stays readable, upload your reference image to an AI-powered voxel conversion platform, configure voxel resolution parameters, and employ depth-inference algorithms that reconstruct 3D geometry from 2D visual data.

Upload your reference image to a platform that incorporates monocular depth-inference algorithms, which reconstruct volumetric data from flat visual cues. These algorithms infer hidden geometry, compute spatial relationships, and construct 3D structures from pixels. Converting a single image into a volumetric voxel model is an ill-posed inverse problem because countless 3D shapes can generate identical 2D projections.

A 2023 SIGGRAPH research paper reports that, in complex scenes, baseline convolutional neural network models without advanced depth priors fail to accurately reconstruct approximately 30% of the occluded geometry that is not visible in the source image, resulting in artifacts or incomplete structures.

Users configure voxel resolution parameters to determine detail level and visual style. Low-resolution grids of 32 voxels per axis produce abstract, blocky looks, while grids of 128×128×128 voxels or more preserve finer details.

| Resolution Type | Grid Size | Visual Result |
|---|---|---|
| Low Resolution | 32×32×32 | Abstract, blocky appearance |
| Medium Resolution | 64×64×64 | Balanced detail and simplicity |
| High Resolution | 128×128×128+ | Fine detail preservation |

The Art of Voxel: A Comprehensive Digital Art Guide identifies a cubic grid of 128 voxels along each spatial axis as the optimal grid size for detailed voxel character models. Resolution directly determines the voxel model’s readability:

- Lower-density voxel grids (e.g., below 64×64×64) emphasize geometric simplification

- Higher-density voxel grids (e.g., 128×128×128 or greater) preserve subtle surface variations

Select from AI-driven methods like Generative Adversarial Networks (GANs) and rule-based algorithmic procedural generation techniques according to the source image complexity and desired output quality. GANs are trained on large-scale 3D shape datasets to produce 3D voxel grids from 2D inputs, executing depth-inference with spatial positioning derived from statistical patterns learned during adversarial training.

NVIDIA’s official AI Research Blog documents that state-of-the-art Generative Adversarial Networks developed after 2020 synthesize 64×64×64 voxel models in approximately 5 seconds on high-performance GPUs such as NVIDIA RTX 3090 or A100.

TripoSR, an open-source transformer-based 3D reconstruction model, uses a pretrained vision transformer image encoder to extract features from pixel patterns in the input photograph and reconstruct 3D geometry from a single view.

Conversion algorithms use monocular depth maps estimated by neural networks to direct voxel placement and depth-axis coordinate assignment in 3D voxel space. These single-channel grayscale images encode distance from the camera as pixel intensity, so each pixel’s position and brightness map to three-dimensional Cartesian coordinates (X, Y, Z).
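One simple way to turn a depth map into voxels is to treat it as a heightfield: each pixel becomes a column whose length is proportional to its brightness. The sketch below assumes a depth map already normalized to [0, 1] with 1 meaning nearest. The function name and the relief-style fill are illustrative choices, and this approach models only the visible front surface, not occluded geometry.

```python
import numpy as np


def depth_to_voxels(depth: np.ndarray, depth_layers: int) -> np.ndarray:
    """Turn an (H, W) depth map in [0, 1] (1 = nearest) into a (W, H, D) grid.

    Each pixel becomes a column filled from the back of the grid up to its
    quantised depth, producing a relief-style solid.
    """
    # Quantise brightness to an integer column height in [0, depth_layers].
    heights = np.round(np.clip(depth, 0.0, 1.0) * depth_layers).astype(int)
    z = np.arange(depth_layers)[None, None, :]
    grid_hw = z < heights[:, :, None]          # (H, W, D), True where filled
    # Reorder to (X, Y, Z) with Y pointing up instead of image rows pointing down.
    return grid_hw[::-1].transpose(1, 0, 2)


# Hypothetical 2x2 depth map standing in for a network's output.
depth = np.array([[0.0, 0.5],
                  [1.0, 0.25]])
grid = depth_to_voxels(depth, depth_layers=4)
print(grid.shape, int(grid.sum()))
print(grid.sum(axis=2))   # column heights indexed by (x, y)
```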

Kaedim, a commercial AI-powered 3D model generation platform, integrates depth map estimation with statistical shape priors learned from 3D object databases during training to resolve ambiguities when multiple depth interpretations match identical visual evidence.

Neural Radiance Fields (NeRFs), implicit neural representations for view synthesis, model scenes as multilayer perceptron (MLP) networks that approximate continuous volumetric scene functions mapping 3D coordinates to color and density values, and they perform well at capturing fine details and complex lighting.

The tri-plane factorized representation used in EG3D and similar generative models provides memory-efficient storage for 3D data by projecting volumetric information onto the XY, XZ, and YZ coordinate planes in Cartesian space, reducing computational overhead for higher-resolution outputs.

Artists apply color palette reduction through clustering algorithms to preserve cohesive and readable aesthetics:

- K-means clustering

- Median cut algorithm

- Color quantization to decrease distinct RGB color values

VoxelModeler Weekly reports that converting color data from true color palettes with 8 bits per RGB channel (16.7 million colors) to indexed color palettes with 256 distinct colors decreases file size by approximately 75% while maintaining visual identity.

MagicaVoxel, a free voxel art editor and rendering software, features built-in quantization tools that group similar hues and apply Floyd-Steinberg or ordered dithering to approximate color gradients within limited palettes.

Developers maintain silhouette integrity by prioritizing edge detection and retention of object outline features during 3D conversion:

- Canny edge detection

- Sobel operator-based boundary identification

- Laplacian operators for detecting luminance or color discontinuities

Marching Cubes, a classic isosurface extraction algorithm developed by Lorensen and Cline (1987), generates polygonal meshes from 3D volumetric scalar fields representing density or occupancy values, creating smooth transitions while preserving underlying cubic structures.

Greedy meshing, an optimization technique for voxel-to-mesh conversion, streamlines voxel meshes by merging adjacent voxels of identical colors into larger axis-aligned rectangular polygons (quads), decreasing polygon counts without altering visual appearance.
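The merging idea behind greedy meshing can be shown on a single 2D layer of color indices. This is a simplified sketch: it covers one slice only, treats 0 as empty, and uses a hypothetical function name. Full implementations repeat the sweep for every slice and face direction.

```python
import numpy as np


def greedy_quads(layer: np.ndarray):
    """Cover equal-valued cells of a 2D layer with rectangles (row, col, h, w, value).

    Cells equal to 0 are treated as empty.
    """
    rows, cols = layer.shape
    used = np.zeros_like(layer, dtype=bool)
    quads = []
    for r in range(rows):
        for c in range(cols):
            value = layer[r, c]
            if value == 0 or used[r, c]:
                continue
            # Grow to the right while the colour matches and cells are free.
            w = 1
            while c + w < cols and layer[r, c + w] == value and not used[r, c + w]:
                w += 1
            # Grow downward while the whole row segment matches.
            h = 1
            while (r + h < rows
                   and np.all(layer[r + h, c:c + w] == value)
                   and not used[r + h, c:c + w].any()):
                h += 1
            used[r:r + h, c:c + w] = True
            quads.append((r, c, h, w, int(value)))
    return quads


layer = np.array([[1, 1, 2],
                  [1, 1, 2],
                  [0, 3, 3]])
print(greedy_quads(layer))
# [(0, 0, 2, 2, 1), (0, 2, 2, 1, 2), (2, 1, 1, 2, 3)]
```

Here eight filled cells collapse into three quads, and the rendered result is unchanged because each quad covers only cells of one color.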

Users configure stylization and simplification threshold parameters to determine how image-to-voxel conversion algorithms process:

- Fine textures

- Color transitions

- Lighting variations

Artistic simplification reduces complex photographic details to essential cubic and rectangular volumetric shapes, improving readability. GAN-based voxelization workflows trained on artistic style datasets apply learned artistic rules to balance recognizable forms with stylistic simplicity.

Artists improve voxel quality via iterative adjustments of:

- Resolution settings

- Bit depth per color channel (8-bit, 16-bit, 24-bit)

- Polygon reduction thresholds

- Voxel merging parameters

Voxel editing software features such as brush tools, selection tools, and fill operations enable users to make manual edits to fix unclear results or preserve distinctive shape characteristics essential for object recognition.

Through Threedium, a 3D content creation and optimization platform, users optimize voxel placement to balance geometric accuracy with the characteristic chunky aesthetic, maintaining visual clarity across viewing distances and rendering engines.

Developers address occlusion by selecting from:

- Minimal reconstruction methods that only model visible geometry

- AI-based hallucination techniques that predict occluded regions using trained models

Verify that occlusion reconstruction maintains geometric plausibility and topological consistency between visible and occluded regions by orbiting the voxel model through a full 360 degrees and checking that hidden surfaces transition smoothly into exposed areas.

Preprocess the input image to improve monocular depth estimation accuracy:

- Crop to extract primary subjects

- Adjust contrast to enhance shadows and highlights

- Choose images with well-defined directional lighting

Image preprocessing steps deliver clearer pixel-level feature data processed by neural networks, minimizing ambiguity and creating voxel models with correct scale and dimensional ratios between model components.

Convert and export the voxel model in compatible file formats:

| Format | Use Case |
|---|---|
| OBJ | General 3D modeling |
| FBX | Game engines and animation |
| GLTF | Web and real-time applications |
| PLY | Point cloud and research applications |

Target platforms include:

- Unity game engine (Unity Technologies)

- Unreal Engine 5 (Epic Games)

- Blender 3D modeling software (Blender Foundation)

Export processes run optimization passes that remove voxels completely surrounded by other voxels, since these have no exposed faces and are invisible from external viewpoints. This decreases file size and GPU processing cost during real-time rendering. Ensure that exported models preserve readability at the target resolution and viewport sizes (mobile, desktop, VR) and keep the intended visual style while remaining efficient enough for real-time game engines or browser-based 3D applications that use the WebGL rendering API.
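The hidden-voxel pass can be sketched as a six-neighbor test on a boolean occupancy grid. The function name `cull_interior` and the solid 8×8×8 test cube are assumptions for illustration. Exporters may apply additional rules, such as keeping interiors for models that will be cut or destroyed at runtime.

```python
import numpy as np


def cull_interior(grid: np.ndarray) -> np.ndarray:
    """Return a copy of a boolean voxel grid with fully enclosed voxels removed."""
    padded = np.pad(grid, 1, mode="constant", constant_values=False)
    core = padded[1:-1, 1:-1, 1:-1]
    # A voxel is interior when all six face neighbours are occupied.
    enclosed = (
        padded[:-2, 1:-1, 1:-1] & padded[2:, 1:-1, 1:-1] &
        padded[1:-1, :-2, 1:-1] & padded[1:-1, 2:, 1:-1] &
        padded[1:-1, 1:-1, :-2] & padded[1:-1, 1:-1, 2:]
    )
    return core & ~enclosed


solid = np.ones((8, 8, 8), dtype=bool)
shell = cull_interior(solid)
print(int(solid.sum()), "->", int(shell.sum()))
# 512 -> 296
```

For this solid cube, the 6×6×6 interior (216 voxels) is dropped while the outer shell is untouched, so the model looks identical from outside.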