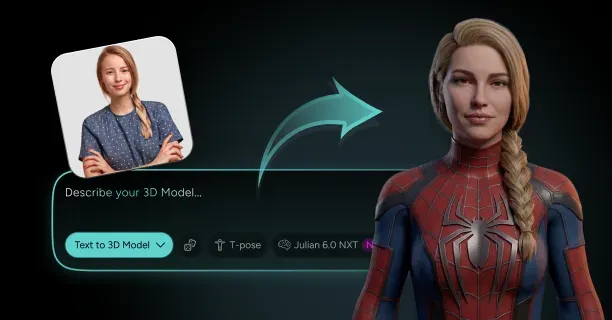

How Do You Turn Yourself Into a Spider-Man-Style 3D Character From a Photo?

To turn yourself into a Spider-Man-style 3D character from a photo, upload a high-resolution frontal facial image (minimum 1920×1080 pixels) to Threedium's AI-powered reconstruction platform above. Convolutional neural networks analyze 68 facial landmarks to construct a polygonal mesh. The platform then overlays the classic red-and-blue suit textures with black webbing patterns, enhances muscle definition by 15-20% across the shoulders and chest, and generates web-slinging poses through inverse kinematics. The result exports as game-ready topology with PBR materials in GLTF 2.0, FBX, or OBJ format within 1 to 15 minutes.

Upload Requirements

Users should capture a well-lit frontal photograph displaying their entire face without obstructions such as sunglasses, hats, or heavy shadows. Users should position the camera at eye level approximately three feet (about 0.91 meters) away to minimize lens distortion and preserve the geometric accuracy of facial proportions.

Use natural daylight or evenly distributed indoor lighting to eliminate harsh shadows that interfere with facial landmark detection during Threedium's AI analysis process.

Users should maintain a neutral expression with eyes open and mouth closed, as extreme expressions reduce the AI's ability to accurately map the user's baseline facial structure. Users should also remove accessories that obscure facial features. Otherwise the AI must interpolate the missing data, which compromises anatomical accuracy in the final 3D character because it relies on statistical estimation rather than direct geometric measurement.

Facial Geometry Reconstruction

Threedium's proprietary Julian NXT technology employs convolutional neural networks trained on millions of human face scans to extract depth information from the user's single photograph. The AI detects 68 facial landmarks including:

- Eye corners

- Nose tip

- Mouth edges

- Jawline contours
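The article does not say which 68-point layout the platform uses. As a minimal sketch, assuming the widely used iBUG 300-W ordering (the one followed by many open-source landmark detectors), the 68 indices group into facial regions as shown below. `LANDMARK_REGIONS` and `region_of` are illustrative names, not part of any Threedium API.

```python
# Index ranges follow the common iBUG 300-W 68-point convention.
# This is an assumption: the platform's actual layout is not documented here.
LANDMARK_REGIONS = {
    "jawline": range(0, 17),
    "right_eyebrow": range(17, 22),
    "left_eyebrow": range(22, 27),
    "nose": range(27, 36),
    "right_eye": range(36, 42),
    "left_eye": range(42, 48),
    "mouth": range(48, 68),
}


def region_of(index: int) -> str:
    """Return the facial region that a landmark index belongs to."""
    for name, indices in LANDMARK_REGIONS.items():
        if index in indices:
            return name
    raise ValueError(f"landmark index out of range: {index}")


# The regions cover every one of the 68 indices exactly once.
total = sum(len(indices) for indices in LANDMARK_REGIONS.values())
print(total)           # 68
print(region_of(30))   # nose (index 30 is the nose tip in this layout)
```

Grouping indices this way makes it easy to address "eye corners" or "jawline contours" as named sets rather than raw numbers.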

Deep learning models estimate spatial coordinates of the 68 detected facial landmarks by processing visual features including:

- Shading gradients

- Texture patterns

- Statistical correlations derived through training on datasets

The system constructs a polygonal mesh by interpolating geometry between landmark points, producing thousands of triangular faces that model the continuous surfaces of the user's cheeks, forehead, nose, and chin.

| Traditional 3D Modeling | AI-Powered Generation |
|---|---|
| 20 to 100+ hours | Minutes |
| Requires skilled artists | Automated process |
| Multiple photographs needed | Single photo sufficient |
| Controlled studio environments | No special setup required |

Spider-Man Costume Application

Threedium's Julian NXT 3D generation platform applies the classic red-and-blue suit texture, with black webbing lines extending geometrically across the torso, arms, and legs. The pattern follows traditional Spider-Man costume designs from Marvel Comics and the Spider-Man film adaptations, including:

- Sam Raimi trilogy

- Marc Webb films

- Marvel Cinematic Universe productions

The user's facial features remain recognizable beneath a form-fitting mask that preserves the user's eye shape, nose bridge, and jawline structure while incorporating Spider-Man's signature large white eye lenses measuring approximately 40-50 millimeters (approximately 1.6-2.0 inches) in width.

The system enhances muscle definition across the shoulders, chest, and arms to match superhero body proportions, widening the shoulders by 15-20% and making abdominal segmentation more prominent.

These body modifications keep the user's face recognizable while conforming to the aesthetic of Marvel's Spider-Man character design, balancing photorealistic anatomical accuracy against comic book stylization.
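One simple way to picture the 15-20% shoulder widening is as a horizontal scale applied to shoulder-region vertices about the body's centerline. The sketch below assumes vertices are `(x, y, z)` tuples with `x` running left to right; `widen_shoulders` is a hypothetical helper, and a production pipeline would likely blend the scale with a falloff weight so the widened region joins the torso smoothly.

```python
def widen_shoulders(vertices, factor=1.15, center_x=0.0):
    """Scale x-coordinates away from the vertical centerline.

    vertices: list of (x, y, z) tuples for the shoulder region only.
    factor:   1.15 to 1.20 corresponds to the 15-20% range quoted above.
    """
    if not 1.0 <= factor <= 1.20:
        raise ValueError("factor outside the 0-20% widening range")
    return [(center_x + (x - center_x) * factor, y, z) for x, y, z in vertices]


# Hypothetical shoulder points 20 cm either side of the centerline (metres).
shoulders = [(-0.20, 1.45, 0.0), (0.20, 1.45, 0.0)]
widened = widen_shoulders(shoulders, factor=1.15)
print([round(x, 3) for x, _, _ in widened])  # [-0.23, 0.23]
```

Because only `x` changes, height and depth are untouched, which is one reason the face (left out of the vertex set) stays recognizable.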

Pose Configuration Options

Users can select from preset poses to position their Spider-Man-style 3D character:

- Web-slinging (Spider-Man's signature method of traversal using synthetic webbing)

- Wall-crawling (Spider-Man's ability to adhere to vertical surfaces)

- Mid-air combat stances that demonstrate dynamic movement of superhero action sequences

The rigging system automatically generates a skeletal armature with joints at:

- Shoulders

- Elbows

- Wrists

- Hips

- Knees

- Ankles

Inverse kinematics (IK) calculations keep limb positions within anatomically realistic bounds when repositioning hands for web-shooting gestures or adjusting leg angles for crouching poses, preventing hyperextension and unnatural joint configurations.

Julian NXT's pose generation system preserves natural weight distribution by following the character's center of gravity and balance points, so the character appears grounded rather than floating even in dynamic action poses.
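To illustrate how IK can respect joint limits, here is a minimal planar two-bone solver (shoulder, elbow, hand) based on the law of cosines. It is a textbook sketch, not Julian NXT's implementation: the function name, the 2D simplification, and the default elbow limits are all assumptions made for the example.

```python
import math


def solve_two_bone_ik(upper, lower, tx, ty,
                      min_elbow_deg=30.0, max_elbow_deg=180.0):
    """Planar two-bone IK. Returns (shoulder_deg, elbow_deg).

    elbow_deg is the interior angle between the bones (180 = fully straight),
    clamped to [min_elbow_deg, max_elbow_deg] so the joint never hyperextends.
    If a limit is hit, the hand falls short of the target instead of bending
    unnaturally.
    """
    dist = math.hypot(tx, ty)
    # Clamp the reach so a triangle with sides (upper, lower, dist) exists.
    dist = max(abs(upper - lower) + 1e-9, min(upper + lower, dist))

    # Law of cosines for the interior elbow angle.
    cos_elbow = (upper ** 2 + lower ** 2 - dist ** 2) / (2 * upper * lower)
    elbow = math.degrees(math.acos(max(-1.0, min(1.0, cos_elbow))))
    elbow = max(min_elbow_deg, min(max_elbow_deg, elbow))

    # Angle between the upper bone and the shoulder-to-target line.
    cos_inner = (upper ** 2 + dist ** 2 - lower ** 2) / (2 * upper * dist)
    inner = math.degrees(math.acos(max(-1.0, min(1.0, cos_inner))))
    shoulder = math.degrees(math.atan2(ty, tx)) - inner
    return shoulder, elbow


# A target beyond arm's reach yields a straight arm, never a hyperextended one.
print(solve_two_bone_ik(0.3, 0.3, 2.0, 0.0))
```

With both bones 0.3 units long and a target at (0.3, 0.3), the solver places the upper arm along the x-axis and bends the elbow to a right angle.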

Game-Ready Topology Generation

Threedium generates clean quad-based mesh topology with polygon density distributed evenly across the surface, meeting game engine performance requirements for real-time rendering (interactive frame rates of 30-60+ FPS).

| Body Region | Polygon Allocation | Purpose |
|---|---|---|
| Facial Region | 5,000 to 8,000 triangles | Expression details like smile lines and brow furrows |
| Body | 3,000 to 5,000 triangles | Optimize tradeoff between visual quality and rendering efficiency |

Automated UV unwrapping creates a two-dimensional texture coordinate map that projects the Spider-Man costume pattern onto the three-dimensional mesh without the stretching or seam artifacts that degrade visual quality.

The UV layout partitions the geometry into distinct texture islands for facial features, torso panels, and limb sections, so each body region can be scaled to its own resolution according to how visible it is.
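Per-island resolution scaling is usually expressed as texel density: how many texture pixels cover one unit of surface. The sketch below uses the standard relationship between UV area, surface area, and texture size. The island figures are hypothetical values chosen for illustration, not measurements from a Threedium export.

```python
import math


def texel_density(texture_px: int, uv_area: float, surface_area_m2: float) -> float:
    """Texels per metre for one UV island.

    texture_px:       width of the (square) texture in pixels.
    uv_area:          fraction of the 0-1 UV square the island occupies.
    surface_area_m2:  area of the matching mesh region in square metres.
    """
    return texture_px * math.sqrt(uv_area / surface_area_m2)


# Hypothetical layout: (UV fraction, surface area in m^2) per island.
islands = {
    "face": (0.25, 0.06),
    "torso": (0.30, 0.60),
    "limbs": (0.35, 1.10),
}

densities = {
    name: texel_density(2048, uv, area) for name, (uv, area) in islands.items()
}
for name, density in densities.items():
    print(f"{name}: {density:.0f} texels per metre")
```

In this made-up layout the face receives several times the density of the limbs even though it occupies less UV space, because its surface area is much smaller.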

This automated process removes the need for manual retopology and UV editing, steps that typically take technical artists 8-12 hours to complete.

Export Format Compatibility

Users can export their Spider-Man-style 3D character in industry-standard formats, including:

- GLTF 2.0 (GL Transmission Format 2.0, royalty-free 3D file format by Khronos Group)

- FBX (Filmbox format developed by Autodesk for 3D asset interchange)

- OBJ (Wavefront Object format, widely-supported mesh-only 3D format)

The GLTF export includes PBR (Physically-Based Rendering) materials with:

- Base color

- Metallic

- Roughness

- Normal maps
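For reference, the four channels above map onto the glTF 2.0 material schema as `pbrMetallicRoughness` (base color, metallic, roughness) plus a separate `normalTexture`. The fragment below builds such a material in Python. The material name, texture indices, and factor values are placeholders, and a complete file would also need the `textures` and `images` arrays that the indices refer to.

```python
import json

# Property names come from the glTF 2.0 specification; the values are illustrative.
suit_material = {
    "name": "spider_suit",  # hypothetical material name
    "pbrMetallicRoughness": {
        "baseColorTexture": {"index": 0},
        # Factors multiply the texture channels (roughness in G, metallic in B).
        "metallicFactor": 0.0,
        "roughnessFactor": 1.0,
        "metallicRoughnessTexture": {"index": 1},
    },
    "normalTexture": {"index": 2, "scale": 1.0},
}

gltf_fragment = {"asset": {"version": "2.0"}, "materials": [suit_material]}
print(json.dumps(gltf_fragment, indent=2))
```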

FBX files embed skeletal rig data and blend shape targets for facial animation, facilitating direct integration into animation software such as:

- Threedium

- MotionBuilder

The OBJ format provides a lightweight mesh-only option for static display in web viewers, or for 3D printing applications that require conversion to STL.

Each export keeps the coordinate system orientation and scale units specified during generation, avoiding import alignment issues when moving assets between different 3D applications.
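Orientation mismatches typically arise because tools disagree on which axis points up: glTF defines +Y as up with units in meters, while some applications use +Z. As a hedged illustration of the conversion an importer performs, the hypothetical helper below rotates a right-handed Y-up point into a right-handed Z-up frame and optionally rescales units.

```python
def y_up_to_z_up(vertex, scale=1.0):
    """Convert a right-handed Y-up point to a right-handed Z-up point.

    Equivalent to a +90 degree rotation about the X axis, followed by a
    uniform scale (for example 100.0 to go from metres to centimetres).
    """
    x, y, z = vertex
    return (x * scale, -z * scale, y * scale)


# The Y-up "up" vector becomes the Z-up "up" vector.
print(y_up_to_z_up((0.0, 1.0, 0.0)))

# A point 1.8 m above the origin, converted to centimetres in a Z-up scene.
print(y_up_to_z_up((0.0, 1.8, 0.0), scale=100.0))
```

Because the conversion is a pure rotation plus uniform scale, it preserves handedness and proportions, so nothing is mirrored or distorted.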

These export options allow the user's personalized Spider-Man avatar to import cleanly into game development projects, virtual social platforms such as VRChat and Rec Room, metaverse environments, and AR experiences built on ARKit (Apple), ARCore (Google), or WebXR, without format conversion errors.

Which Suit Pattern, Mask Options, Web Poses, and Body Proportions Make a Spider-Style Version of Yourself Look Right?

A Spider-style version of yourself looks right when four elements work together: webbing tessellation patterns that stay legible across curved body surfaces, mask configurations that translate the user's facial structure into heroic geometry, web-slinging poses that convey kinetic energy through asymmetrical body language, and proportional systems that elongate the character's limbs to the eight head-height standard while preserving the user's recognizable facial features.

The suit pattern defines the character's visual identity through color blocking that creates contrasting zones, emblem placement, and material finish properties that shape the surface texture.

Mask configuration options control the character's perceived emotional range through eye shape and lens treatment. Each design decision should heighten the character's sense of superhuman capability without breaking the connection to the anatomy in the user's source photograph, so that heroic proportions and feature enhancements remain recognizable as the original user while expressing the strength, agility, and stature associated with the Spider-Man archetype.

Webbing Tessellation Systems

The designer creates webbing patterns using tessellation density control to keep the pattern visually consistent across high-curvature anatomical areas, including:

- Shoulder joints

- Elbow joints

- Knee joints

This prevents pattern stretching and warping, keeping the webbing spacing uniform at 2-3 centimeters between intersection points regardless of surface curvature or mesh topology complexity.

Webbing Pattern Types

| Pattern Type | Characteristics | Spacing | Visual Effect |
|---|---|---|---|
| Classic Grid | Uniform diamond tessellation | 2-3 cm between intersections | Seamless visual flow |
| Organic | Irregular strand thickness | 1-4 mm diameter variation | Biological realism |
| Geometric | Angular faceted patterns | 60-120° vertex angles | Technological enhancement |

The classic grid webbing style, associated with traditional Spider-Man costume designs, uses a uniform diamond tessellation with 2-3 centimeters between webbing intersection points, producing a seamless visual flow that extends across the 3D character model's curved topology without gaps or distortion.

The 3D artist applies the webbing texture using UV projection techniques that minimize texture stretching at joint articulation zones:

- Shoulders

- Elbows

- Hips

- Knees

These regions undergo significant mesh deformation whenever the character's skeletal rig is reposed for different body positions, action poses, and animation sequences.

Organic webbing pattern designs mimic biological spider silk structural characteristics with:

- Irregular strand thickness variation ranging from 1-4 millimeters diameter

- Asymmetric branching angles measuring between 15-45 degrees at junction points

- Naturalistic flow patterns that conform to underlying muscle anatomy, including:

  - Pectoralis major chest muscle group

  - Deltoid shoulder muscle group

  - Quadriceps thigh muscle group

The geometric webbing design style adopts angular faceted pattern configurations with sharp-edged polygonal surfaces featuring vertex angles measuring between 60-120 degrees at edge intersections.

The Threedium platform provides texture mapping algorithms that automatically adjust tessellation density based on a curvature analysis at each mesh vertex, keeping the pattern visually consistent across curved body surfaces and irregular geometry without manual UV unwrapping.

Webbing Depth Effects

The 3D artist configures webbing depth using normal mapping, a surface detail technique that creates the illusion of 0.5-2 millimeters of surface relief without adding geometry, producing micro-shadows that enhance the perception of three-dimensionality under dynamic lighting.
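A normal map can be derived from a height map of the webbing by taking finite differences between neighboring pixels. The sketch below does this in plain Python for a tiny grid. The function names and the `strength` parameter are illustrative, and real tools differ in filter kernels and in the sign convention of the green channel.

```python
import math


def height_to_normal(height, x, y, strength=1.0):
    """Tangent-space normal at pixel (x, y) of a 2D height grid.

    Uses central differences, with neighbours clamped at the grid borders.
    """
    rows, cols = len(height), len(height[0])
    left = height[y][max(x - 1, 0)]
    right = height[y][min(x + 1, cols - 1)]
    up = height[max(y - 1, 0)][x]
    down = height[min(y + 1, rows - 1)][x]
    dx = (right - left) * 0.5 * strength
    dy = (down - up) * 0.5 * strength
    length = math.sqrt(dx * dx + dy * dy + 1.0)
    return (-dx / length, -dy / length, 1.0 / length)


def encode_rgb(normal):
    """Pack a unit normal from [-1, 1] into 8-bit RGB."""
    return tuple(round((c * 0.5 + 0.5) * 255) for c in normal)


flat = [[0.0] * 3 for _ in range(3)]
print(encode_rgb(height_to_normal(flat, 1, 1)))  # (128, 128, 255)

# A raised webbing strand running down the middle column.
strand = [[0.0, 1.0, 0.0] for _ in range(3)]
print(height_to_normal(strand, 0, 1))  # x component tilts away from the strand
```

The flat case produces the familiar light-blue color of an unperturbed normal map. Beside the strand, the normal leans away from the raised line, which is what creates the shaded edge under lighting.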

Raised webbing design technique benefits:

- Casts micro-shadow effects measuring less than 5 millimeters in extent

- Delineates webbing pattern edges at oblique viewing angles ranging from 15-75 degrees

- Provides viewing-angle-independent visibility advantages

- Maintains pattern clarity across diverse camera positions

Recessed webbing design technique creates:

- Panel division visual effects that segment the suit surface

- Distinct modular sections conveying layered multi-plate armor construction

- Depth channels measuring 1-3 millimeters that separate individual armor plate segments

- Shadow-defined boundaries implying technological reinforcement

Webbing line weight determines how readable the pattern remains at camera distances beyond 5 meters.

Optimal line weight selection requires balancing:

- Thin webbing lines (below 1 millimeter width): Become invisible in medium camera shots

- Thick webbing lines (above 4 millimeters width): Appear as structural costume seams

- Recommended range: 1-4 millimeters for optimal distance visibility
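A rough way to reason about these thresholds is to estimate how many screen pixels a line covers under a pinhole camera model. The sketch below assumes a 1920-pixel-wide frame and a 60-degree horizontal field of view. Both are hypothetical defaults, and the results shift with resolution, lens, and anti-aliasing.

```python
import math


def projected_width_px(line_width_mm, distance_m,
                       image_width_px=1920, horizontal_fov_deg=60.0):
    """Approximate on-screen width of a line facing the camera (pinhole model)."""
    focal_px = (image_width_px / 2) / math.tan(math.radians(horizontal_fov_deg) / 2)
    return focal_px * (line_width_mm / 1000.0) / distance_m


for width_mm in (0.5, 1.0, 4.0):
    print(width_mm, "mm ->", round(projected_width_px(width_mm, 5.0), 2), "px")
```

Under these assumptions a 1 mm line at 5 meters covers roughly a third of a pixel, while a 4 mm line covers a little over one pixel. This illustrates why thinner lines are the first to fade as the camera pulls back.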

Color Palette Configuration

Select color combinations using HSV values that maintain 60-80% saturation for heroic vibrancy without oversaturating into garish territory.

Classic Color Schemes

The classic red-and-blue colorway, established in Spider-Man's original 1962 comic debut, divides the suit surface into primary color blocks:

Red Color Areas (HSV: 0° hue, 75% saturation, 85% brightness):

- Torso region including chest and abdomen

- Arm segments including upper and lower limbs

- Mask head covering

Medium-dark Blue Color Areas (HSV: 220° hue, 70% saturation, 60% brightness):

- Lateral body sides along the ribcage

- Leg segments comprising lower limbs

- Underarm axillary regions
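Most texturing tools accept RGB or hex values rather than HSV, so it can help to convert the figures above. This small sketch uses Python's standard `colorsys` module. `hsv_to_hex` is an illustrative helper name.

```python
import colorsys


def hsv_to_hex(hue_deg, sat_pct, val_pct):
    """Convert HSV (degrees, percent, percent) to a hex color string."""
    r, g, b = colorsys.hsv_to_rgb(hue_deg / 360.0, sat_pct / 100.0, val_pct / 100.0)
    return "#{:02x}{:02x}{:02x}".format(round(r * 255), round(g * 255), round(b * 255))


print(hsv_to_hex(0, 75, 85))    # #d93636  (suit red)
print(hsv_to_hex(220, 70, 60))  # #2e5299  (suit blue)
```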

The red-and-blue color division creates a slimming optical illusion by positioning the darker blue values on peripheral body areas.

This produces the V-shaped, inverted-triangle torso silhouette characteristic of superhero character design:

- Three head-widths (approximately 60-66 centimeters) at shoulder landmarks

- One-and-a-half head-widths (approximately 30-36 centimeters) at waist landmark

Alternative Color Schemes

| Character | Color Scheme | HSV Values | Narrative Concept |
|---|---|---|---|
| Symbiote | Black-and-white | Black: 0°, 0%, 12%; White: 0°, 0%, 95% | Alien organism influence |
| Miles Morales | Black-and-red | Black: 0°, 0%, 15%; Red: 0°, 85%, 90% | Distinct character identity |
| Spider-Gwen | White-pink-black | White: 0°, 0%, 92%; Pink: 320°, 65%, 85% | Individual personality traits |

The black-and-white symbiote colorway features:

- Matte black base color with non-reflective light-absorbing surface finish

- Glossy white spider emblems with reflective light-bouncing surface finish

- Material contrast between matte and glossy surface properties

- Suggestion of extraterrestrial non-human origin

Cultural Pattern Integration

The user incorporates cultural patterns reflecting their ethnic, regional, or national heritage through:

- Geometric motifs such as Celtic knots, African patterns, Islamic tessellations

- Symbolic color associations carrying culturally-specific meanings

- Iconographic elements including culturally-significant animals, plants, symbols

Integration Guidelines:

- Apply patterns at 15-25% opacity

- Position as subtle background underlays beneath primary webbing

- Create layered compositional structure for visual depth

- Prevent cultural elements from overwhelming core Spider-Man visual language

Center the spider emblem on the sternum at the xiphoid process, with legs extending 8-12 centimeters across the pectoral muscles to create bilateral symmetry.

Emblem Specifications:

- Traditional emblems: Eight legs with 45-degree radial spacing

- Stylized versions: Four or six legs with 60-90 degree angular separation

- Scale proportionally to torso width:

  - Adult male proportions: 18-22 centimeters horizontally

  - Female proportions: 14-18 centimeters horizontally

Mask Geometry and Eye Configuration

Configure mask options using full-coverage geometry that wraps anatomically accurate skull structure:

- 22-24 centimeters from crown to chin

- 14-16 centimeters in width at zygomatic arch landmarks

The mask's underlying cranial form needs proper eye-socket depth: position the lenses 12-15 millimeters from the implied eyeball position rather than leaving them floating as flat decals on the mask surface.

Eye Shape Options

Large teardrop-shaped eyes:

- Measurements: 6-8 centimeters height, 4-5 centimeters width

- Effect: Suggests approachability

Narrow angular eyes:

- Measurements: 5-6 centimeters height with 30-45 degree upward cant

- Effect: Projects intensity

Eye Lens Geometry Options:

- Compound curves with 15-25 millimeter radius producing optical depth

- Flat planes creating graphic simplicity for stylized rendering

Eye Treatment Effects

| Treatment Type | Opacity/Index | Visual Effect | Purpose |
|---|---|---|---|
| Reflective | 0.4-0.6 IOR | Environmental lighting bounce | Creates mystery |
| Transparent | 40-60% opacity | Reveals underlying eye shapes | Maintains human connection |

Facial Structure Preservation:

- Cheekbone prominence with 8-12 millimeter zygomatic projection

- Jawline definition following mandibular angle at 110-125 degrees

- Temporal hollowing creating 4-6 millimeter depth variation

Threedium's facial reconstruction algorithms analyze your source photo's bone structure using 68-point facial landmark detection, automatically generating mask geometry that preserves unique proportions.

Mask Construction Details:

- Position seams along anatomical landmarks: mandibular border, orbital rim, nasal bridge centerline

- Panel divisions suggest technological construction with 1-2 millimeter raised edges

- Layered plates overlapping 3-5 millimeters at seam intersections

- Chin and jaw taper smoothly with sternocleidomastoid muscle definition visible as 8-10 millimeter raised bands

Web-Slinging Pose Mechanics

Position web poses using extended arms with the fingers held in the two-finger web-shooting gesture:

Hand Configuration:

- Index and middle fingers extend fully

- Ring and pinky fingers curl to the palm at 90-degree metacarpophalangeal flexion

Body Positioning:

- Opposite arm reaches backward 30-45 degrees from the shoulder for balance

- Torso twists 15-25 degrees, creating dynamic asymmetry

- Spine angles in an S-curve configuration:

  - 10-15 degree lumbar lordosis

  - 5-10 degree thoracic kyphosis

- Pelvis tilts forward 8-12 degrees while the chest opens backward

One leg straightens to 170-175 degrees at the knee joint while the opposite leg bends to 45-60 degrees, capturing mid-swing kinetic energy.
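The angle ranges above lend themselves to a simple preset check. As a sketch, the table below encodes them as (minimum, maximum) pairs in degrees and reports any joint that falls outside its range. The key names and the `out_of_range` helper are invented for this example and do not correspond to a real rig's bone names.

```python
# Ranges in degrees, taken from the web-slinging guidelines above.
SWING_POSE_RANGES = {
    "rear_arm_reach": (30, 45),
    "torso_twist": (15, 25),
    "lumbar_lordosis": (10, 15),
    "thoracic_kyphosis": (5, 10),
    "pelvic_tilt": (8, 12),
    "extended_knee": (170, 175),
    "bent_knee": (45, 60),
}


def out_of_range(pose):
    """Return {joint: (value, (low, high))} for each angle outside its range."""
    return {
        name: (pose[name], limits)
        for name, limits in SWING_POSE_RANGES.items()
        if name in pose and not limits[0] <= pose[name] <= limits[1]
    }


candidate = {"torso_twist": 20, "extended_knee": 172, "bent_knee": 90}
print(out_of_range(candidate))  # {'bent_knee': (90, (45, 60))}
```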

Wall-Crawling Poses

Configure using hand and foot placement that suggests adhesive grip:

- Fingers splayed at 15-25 degree abduction angles

- Slight pressure deformation visible at contact points measuring 2-4 millimeter surface compression

- Body positioned at 45-90 degree angles defying gravitational orientation

Crouching Poses:

- Compress body into coiled configuration

- Knees drawn toward chest achieving 30-45 degree hip flexion

- Weight balanced on balls of feet at 60-70 degree ankle plantarflexion

- Arms positioned for explosive movement

- Muscular tension visible as 5-8 millimeter surface definition in quadriceps and gastrocnemius

Camera Angle Selection

Heroic Positioning:

- Below-horizon positioning 15-30 degrees below eye level to emphasize heroic stature

- High angles 20-40 degrees above eye level to create a vulnerable perspective

- Extreme foreshortening with limbs extending toward the camera at 10-20 degree convergence angles

Your pose's negative space creates interesting shapes occupying 30-40% of compositional area, preventing visual clutter while adding structural strength.

Asymmetrical poses with 20-40 degree rotational offset between shoulder and hip axes suggest motion and narrative progression.

Heroic Proportion Systems

Apply body proportions using an eight head-height measurement from crown to heel, compared with realistic proportions of seven to seven-and-a-half head-heights, creating elongation that communicates superhuman capability.

Body Measurement Guidelines

Leg Length Enhancement:

- Extend by adding 8-12% height to femur and tibia bones

- Preserve ground contact believability while creating long-limbed appearance

Shoulder Width Standards:

| Body Type | Measurement | Head-Width Ratio |
|---|---|---|
| Male-coded | 60-66 centimeters | 3 head-widths |
| Female-coded | 50-55 centimeters | 2.5 head-widths |

Muscle Definition Enhancement:

- Deepen shadows between muscle groups to 15-25% value contrast

- Sharpen form transitions at 30-45 degree angle changes

- Create carved appearance that reads clearly in stylized rendering

Detailed Muscle Specifications

Abdominal Muscles:

- Clear six-pack or eight-pack segmentation measuring 3-4 centimeters per rectus abdominis section

- Visible external obliques at 10-15 degree diagonal angles

- Serratus anterior muscles showing 4-5 individual "finger" projections

Upper Body Proportions:

- Trapezius muscles elevate 2-3 centimeters to create neck power without excessive bulk

- Deltoids round prominently with 8-10 centimeter anterior-posterior depth

- Hands at ninety percent of face height measuring 18-20 centimeters from wrist to fingertip

- Feet at one head-height in length (22-24 centimeters) providing visual foundation

- Waist narrowed to one-and-a-half head-widths (30-36 centimeters)

Threedium's reconstruction algorithms automatically apply heroic proportion adjustments to your source photo's anatomy using skeletal landmark detection.

Automated Enhancement Process:

- Systematically lengthening limbs by 10-15%

- Broadening shoulders by 15-20%

- Defining musculature with 20-30% enhanced surface relief

- Preserving recognizable facial features

Personal Characteristic Retention:

- Facial asymmetry measuring 2-4 millimeter deviation from midline

- Distinctive bone structure with unique zygomatic and mandibular angles

- Proportional relationships making the character identifiably you rather than generic template

Threedium's Julian NXT technology bridges photorealistic reconstruction and stylized character design, applying controlled exaggeration that enhances natural anatomy without replacing it.

Why Does Threedium Create Cleaner Spider-Man-Style 3D Characters From a Real Selfie?

Threedium creates cleaner Spider-Man-style 3D characters from a real selfie by using generative AI models that reconstruct complete 3D facial geometry from a single 2D image. This approach produces standardized mesh topology with automated UV unwrapping and optimized polygon counts, results that traditional photogrammetry does not deliver without manual cleanup. Threedium's proprietary Julian NXT technology processes user selfies using AI-inferred geometry trained on large-scale facial datasets (millions of diverse face-scan pairs), avoiding the noisy and inconsistent geometry that affects multi-photo reconstruction methods while delivering a riggable, skeletal-animation-ready model optimized to under 2MB for web performance.

Users upload one frontal selfie to the generator interface, and Threedium's generative AI model reconstructs the user's entire head geometry without requiring multiple photographs from different angles. Traditional photogrammetry requires dozens of images captured under controlled lighting to triangulate depth information, yet it still produces noisy mesh surfaces with irregular polygon distribution that need extensive manual cleanup by 3D artists.

Threedium's single-image 3D reconstruction pipeline avoids this bottleneck by training deep neural networks on massive datasets containing millions of diverse human faces (varied ethnicities, ages, and anatomies). This enables the network to predict occluded facial features, ear geometry, and cranial curvature that a single camera angle cannot capture directly.

Threedium's AI model fills geometric gaps with statistically probable facial structures derived from its training data rather than leaving holes or introducing artifacts, outputting a watertight mesh (a closed 3D surface without gaps) in a single processing pass without iterative refinement.

Threedium's generative approach eliminates the reconstruction uncertainty that characterizes photogrammetric point-cloud generation, where shadows, reflections, and lens distortion inject geometric errors that propagate throughout the reconstruction pipeline and degrade final mesh quality.

The user's Spider-Man mask conforms seamlessly to the AI-generated head model because the underlying facial structure preserves anatomically correct proportions, without the bumps, divots, or inverted normals that photogrammetry produces when it has too few input photographs.

The 3D model that users receive from Threedium's generator features clean mesh topology with edge loops that align with natural facial muscle groups, enabling professional riggers to attach skeletal armatures without manual retopology.

Generative AI models produce standardized, animation-ready mesh structures because they learn polygon flow patterns from training datasets containing thousands of production-ready character models, which encode industry best practices for deformable geometry.

Vertex placement follows a structured grid that anticipates where animators need higher polygon density for expressive facial animation:

- Around the eyes

- Around the mouth

- Along the jawline

Polygon counts stay lower where surface deformation during animation is minimal:

- The forehead

- The cheeks

- The neck

| Region | Polygon Density | Animation Priority |
|---|---|---|
| Eyes & Mouth | High (2000-3000 vertices) | Critical for expressions |
| Forehead & Cheeks | Medium (200-400 vertices) | Low movement areas |
| Neck | Low (100-200 vertices) | Minimal deformation |

Threedium's standardized mesh topology enables the Spider-Man mask to deform realistically during speech animation or emotional expressions, with the webbing pattern stretching and contracting along natural facial contours rather than showing the tearing or bunching artifacts that indicate poor topology.

Threedium's AI algorithm performs automated UV unwrapping that projects the user's facial texture and the Spider-Man suit pattern onto the 3D mesh without:

- Stretching (texture distortion from improper scaling)

- Overlapping (UV island intersection)

- Seam misalignment (texture discontinuity at UV boundaries)

UV unwrapping converts three-dimensional surface geometry into two-dimensional texture space. Manually unwrapping complex organic shapes like human faces requires specialized technical knowledge to minimize distortion while making efficient use of texture resolution.

Threedium's generative model learns UV layouts during training on professional character models, placing UV seams along natural occlusion boundaries where texture discontinuities remain invisible during real-time rendering:

- Behind the ears

- Under the chin

- At the hairline

The Spider-Man mask's webbing pattern displays crisply across the user's cheekbones and forehead because the UV islands allocate texel density by visibility, assigning more texture resolution to high-visibility facial features while reducing resolution for less important areas like the back of the head.

The resulting 3D model features a polygon count tuned for web performance, typically keeping the complete character (full-body mesh with textures) under 2MB so that it loads in under a second in WebAR experiences without requiring an app download, making the technology accessible directly through mobile web browsers.
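To see how a budget of this size might break down, the back-of-envelope sketch below estimates uncompressed geometry size from triangle and vertex counts. The vertex count, the 32-byte vertex layout (position, normal, UV as 32-bit floats), and the 16-bit indices are assumptions made for illustration. Actual exports may use compression and different attributes.

```python
def estimate_mesh_bytes(triangles, vertices, bytes_per_vertex=32, bytes_per_index=2):
    """Uncompressed geometry size: vertex buffer plus triangle index buffer.

    32 bytes per vertex = position (12) + normal (12) + UV (8).
    16-bit indices are valid while the mesh has fewer than 65,536 vertices.
    """
    return vertices * bytes_per_vertex + triangles * 3 * bytes_per_index


budget = 2 * 1024 * 1024                       # the 2MB target quoted above
geometry = estimate_mesh_bytes(15_000, 9_000)  # hypothetical 15k-triangle character
print(geometry)           # 378000
print(budget - geometry)  # 1719152 bytes left for textures and rig data
```

Under these assumptions the geometry takes well under a quarter of the budget, so most of the 2MB would go to textures.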

Generative AI models learn how to allocate polygons during training on professional character models. They increase vertex density where surface curvature changes rapidly, such as:

- Around the nose (nasal prominence)

- Eye sockets (orbital cavities)

- Lips (oral aperture)

They use larger polygons (lower vertex density) across flat or gently curved regions, including:

- The forehead (frontal bone surface)

- Cheeks (zygomatic planes)

- Neck (cervical cylinder)

This distribution maximizes visual quality per polygon.
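The allocation idea above can be sketched as a weighted budget split. This is a minimal illustration, not the learned behavior of Threedium's model: the region areas, the curvature weights, and the `allocate_polygons` helper are all hypothetical, and a trained network would not use an explicit formula like this.

```python
def allocate_polygons(regions, budget):
    """Split a polygon budget across regions in proportion to area * curvature weight.

    regions maps a region name to (surface_area, curvature_weight).
    Both values are illustrative assumptions, not measured data.
    """
    weights = {name: area * curvature for name, (area, curvature) in regions.items()}
    total = sum(weights.values())
    return {name: round(budget * weight / total) for name, weight in weights.items()}

# Hypothetical regions: (surface area in cm^2, relative curvature weight).
REGIONS = {
    "nose": (20.0, 4.0),
    "eye_sockets": (30.0, 3.0),
    "lips": (15.0, 3.0),
    "forehead": (60.0, 1.0),
    "cheeks": (90.0, 1.0),
    "neck": (120.0, 0.5),
}

allocation = allocate_polygons(REGIONS, budget=10_000)

# Polygons per unit area: high-curvature regions end up denser.
density = {name: allocation[name] / REGIONS[name][0] for name in REGIONS}
```

With these weights the nose receives several times more polygons per unit area than the forehead, which is the behavior the text describes.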

| Performance Metric | Threedium AI | Traditional Photogrammetry |
|---|---|---|
| File Size | Under 2MB | 50-500MB before decimation |
| Polygon Count | 8,000-15,000 | 5-50 million (requires 95-99% reduction) |
| Frame Rate | 60+ FPS | Often under 30 FPS |
| Loading Time | Sub-second | 30+ seconds |

Threedium's polygon distribution achieves visual fidelity comparable to high-resolution scans (photogrammetry or professional 3D scanner output) with roughly one-tenth the vertex count (a 90% polygon reduction). This allows smooth real-time rendering at 60+ frames per second on mobile devices (smartphones and tablets) and in web browsers (Chrome, Safari, Firefox) that cannot render dense meshes at interactive frame rates.

Threedium's AI model (deep neural network for facial reconstruction) learns from a large-scale dataset containing millions of diverse human faces encompassing different:

- Ages (infants to elderly)

- Ethnicities (African, Asian, Caucasian, Hispanic, Middle Eastern, and mixed ancestries)

- Bone structures (skeletal facial architecture variations including jaw width, cheekbone prominence, nasal bridge height)

- Soft-tissue distributions (muscle and fat layer variations affecting facial contours)

This diversity supports accurate reconstruction of each user's unique facial geometry rather than imposing a generic, one-size-fits-all face template that erases personal features.

Generative adversarial networks (a generator and a discriminator trained in competition) and diffusion models (iterative denoising networks) learn the statistical distribution of human facial features from millions of face photographs paired with corresponding 3D scans (ground-truth depth data from structured-light or laser scanning systems). In doing so, they encode the relationships between 2D appearance (photographic pixel patterns) and 3D structure (the underlying geometric depth).

This extensive training enables the AI to detect subtle anatomical cues in the user's selfie, including the prominence of the cheekbones, the width of the jaw, and the depth of the eye sockets, and to infer the corresponding 3D geometry with millimeter-level precision.

Threedium's generative AI produces standardized, animation-optimized mesh topology (consistent polygon organization with clean edge flow) regardless of:

- Input photo quality (resolution, sharpness, compression artifacts)

- Lighting conditions (illumination direction, intensity, color temperature, including harsh shadows or dim environments)

- Camera angle variations (pitch, yaw, roll deviations up to ±15 degrees from frontal orientation)

As a result, casual smartphone selfies, captured without special equipment, setup, or photography expertise, yield production-ready 3D assets.

Traditional photogrammetry (multi-view reconstruction technique) exhibits extreme sensitivity to input quality variations, where:

- Shadows (lighting occlusions) generate false depth readings

- Specular highlights (bright reflections) disrupt feature detection algorithms

- Off-axis camera angles inject perspective distortion

| Comparison Metric | Threedium AI | Traditional Photogrammetry |
|---|---|---|
| Success Rate (Casual Photos) | 95%+ | 30-50% |
| Processing Time | 10-30 seconds | 30 minutes to 6 hours |
| Manual Cleanup Required | None | 2-6 hours typical |

Users avoid failed reconstructions (processing attempts that produce no usable output) and unusable geometry (3D models with holes, inverted faces, non-manifold edges, or missing surfaces) because Threedium's AI model develops robust feature extraction (reliable landmark detection under varied conditions) during training on millions of diverse examples.

Threedium's generative model, trained on complete 3D head scans, infers geometry for regions that the user's selfie cannot capture:

- The ears (external auricular structures)

- The back of the head (occipital and posterior parietal regions)

- The underside of the chin (submental area)

It predicts anatomically plausible structures for these regions from statistical correlations learned between visible features (frontal facial characteristics observable in the photograph) and typical hidden geometry (for example, average ear size and position relative to face width, or typical cranial curvature relative to forehead slope).

Single-image reconstruction (3D generation from one photograph) encounters an inherent geometric ambiguity (fundamental uncertainty where multiple 3D shapes could produce the same 2D image): a photograph contains zero depth information about surfaces facing away from the camera (back-facing geometry with surface normals pointing away from lens), yet a complete 3D model suitable for real-time rendering requires a watertight mesh (closed 3D surface topology with no holes or gaps) with no missing faces (all polygons present to form continuous surface).
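The watertight requirement can be checked mechanically. The sketch below uses a common edge-counting test, not anything specific to Threedium: a closed manifold surface has every edge shared by exactly two faces. The `is_watertight` name and the tetrahedron data are illustrative, and the check does not verify consistent face orientation.

```python
from collections import Counter

def is_watertight(faces):
    """Return True if every undirected edge is shared by exactly two faces.

    faces is a list of vertex-index tuples (triangles or quads).
    An edge used once marks a hole; an edge used three or more times is non-manifold.
    """
    edge_counts = Counter()
    for face in faces:
        for i in range(len(face)):
            a, b = face[i], face[(i + 1) % len(face)]
            edge_counts[(min(a, b), max(a, b))] += 1
    return bool(edge_counts) and all(count == 2 for count in edge_counts.values())

# A tetrahedron is the smallest closed triangle mesh.
TETRAHEDRON = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (0, 2, 3)]

closed = is_watertight(TETRAHEDRON)          # all six edges appear twice
with_hole = is_watertight(TETRAHEDRON[:-1])  # dropping a face leaves open edges
```

A single frontal photograph only constrains the front-facing part of such a mesh, so the remaining faces have to come from inference rather than measurement.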

Traditional photogrammetry handles occluded regions poorly, either outputting these areas as empty or populating them with placeholder geometry that doesn't match actual user anatomy, producing visible seams when the camera viewpoint exceeds the capture angle range.

Users receive a complete Spider-Man character, a 3D model with continuous geometry covering all surfaces, that displays correctly from every viewing angle (front, side, back, top, bottom, and all intermediate perspectives) because Threedium's AI learns statistical relationships between visible and occluded features during training on millions of complete 3D head scans.

Clean topology (organized, quad-dominant polygon flow with minimal triangulation) makes the model riggable, meaning the mesh is ready for skeletal armature binding and character animation. Edge loops (concentric polygon rings) are placed to support natural facial deformation during:

- Speech animation (phoneme-based lip sync and jaw movement)

- Facial expressions (emotion display through muscle activation patterns)

- Head movements (rotation, tilting, nodding controlled by neck joints)

The polygon flow follows the direction of muscle fiber contraction to prevent pinching, tearing, or unnatural bulging during deformation.
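Whether a mesh is quad-dominant can be measured directly from its face list. The sketch below is a generic check with a hypothetical `topology_summary` helper and made-up sample faces. It counts triangles, quads, and n-gons and reports the share of quads; it does not assess edge-loop placement, which needs a more involved analysis.

```python
from collections import Counter

def topology_summary(faces):
    """Count face types and report the fraction of quads.

    faces is a list of vertex-index tuples; faces with five or more
    vertices are counted as n-gons.
    """
    kinds = Counter(
        "tris" if len(face) == 3 else "quads" if len(face) == 4 else "ngons"
        for face in faces
    )
    total = len(faces)
    return {
        "tris": kinds["tris"],
        "quads": kinds["quads"],
        "ngons": kinds["ngons"],
        "quad_ratio": kinds["quads"] / total if total else 0.0,
    }

# Hypothetical patch: three quads in a strip plus one triangle.
SAMPLE_FACES = [(0, 1, 2, 3), (3, 2, 4, 5), (5, 4, 6, 7), (0, 1, 8)]
summary = topology_summary(SAMPLE_FACES)
```

A rigging pipeline could use a ratio like this as a quick sanity check before binding a skeleton, with the acceptable threshold left to the studio.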

Professional character riggers (technical artists specializing in skeletal animation setup and facial rigging) require specific geometric patterns optimized for deformation:

- Concentric loops (circular edge flow patterns) positioned around the eyes and mouth

- Radial patterns (spoke-like edge flow) radiating from the nose

- Parallel lines (aligned edge flow) extending along the neck

| Topology Feature | Purpose | Benefit |
|---|---|---|
| Concentric loops around eyes | Supports eyelid closing | Natural blinking animation |
| Radial patterns from nose | Accommodates nostril flaring | Realistic breathing effects |
| Parallel neck lines | Enables head rotation | Smooth head movements |

Threedium's generative AI (topology-aware neural network) generates these animation-optimized patterns automatically (without manual artist intervention) because the training dataset contains thousands of production rigs (professionally created character animation setups from AAA games and films), training the model to distinguish which polygon arrangements facilitate smooth deformation and which produce artifacts.

Threedium's generative approach (single-pass neural network inference) bypasses the computationally expensive steps that traditional photogrammetry requires, including:

- Dense point-cloud generation (computing millions of 3D points through multi-view triangulation, typically requiring 10-30 minutes of GPU processing)

- Iterative mesh refinement (repeatedly optimizing mesh quality through energy minimization algorithms, requiring 5-15 iterations)

- Multi-view stereo matching (finding corresponding feature points across dozens of photographs using SURF algorithms, requiring 5-20 minutes)

Skipping these steps reduces computational cost by 100-1000x and enables real-time processing on standard cloud servers.

Photogrammetric reconstruction pipelines (multi-stage processing systems such as COLMAP) perform dozens of sequential processing stages, including:

- Feature detection (identifying keypoints using SURF or ORB algorithms, 2-10 minutes)

- Camera pose estimation (computing camera position and orientation, 5-20 minutes)

- Sparse reconstruction (creating initial 3D point cloud, 3-15 minutes)

- Dense reconstruction (generating millions of dense 3D points, 10-60 minutes)

- Mesh generation (converting point cloud to triangular surface, 5-30 minutes)

- Texture projection (mapping source photographs onto 3D mesh, 3-15 minutes)

Each stage takes minutes to hours depending on input photo count (20-200 images) and target quality settings (draft vs. high quality), and total pipeline time ranges from 30 minutes to 6 hours for a single character.
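The per-stage figures above can be added up to see how they relate to the quoted total. The sketch below only sums the six itemized ranges; the dictionary and function names are illustrative. The itemized stages come to roughly 28-150 minutes, so the upper end of the 30-minute-to-6-hour total presumably reflects stages and settings that the list does not itemize.

```python
# Per-stage duration ranges in minutes, copied from the list above.
STAGE_MINUTES = {
    "feature detection": (2, 10),
    "camera pose estimation": (5, 20),
    "sparse reconstruction": (3, 15),
    "dense reconstruction": (10, 60),
    "mesh generation": (5, 30),
    "texture projection": (3, 15),
}

def total_range(stages):
    """Sum the lower and upper bounds of sequential stage durations."""
    low = sum(lower for lower, _ in stages.values())
    high = sum(upper for _, upper in stages.values())
    return low, high

low, high = total_range(STAGE_MINUTES)
print(f"Itemized stages: {low}-{high} minutes")  # Itemized stages: 28-150 minutes
```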

| Processing Comparison | Threedium AI | Traditional Photogrammetry |
|---|---|---|
| Processing Time | 10-30 seconds | 30 minutes to 6 hours |
| Speed Improvement | 100-1000x faster | Baseline |
| Infrastructure Cost | $0.001-0.01 per generation | $3,000-20,000 in software/hardware |
| Technical Expertise Required | None | Specialized 3D reconstruction knowledge |
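The speed improvement row can be cross-checked against the processing times in the same table. This is plain arithmetic on the table's own figures, with hypothetical names: dividing the slowest and fastest photogrammetry times by the fastest and slowest AI times gives the widest possible speedup range, which brackets the quoted 100-1000x.

```python
def speedup_range(fast_seconds, slow_seconds):
    """Return (smallest, largest) speedup implied by two duration ranges."""
    fast_low, fast_high = fast_seconds
    slow_low, slow_high = slow_seconds
    return slow_low / fast_high, slow_high / fast_low

AI_SECONDS = (10, 30)                             # 10-30 seconds
PHOTOGRAMMETRY_SECONDS = (30 * 60, 6 * 60 * 60)   # 30 minutes to 6 hours

smallest, largest = speedup_range(AI_SECONDS, PHOTOGRAMMETRY_SECONDS)
print(f"{smallest:.0f}x to {largest:.0f}x")  # 60x to 2160x
```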

Users receive their Spider-Man character in seconds (typically 10-30 seconds from upload to download) rather than the 30 minutes to 6 hours of a photogrammetry pipeline. Threedium's AI model executes a single forward pass through a neural network (a deep convolutional architecture with 50-200 million parameters), converting the user's 2D selfie into complete 3D geometry (a fully formed mesh with UVs and topology) in one operation, without the iterative refinement loops that photogrammetry uses.

This computational efficiency supports interactive applications in which users personalize their Spider-Man appearance and see the result within seconds, providing the fast feedback loops that modern web experiences need to maintain user engagement.