How Do You Turn Yourself Into a Batman-Style 3D Character From a Photo?

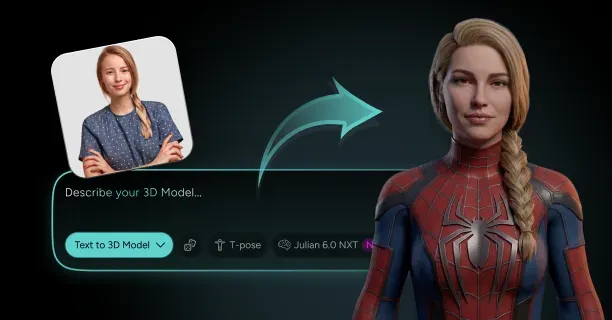

To turn yourself into a Batman-style 3D character from a photo, upload a high-resolution frontal image to Threedium's AI-powered reconstruction platform, which processes facial geometry and applies Batman-specific armor elements to produce a production-ready 3D model within five minutes. The platform uses proprietary Julian NXT technology to construct a base mesh, add cowl and cape elements, and generate stylized textures with minimal user input.

Photo Requirements

Capture a reference photo that shows your whole face clearly. AI-powered reconstruction builds a three-dimensional head model from a single photo by extracting geometric data from depth cues, shadows, and facial landmarks.

Traditional photogrammetry workflows require a minimum of 20-30 photos for basic head reconstruction to achieve acceptable levels of mesh accuracy, as documented in photogrammetry software documentation and industry reconstruction guides.

From one clear frontal photograph, Threedium's platform reconstructs three-dimensional facial structure using deep learning algorithms trained on geometric patterns from thousands of human face topologies.

Base Mesh Generation

The base mesh comes from reference photos using automated vertex placement that maps key anatomical points:

- Cheekbones

- Jawline

- Brow ridge

- Nasal bridge

The reconstruction engine places 8,000-12,000 vertices to form the initial facial topology, preserving your facial proportions while optimizing mesh structure for Batman-style modifications. You get a clean quad-based mesh ready for sculpting, without artifacts that degrade animation quality.

Digital Sculpting Refinement

Digital sculpting improves anatomical accuracy by adding secondary and tertiary surface details that define your facial features. The platform applies micro-displacement to capture:

- Skin texture variations

- Pore patterns

- Subtle asymmetries

This makes the character recognizably you beneath the Batman cowl.

High-resolution detailing in professional digital sculpts achieves polygon density of 1-20 million polygons, as documented in digital sculpting software documentation.

Threedium's automated sculpting pipeline works within this range, so geometric density is high enough to capture fine facial details while staying efficient enough for real-time preview rendering.

Batman Element Integration

Batman-style elements integrate into the base body mesh through parametric asset placement that:

- Aligns cowl geometry with your skull shape

- Positions shoulder armor according to your clavicle width

- Scales chest plating to match your torso proportions

The system analyzes your facial structure to computationally determine optimal cowl fit by measuring:

| Measurement | Purpose |
|---|---|
| Head circumference | Overall cowl sizing |
| Ear position | Cowl alignment |
| Chin projection | Lower cowl fit |

This ensures geometric compatibility between the iconic pointed-ear silhouette and your features. Select from cowl variants including:

- Classic 1989 sculptural design

- Tactical armored 2005 version

- Streamlined fabric-paneled 2022 version

Each automatically fits to your reconstructed head geometry.

Cape Physics System

The cape attachment system anatomically anchors the collar at the location of your C7 vertebra (the prominent bone at the base of your neck) and simulates fabric flow across your shoulder blades using physics-based cloth dynamics.

The platform calculates cape length proportional to your height, typically extending to mid-calf for dramatic visual impact while keeping mobility for action poses. Configure cape stiffness parameters to control whether fabric appears as:

- Heavy military-grade material

- Lightweight tactical weave

Preset options match specific Batman film aesthetics, from Burton's gothic leather to Nolan's memory cloth concept.

Anatomical Armor Placement

Armor plating follows your body's musculature through anatomically-informed placement algorithms:

- Chest symbol sits at the sternum's center, scaled to take up 12-15% of torso width

- Gauntlet fins extend from your forearm's ulnar side

- Utility belt segments wrap around your natural waistline at the iliac crest

Each armor component is scaled using body measurements derived from the initial photo. From facial proportions, the system predicts body dimensions including:

- Height

- Shoulder width

- Torso length

It then applies industry-standard anthropometric ratios to generate properly sized Batman gear.
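As a rough illustration of this kind of proportional scaling, the sketch below sizes the chest symbol as a fraction of torso width. The function name, the clamping behaviour, and the 400 mm example torso are assumptions made for illustration; only the 12-15% range comes from the text.

```python
def chest_symbol_width(torso_width_mm: float, fraction: float = 0.135) -> float:
    """Return a chest-symbol width in millimetres.

    `fraction` is clamped to the 12-15% range quoted in the text.
    """
    if torso_width_mm <= 0:
        raise ValueError("torso width must be positive")
    fraction = min(max(fraction, 0.12), 0.15)
    return torso_width_mm * fraction


# Hypothetical 400 mm torso: the symbol ends up roughly 48 to 60 mm wide.
low = chest_symbol_width(400.0, 0.12)
high = chest_symbol_width(400.0, 0.15)
```

Clamping keeps an out-of-range request inside the documented band instead of failing, which is one reasonable choice for an automated pipeline.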

Topology Optimization

Retopology rebuilds the polygon flow of the high-resolution sculpt into an animation-ready mesh, with clean edge flow aligned to the anatomical structure of facial muscle groups and joint deformation zones.

The platform reduces polygon count from the multi-million-polygon sculpting phase to 15,000-25,000 faces for the final game-ready character, concentrating geometry density where animation deformation is most visible:

- Eyes

- Mouth

- Jaw

You get quad-dominant topology with edge loops circling eyes and lips, allowing natural facial expressions when the character speaks or reacts beneath the cowl's lower face opening.

UV Mapping Process

UV unwrapping flattens the 3D model surface into a 2D map by cutting seam lines along geometry edges where texture discontinuities are least visible. The system places UV seams along:

- The cowl's back centerline

- Under the cape's collar

- Along armor panel borders

Your facial UV layout takes up 40-50% of the texture atlas, providing maximum resolution for skin detail, while costume elements share remaining UV space with efficient packing that cuts down wasted pixels. The platform generates UV coordinates automatically, applying relaxation algorithms that reduce distortion and keep consistent texel density across all surfaces.
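To make the atlas share concrete, the following sketch estimates how many texels the face receives and the resulting texel density. The 4096-pixel atlas size and the 600 cm² facial surface area are illustrative assumptions, not values stated for the platform.

```python
import math


def face_texel_budget(atlas_px: int, face_share: float) -> int:
    """Number of atlas texels available to the face UV island."""
    if not 0.0 < face_share <= 1.0:
        raise ValueError("face_share must be in (0, 1]")
    return int(atlas_px * atlas_px * face_share)


def texel_density(texels: int, surface_area_cm2: float) -> float:
    """Texels per centimetre along one axis, assuming uniform density."""
    if surface_area_cm2 <= 0:
        raise ValueError("surface area must be positive")
    return math.sqrt(texels / surface_area_cm2)


# Assumed 4096 x 4096 atlas with the face occupying 45% of it.
budget = face_texel_budget(4096, 0.45)
density = texel_density(budget, 600.0)  # assumed 600 cm^2 of facial surface
```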

PBR Material Application

PBR texturing applies realistic materials through physically-based rendering workflows that simulate how light interacts with different surface types:

| Component | Material Properties |
|---|---|
| Cowl | Base color with dark gray variations, roughness map, normal map with leather grain |
| Facial skin | Subsurface scattering (1.5-2mm depth) |
| Metal components | Metalness values 0.9-1.0, anisotropic roughness |

Your facial skin is rendered with subsurface scattering parameters calibrated for human dermal translucency, producing the soft glow that distinguishes living tissue from painted surfaces.
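One way to hold such per-component settings is a small validated record, sketched below. The class and field names are hypothetical, and the roughness numbers are assumed placeholders; only the metalness range for metal parts and the 1.5-2 mm scattering depth come from the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PBRMaterial:
    name: str
    metalness: float            # 0.0 = dielectric, 1.0 = metal
    roughness: float            # 0.0 = mirror-smooth, 1.0 = fully rough
    sss_depth_mm: float = 0.0   # subsurface scattering depth; 0 disables it

    def __post_init__(self) -> None:
        # Reject values outside the normalized PBR ranges.
        for label, value in (("metalness", self.metalness),
                             ("roughness", self.roughness)):
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{label} must be in [0, 1], got {value}")
        if self.sss_depth_mm < 0.0:
            raise ValueError("sss_depth_mm must be non-negative")


# Roughness values here are assumptions chosen only for illustration.
MATERIALS = [
    PBRMaterial("cowl", metalness=0.0, roughness=0.6),
    PBRMaterial("facial_skin", metalness=0.0, roughness=0.5, sss_depth_mm=1.75),
    PBRMaterial("metal_components", metalness=0.95, roughness=0.3),
]
```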

Cape Material Configuration

The cape material combines fabric base color textures with normal maps encoding weave patterns at 2048×2048 pixel resolution. Configure whether the cape appears as:

- Heavy ballistic cloth with prominent cross-hatch weave

- Smooth memory fabric with barely-visible fiber structure

Roughness values range from:

- 0.6 for well-worn tactical capes showing surface abrasion

- 0.3 for freshly-manufactured materials with protective coating intact

The platform applies ambient occlusion baking that darkens recessed areas where cape fabric bunches against shoulder armor, adding depth perception that grounds the character in three-dimensional space.

Armor Surface Texturing

Armor sections get layered material complexity through multi-channel texture maps. Carbon fiber chest plates use:

- Base metallic value of 0.0 (non-metallic)

- Roughness maps at 0.4-0.5

- Normal maps encoding directional fiber weave pattern

Carbon fiber appears darker when viewed perpendicular to the surface and brighter at grazing angles, a behavior the platform replicates through anisotropic shading calculations.
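Grazing-angle brightening of this kind is commonly modeled with Schlick's Fresnel approximation. The sketch below shows that formula; whether the platform uses it is not stated, and the 0.04 normal-incidence reflectance is a typical default for non-metals rather than a documented setting.

```python
def schlick_fresnel(cos_theta: float, f0: float = 0.04) -> float:
    """Schlick's approximation of Fresnel reflectance.

    cos_theta: cosine of the angle between view direction and surface normal.
    f0: reflectance at normal incidence (0.04 is a common non-metal default).
    """
    cos_theta = min(max(cos_theta, 0.0), 1.0)
    return f0 + (1.0 - f0) * (1.0 - cos_theta) ** 5


head_on = schlick_fresnel(1.0)   # viewed perpendicular to the surface
grazing = schlick_fresnel(0.1)   # viewed at a shallow angle
```

With these inputs the grazing-angle reflectance is roughly fifteen times the head-on value, which matches the darker-at-perpendicular, brighter-at-grazing behavior described.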

Leather Component Detail

Leather components, including gloves and boots, get procedural texture generation that adds surface imperfections distinguishing new from battle-worn appearances. Select wear levels from:

- Pristine (minimal surface scratches, uniform color)

- Heavily-used (scuff marks concentrated at stress points, color variation from repeated flexing)

The system places wear patterns anatomically:

- Knuckles show more abrasion than palm backs

- Boot toes exhibit more scuffing than heel sides

Skeletal Rigging

Rigging creates a digital skeleton for posing by placing joint hierarchies that mirror human skeletal structure. The platform generates:

- Spine chain from pelvis to skull with seven vertebral joints

- Arm chains from clavicle through shoulder, elbow, and wrist to fingertips

- Leg chains from hip through knee and ankle to toe bases

You get a fully-weighted character where mesh vertices bind to nearby skeleton joints with influence falloff. The rig includes facial bones controlling:

- Eyebrow position

- Eyelid closure

- Jaw rotation

- Lip shapes for phoneme formation
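Binding with influence falloff can be sketched as distance-based weights normalized per vertex. The quadratic falloff, the 0.25-unit radius, the four-influence cap, and the joint positions below are all assumptions for illustration, not the platform's actual weighting scheme.

```python
import math


def skin_weights(vertex, joints, radius=0.25, max_influences=4):
    """Distance-based skin weights for one vertex.

    vertex: (x, y, z) position.
    joints: dict mapping joint name -> (x, y, z) position.
    radius: falloff distance; joints at or beyond it get zero weight.
    Returns {joint_name: weight} with weights summing to 1.
    """
    raw = {}
    for name, pos in joints.items():
        d = math.dist(vertex, pos)
        if d < radius:
            raw[name] = (1.0 - d / radius) ** 2  # smooth quadratic falloff
    if not raw:
        # Fall back to the nearest joint so the vertex is never unbound.
        nearest = min(joints, key=lambda n: math.dist(vertex, joints[n]))
        return {nearest: 1.0}
    top = sorted(raw.items(), key=lambda kv: kv[1], reverse=True)[:max_influences]
    total = sum(w for _, w in top)
    return {name: w / total for name, w in top}


# Hypothetical joints along the x axis; the vertex sits close to the elbow.
joints = {"elbow": (0.0, 0.0, 0.0), "wrist": (0.25, 0.0, 0.0),
          "shoulder": (-0.3, 0.0, 0.0)}
weights = skin_weights((0.05, 0.0, 0.0), joints)
```

Here the shoulder lies outside the falloff radius, so only the elbow and wrist influence the vertex, with the elbow dominating.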

Dynamic Cape Rigging

Cape rigging uses a combination of joint chains and dynamic simulation. The system places 12-15 joints down the cape's length from collar to hem, allowing manual posing for static hero shots.

Enable cloth simulation for animated sequences where the cape dynamically reacts to forces:

- Gravity pull

- Air resistance

- Collision detection

Wind force parameters add environmental effects:

- Gentle breeze creates subtle cape rippling

- High wind values produce dramatic billowing

Pose Constraint Systems

Constraint systems prevent anatomically-impossible poses by limiting joint rotation ranges:

| Joint | Range |
|---|---|
| Elbow | 0-145 degrees (flexion only, in the anatomically correct direction) |
| Knee | Natural flexion ranges |
| Spine | 30° forward, 20° extension, 25° lateral per segment |

Pose the character through inverse kinematics (IK), which solves for joint angles automatically when you position hands or feet.
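A minimal sketch of how a joint limit and a two-bone IK solve can fit together, using the law of cosines on the shoulder-elbow-wrist triangle. The bone lengths and function names are hypothetical; only the 0-145 degree elbow range comes from the table above.

```python
import math

JOINT_LIMITS_DEG = {"elbow": (0.0, 145.0)}  # range taken from the table above


def clamp_joint(joint: str, angle_deg: float) -> float:
    """Clamp an angle to the allowed range for the given joint."""
    lo, hi = JOINT_LIMITS_DEG[joint]
    return min(max(angle_deg, lo), hi)


def two_bone_elbow_flexion(upper_len: float, fore_len: float,
                           target_dist: float) -> float:
    """Elbow flexion (degrees) placing the wrist `target_dist` from the shoulder.

    0 degrees means a fully straight arm.
    """
    # Keep the target reachable: between fully folded and fully extended.
    d = min(max(target_dist, abs(upper_len - fore_len)), upper_len + fore_len)
    cos_interior = (upper_len**2 + fore_len**2 - d**2) / (2 * upper_len * fore_len)
    interior = math.degrees(math.acos(min(max(cos_interior, -1.0), 1.0)))
    return clamp_joint("elbow", 180.0 - interior)


# Hypothetical arm: 0.30 m upper arm, 0.25 m forearm.
straight = two_bone_elbow_flexion(0.30, 0.25, 0.55)  # target at full reach
bent = two_bone_elbow_flexion(0.30, 0.25, 0.39)      # roughly a right angle
folded = two_bone_elbow_flexion(0.30, 0.25, 0.0)     # limited by the constraint
```

When the limit is hit, as in the last call, the wrist stops short of the target instead of bending the elbow into an impossible pose.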

Ray-Traced Rendering

Rendering produces the final cinematic image through ray-traced lighting calculations that simulate photon behavior in physical environments. The platform configures a standard three-point lighting setup by default:

- Key light at 45 degrees above and to the side

- Fill light opposite the key softens shadows

- Rim light behind the character generates luminous separation

Adjust light color temperature from:

- Warm 3200K tungsten tones (gritty urban atmosphere)

- Cool 6500K daylight (outdoor rooftop settings)

Shadow Quality Control

Shadow quality settings control ray-tracing sample counts:

| Sample Count | Render Time | Quality |
|---|---|---|
| 16-32 per pixel | 3-5 minutes per frame | No visible stochastic noise |
| 4-8 per pixel | Under 60 seconds | Slight grain in dark areas |

The platform applies denoising algorithms that analyze the rendered image and intelligently smooth noise patterns while keeping texture detail. You get real-time viewport preview at 512×512 resolution updating at 15-20 frames per second, then trigger final high-resolution rendering at 4096×4096 pixels for print-quality output.
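The sample-count trade-off follows from a general property of Monte Carlo rendering: error falls off as one over the square root of the sample count. The sketch below applies that rule to the sample counts in the table; it is a statistical model, not a measurement of the platform's renderer.

```python
import math


def relative_noise(samples_per_pixel: int, reference_spp: int = 4) -> float:
    """Expected noise level relative to `reference_spp`.

    Monte Carlo error scales as 1 / sqrt(N), so quadrupling the sample
    count halves the noise.
    """
    if samples_per_pixel <= 0 or reference_spp <= 0:
        raise ValueError("sample counts must be positive")
    return math.sqrt(reference_spp / samples_per_pixel)


for spp in (4, 8, 16, 32):
    print(f"{spp:>2} spp -> {relative_noise(spp):.2f}x the noise of 4 spp")
```

Under this model, going from 4 to 16 samples halves the noise while costing four times the rays, which is why a denoiser is useful for cleaning up the remainder.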

Atmospheric Effects

Atmospheric effects, including volumetric fog, add environmental context. Enable fog with density controls:

- Light fog (0.01-0.03): Subtle atmospheric haze conveying distance and depth

- Heavy fog (0.1+): High-contrast noir aesthetics

Fog interacts with lighting through scattering calculations, making light beams visible as god rays. Particle systems generate environmental effects:

- Rain droplets hitting the cape surface

- Steam rising from Gotham's sewer grates
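A common way to model uniform fog is exponential (Beer-Lambert) attenuation, sketched below. Treating the density values as per-unit-distance extinction coefficients and using a 20-unit viewing distance are assumptions; the platform's actual fog model is not documented here.

```python
import math


def fog_transmittance(density: float, distance: float) -> float:
    """Fraction of light surviving `distance` units of uniform fog."""
    if density < 0 or distance < 0:
        raise ValueError("density and distance must be non-negative")
    return math.exp(-density * distance)


def apply_fog(surface_rgb, fog_rgb, density, distance):
    """Blend a surface colour toward the fog colour based on transmittance."""
    t = fog_transmittance(density, distance)
    return tuple(t * s + (1.0 - t) * f for s, f in zip(surface_rgb, fog_rgb))


light = fog_transmittance(0.02, 20.0)  # light fog, within the 0.01-0.03 range
heavy = fog_transmittance(0.10, 20.0)  # heavy fog threshold
```

At this distance light fog lets about two thirds of the light through, while heavy fog passes under 15%, which is what produces the high-contrast look.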

Color Grading Options

Post-processing applies color grading that shifts the rendered image's mood through hue and saturation adjustments. Select from preset looks:

- "Dark Knight": Desaturated with blue-gray color cast emphasizing shadows

- "Gothic": High contrast with crushed blacks and amber highlights

- "Animated Series": Saturated primaries with cell-shaded edge detection

The platform implements color grading with lookup tables (LUTs), which map each input color value to a graded output value through an indexed lookup.
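The indexed-lookup idea can be sketched with a one-dimensional, per-channel LUT. Production grading usually relies on 3D LUTs, so this is a simplification, and the contrast curve and function names are assumptions chosen only to show the mechanism.

```python
def build_contrast_lut(size: int = 256, contrast: float = 1.3) -> list[int]:
    """Precompute an 8-bit 1D LUT that increases contrast around mid-grey."""
    lut = []
    for i in range(size):
        x = i / (size - 1)
        y = (x - 0.5) * contrast + 0.5
        lut.append(round(min(max(y, 0.0), 1.0) * 255))
    return lut


def apply_lut(pixel: tuple[int, int, int], lut: list[int]) -> tuple[int, int, int]:
    """Grade one RGB pixel by indexing each channel into the LUT."""
    r, g, b = pixel
    return (lut[r], lut[g], lut[b])


lut = build_contrast_lut()
graded = apply_lut((20, 64, 128), lut)
```

Because the curve is computed once up front, grading each pixel costs only three array lookups. With this curve, dark inputs such as 20 map to 0, which is the crushed-blacks effect.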

Complete Workflow Automation

Threedium's Batman-style character generator handles this entire workflow through the tool above this article:

- Upload one frontal photo

- Choose your preferred cowl and armor style

- Click generate

- Receive production-ready output in FBX or GLB format within five minutes

The platform replaces the time-intensive traditional scan-to-character workflow, which requires:

- Specialized photogrammetry equipment

- Weeks of manual sculpting

- Expert knowledge of retopology and UV mapping

Our Julian NXT technology automates vertex placement, texture baking, and rig generation, which previously had to be done by hand, while keeping the quality standards professional character artists achieve through manual workflows. This makes Batman-style 3D character creation accessible to creators at any skill level.

Which Cowl, Cape, Armor, Symbol, and Stance Options Make a Batman-Style Version of Yourself Look Strong in 3D?

Cowl, cape, armor, symbol, and stance options that make a Batman-style version of yourself look strong in 3D are:

- Pointed ears extending 60-80 millimeters vertically

- Ankle-length capes at 85-95% character height

- Continuous chest plates spanning 300-400 square centimeters

- Bat-symbols with 2-4 millimeter positive relief positioned at upper sternum

- Wide stances at 1.2-1.5 times shoulder width with forward weight distribution

Pointed ears extending 60-80 millimeters vertically above the character's skull, inspired by comic book artist Jim Lee's iconic 1990s Batman designs for DC Comics, create a demonic silhouette that enhances threat perception through vertical visual dominance and imposing height.

Shorter tactical ears measuring 30-45 millimeters in vertical extension, inspired by comic book creator Frank Miller's landmark graphic novel 'The Dark Knight Returns' (published by DC Comics in 1986), visually communicate grounded pragmatism and combat readiness through their functional, minimalist design.

The cowl's jawline framing deliberately exposes the character's natural mandible structure (lower jaw bone) to maintain visible facial determination and emotional expressiveness, rather than concealing the bone geometry beneath synthetic protective materials.

A pronounced brow ridge extending 8-12 millimeters beyond the character's eye socket (orbital cavity) creates an intimidation effect through permanent scowling geometry, deliberately eliminating expressive facial range to maintain a constant threat display and aggressive appearance.

Full faceplates eliminate all facial visibility, transforming the character into a dehumanized enforcement mechanism by completely erasing individual identity markers and recognizable human facial features.

Normal map texturing applied to cowl surfaces simulates microscopic material variations including:

- Carbon fiber weaves

- Kevlar aramid threading

- Tactical polymer striations

This is done without adding polygons to the character mesh geometry, thereby maintaining compliance with the 106,000-polygon budget that Rocksteady Studios (British game developer) documented for Batman: Arkham character models during their 2015 technical presentations at the Game Developers Conference (GDC).

Cowl Variations and Facial Coverage

Exposed-jaw cowls maintain 40-60% facial visibility, allowing the character's natural bone structure to display emotional expressions while synthetic protective materials cover the upper face. This exposed-jaw design balances human relatability with armored intimidation, enabling viewers to read the character's determination through visible jaw tension (masseter muscle engagement) and mouth positioning.

Full-coverage cowls completely eliminate all organic facial elements, replacing them with sculpted synthetic expressions that remain permanently fixed, transforming the character into an unchanging symbolic icon rather than a recognizable person wearing protective equipment.

Ear geometry controls silhouette recognition and character identification at distances exceeding 50 meters (approximately 164 feet), where fine surface details become invisible to observers but the overall character shape profile remains readable and recognizable.

Bat-ear length ratios ranging between 1.5:1 and 2.5:1 (calculated as ear height divided by head height) encompass the complete design spectrum from subtle tactical bumps (realistic military aesthetic) to exaggerated demon horns (theatrical comic book aesthetic).

Wider ear spacing, positioning the ears 15-20 millimeters beyond the character's natural skull width, increases horizontal profile dominance, making the character's head appear larger and more threatening when viewed from three-quarter angles (45-degree perspective).

Cape Physics and Attachment Geometry

Cape length sets the trade-off between dramatic visual impact and character mobility in the 3D character model: longer capes increase theatrical presence while reducing functional movement capability.

| Cape Type | Length Ratio | Visual Impact | Mobility |
|---|---|---|---|
| Ankle-length | 85-95% character height | Maximum silhouette expansion (200-300% size increase) | Reduced agility |
| Tactical | 50-60% character height | Moderate impact | Enhanced mobility |

Ankle-length capes extending 85-95% of the total character height (measured vertically from shoulder attachment point to ground plane reference surface) maximize silhouette expansion during character movement, creating visual mass that multiplies the character's apparent size by 200-300% when cloth physics simulation spreads the fabric laterally across the horizontal plane.

Tactical capes terminating at knee height (50-60% of total character height) prioritize agility over theatrical visual impact, reducing cloth collision detection complexity in physics simulations and suggesting a combat-first design philosophy that emphasizes functional performance.

Shoulder attachment width broadens the character's physique when set to 110-120% of the character's natural shoulder measurement (acromial width). This expansion creates an inverted-triangle silhouette (wide shoulders tapering to a narrow waist) that signals physical power through an enhanced shoulder-to-waist ratio.

Cape attachment points positioned 25-30 millimeters posterior to the character's shoulder anterior edge (front boundary) allow fabric to drape across the character's back without interfering with arm mobility or range of motion, maintaining clean silhouettes during forward-reaching animation poses.
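The length and attachment ratios above reduce to simple arithmetic, sketched here. The function name and the 180 cm height and 45 cm shoulder width are hypothetical example inputs; the ratios themselves come from the text.

```python
CAPE_LENGTH_RATIO = {"ankle": (0.85, 0.95), "tactical": (0.50, 0.60)}
ATTACHMENT_WIDTH_RATIO = (1.10, 1.20)  # relative to natural shoulder width


def cape_dimensions(height_cm: float, shoulder_width_cm: float,
                    style: str = "ankle"):
    """Return ((min_len, max_len), (min_width, max_width)) in centimetres."""
    lo, hi = CAPE_LENGTH_RATIO[style]
    w_lo, w_hi = ATTACHMENT_WIDTH_RATIO
    length = (height_cm * lo, height_cm * hi)
    width = (shoulder_width_cm * w_lo, shoulder_width_cm * w_hi)
    return length, width


# Hypothetical character: 180 cm tall with 45 cm shoulders, tactical cape.
length, width = cape_dimensions(180.0, 45.0, "tactical")
```

For this example the tactical cape comes out at roughly 90 to 108 cm long with an attachment span of about 49.5 to 54 cm.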

Material stiffness parameters control how the cape fabric responds to gravity, wind forces, collisions, and character movement in the 3D environment:

- Heavy wool material settings (configured with stiffness values between 0.7-0.9 on normalized 0-1 scales) produce slow, deliberate fabric wave motion that conveys material weight and gothic gravitas (dark, serious aesthetic atmosphere)

- Technical fabric parameters (configured with stiffness values of 0.3-0.5 on normalized scales) create sharp, responsive fold patterns that suggest advanced synthetic materials such as tactical nylon, ripstop fabrics, or engineered polymer textiles

Matte black surfaces with 5-10% specular reflection (mirror-like light bounce) dissolve the character's visual boundaries in low-light environments, making precise edge definition impossible for observers to perceive and significantly boosting stealth perception and tactical concealment.

Armor Plating Configuration

Armor panel size visually communicates the protection-versus-agility balance through visual segmentation patterns, with panel dimensions indicating the character's design priorities between defensive coverage and mobility flexibility.

Continuous chest plates spanning 300-400 square centimeters (approximately 46-62 square inches) of surface area suggest heavy ballistic protection against projectile threats, deliberately trading torso flexibility for enhanced defensive capability and impact resistance.

Segmented armor dividing the equivalent surface area into 8-12 articulated plates demonstrates tactical mobility prioritization, allowing the character's torso to bend and twist naturally during combat animations while maintaining protective coverage.

Panel overlap depth of 3-5 millimeters creates shadow-catching gaps that enhance three-dimensional depth perception and surface geometry reading under directional lighting conditions, improving the visual definition of armor layers.

Edge treatment shapes threat perception through geometry language, with edge sharpness and profile signaling the character's danger level and aggressive intent:

- Sharp 90-degree armor edges convey aggression and cutting danger through angular geometry, suggesting the character prioritizes offensive combat tactics and aggressive engagement over defensive posturing

- Beveled edges with 2-3 millimeter chamfers (angled edge cuts, typically 45 degrees) indicate sophisticated engineering and precision manufacturing, suggesting controlled violence and calculated tactical application rather than crude aggressive force

- Rounded edges with 5-8 millimeter radii suggest an organic design philosophy inspired by biological forms, softening mechanical harshness and aggressive appearance while maintaining full protective function and structural integrity

Carbon fiber texturing applied to armor surfaces, achieved through albedo maps (color/reflectivity textures) displaying 0.5-1.0 millimeter weave patterns and normal maps creating surface depth illusion without additional geometry, signals modern material science and advanced composite engineering.

Layered armor assemblies with visible under-plating create equipment depth and visual complexity, demonstrating that the character wears multiple integrated protective systems (outer shell, padding, ballistic layers) rather than simple single-layer costume elements.

Threedium's 3D reconstruction platform preserves these material distinctions when users upload reference images, automatically translating photographic surface details into normal-mapped geometry that maintains visual complexity and material definition without incurring additional polygon overhead or computational cost.

Bat-Symbol Placement and Relief

The bat-symbol requires precise sternum (breastbone) positioning to function effectively as a compositional anchor: a focal point that organizes the viewer's visual attention and centers the character's design composition.

Placement at the upper third of the character's sternum, approximately 80-100 millimeters below the sternal notch (jugular notch, the visible dip between collarbones), centers the emblem within the chest mass, creating natural eye-line attraction and balanced visual composition.

| Symbol Width (% of chest breadth) | Visual Effect | Aesthetic Style |
|---|---|---|
| 25-35% | Subtle integration | Realistic military/tactical |
| 40-50% | Bold graphic statement | Comic book/superhero |

Relief depth (the three-dimensional projection or recession of the symbol from the armor surface) determines symbol visibility across varying lighting conditions by controlling shadow casting and surface contrast:

- Positive relief of 2-4 millimeters (where the symbol projects outward from the armor surface) creates sufficient shadow-casting for visibility in low-contrast environments without appearing artificially stuck-on or poorly integrated into the armor design

- Deeper relief of 5-6 millimeters produces dramatic shadowing under harsh directional lighting conditions (strong single-source illumination), strongly emphasizing the symbol's three-dimensionality and sculptural presence on the armor surface

- Recessed symbols (negative relief, where the emblem is carved into the armor surface) reverse this shadow relationship, creating inverse shadows that read clearly under overhead lighting conditions but become nearly invisible in flat, diffuse illumination without directional light sources

Material contrast between the bat-symbol and surrounding armor defines thematic allegiance and character philosophy through reflectance relationships (how much light each surface reflects), visually communicating the character's organizational affiliation and operational approach:

- Matte black symbols on satin black armor (with reflectance difference of 15-25% between surface finishes) create sophisticated tonal separation visible primarily through specular highlights (bright light reflections), producing subtle contrast that suggests stealth and understated authority

- Metallic gold symbols on matte black backgrounds (with reflectance difference of 60-80%, representing maximum visual contrast) establish hierarchy and authority through dramatic color separation, signaling that the character operates with institutional backing, official sanction, or organizational resources rather than as an anonymous vigilante working outside established systems

Stance Geometry and Weight Distribution

Stance width (the horizontal distance between the character's feet) establishes stability perception through leg positioning, communicating physical readiness and affecting the visual center of gravity:

- Feet placed at 1.2 times the character's shoulder width create balanced athletic readiness, suggesting the character possesses explosive movement capability and can accelerate quickly in any direction from this stable foundation

- Wider stances at 1.5 times the character's shoulder width demonstrate immovable defensive positioning and grounded stability, deliberately trading lateral mobility and quick movement for rooted power and resistance to displacement

- Narrow stances measuring below 1.0 shoulder width ratio (feet closer together than shoulder distance) appear visually unstable, undermining strength communication and power projection unless deliberately paired with dynamic movement poses that suggest prioritization of speed and agility over raw power

Weight distribution controls perceived readiness and action potential through center-of-mass positioning, with weight placement indicating the character's preparedness to initiate movement and the likely direction of action:

- Forward weight shifts placing 60-70% of body mass over the character's front foot indicate aggressive intent and imminent forward action, suggesting the character is prepared to advance, strike, or engage immediately

- Centered weight distribution (50-50 split between front and rear foot) suggests calm readiness and tactical patience, indicating the character is prepared to respond in multiple directions without committing to immediate action

- Rear weight bias (placing more than 50% of body mass over the back foot) indicates defensive preparation or post-action recovery states, reducing forward threat projection and suggesting cautious engagement or tactical withdrawal
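Stance width and weight distribution can be expressed numerically, as in the sketch below. The function names, the one-dimensional treatment of the feet, and the example measurements are assumptions for illustration; the ratios and the forward, centered, and rear categories follow the text.

```python
def foot_positions(shoulder_width_cm: float, stance_ratio: float):
    """Lateral positions of the left and right foot, centred on the midline."""
    half = shoulder_width_cm * stance_ratio / 2.0
    return (-half, half)


def center_of_mass(rear_foot: float, front_foot: float,
                   front_share: float) -> float:
    """Ground position of the centre of mass along the rear-to-front axis."""
    if not 0.0 <= front_share <= 1.0:
        raise ValueError("front_share must be in [0, 1]")
    return rear_foot + front_share * (front_foot - rear_foot)


def classify_weight(front_share: float, tolerance: float = 0.01) -> str:
    """Label a pose as forward, centered, or rear weighted."""
    if abs(front_share - 0.5) <= tolerance:
        return "centered"
    return "forward" if front_share > 0.5 else "rear"


# Hypothetical 45 cm shoulders at the 1.2x athletic stance ratio.
left, right = foot_positions(45.0, 1.2)
# Feet 40 cm apart front to back, with 65% of the weight on the front foot.
com = center_of_mass(0.0, 40.0, 0.65)
```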

Arm positioning modifies perceived psychological state through limb geometry and body language, with arm placement communicating emotional conditions, threat levels, and the character's openness to engagement:

- Arms held 15-20 degrees away from the character's torso with fists clenched expand the character's silhouette laterally (increasing horizontal profile), while simultaneously signaling contained aggression and readiness to engage physically

- Arms crossed at chest height (with forearms positioned horizontally at 90-degree elbow angles) display closed body language and defensive psychological barriers, while conveying judgmental authority and evaluative superiority over the observed subject

- Arms hanging naturally with 5-10 degree elbow flexion (slight bend, avoiding rigid straightness) suggest relaxed confidence and secure self-assurance, allowing the character's armored physique to communicate power without requiring additional postural reinforcement or aggressive positioning

Shoulder elevation raising the character's shoulder line 10-15 millimeters above neutral anatomical position creates predatory tension and threat perception, mimicking mammalian threat displays observed in species like felines, canines, and primates during aggressive encounters.

Head tilt angling the character's skull 5-10 degrees downward while maintaining eye-line directed toward the viewer creates an imposing 'looking down' psychological effect and perceived height dominance, even when the character stands at equal physical height to the observer. This specific geometric combination (raised shoulders, lowered head position, and maintained direct eye contact) triggers instinctive threat recognition in viewers at a subconscious level, activating primal danger assessment responses evolved for predator detection.

Cape Draping and Environmental Context

Symmetrical cape draping with equal fabric distribution across both shoulders indicates calm control and composed psychological state, suggesting deliberate presentation and intentional staging rather than spontaneous arrival or recent action.

Asymmetrical cape arrangements, gathering 60-70% of cape mass over one shoulder, suggest recent movement and dynamic action history, implying the character just arrived at the scene or maintains readiness to move immediately, with the cape still settling from previous motion.

Wind-blown cape positioning with fabric streaming horizontally adds environmental context and situational information, indicating the character operates in exposed outdoor conditions with active air movement rather than controlled interior environments with still air.

Cloth simulation parameters control how believably the cape fabric responds to gravity, wind forces, collisions, and character movement in the 3D environment:

- Gravity multipliers ranging between 1.0-1.5 times standard Earth gravity (9.8 meters per second squared, the acceleration constant at Earth's surface) produce heavy, dramatic fabric motion with pronounced downward pull that emphasizes material weight and substantial physical presence

- Damping values of 0.3-0.5 (on normalized 0-1 scales) reduce oscillation amplitude and prevent unrealistic fabric vibration artifacts (excessive back-and-forth motion), while maintaining responsive movement that reacts naturally to forces and character animation

- Collision detection between cape fabric and armor geometry prevents mesh interpenetration (the visual error where surfaces incorrectly pass through each other), maintaining visual believability and physical plausibility when the character moves through animation sequences and dynamic poses
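To show how a gravity multiplier and a damping value interact, the sketch below settles a single cape particle hanging from a spring, the basic building block of mass-spring cloth solvers. Interpreting damping as the fraction of velocity lost per second, along with the spring stiffness, step size, and unit mass, is an assumption; a real cloth solver links many such particles and adds collision handling.

```python
def simulate_cape_particle(gravity_multiplier: float = 1.2, damping: float = 0.4,
                           stiffness: float = 40.0, steps: int = 8000,
                           dt: float = 0.005) -> float:
    """Settle one unit-mass particle hanging from a spring anchored at y = 0.

    damping is treated as the fraction of velocity lost per second.
    Returns the final vertical position (negative means below the anchor).
    """
    g = -9.8 * gravity_multiplier
    y, v = 0.0, 0.0
    retain = (1.0 - damping) ** dt      # velocity kept per time step
    for _ in range(steps):
        a = g - stiffness * y           # gravity plus spring pull
        v = (v + a * dt) * retain       # semi-implicit Euler with damping
        y += v * dt
    return y


rest = simulate_cape_particle()                          # settles near g / stiffness
heavier = simulate_cape_particle(gravity_multiplier=1.5)
```

A higher gravity multiplier makes the particle come to rest lower, while the damping term removes the oscillation that would otherwise persist.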

Users configure these design elements, including cowl ear geometry, cape attachment width, armor segmentation patterns, symbol relief depth, and stance width ratios, through Threedium's parameter controls and customization interface when generating their Batman-style 3D character from uploaded reference photos.

Threedium's Julian NXT technology (proprietary AI-powered 3D generation system) analyzes the user's facial structure and body proportions from uploaded photographs, automatically suggesting optimal cowl fit and armor scaling that matches the user's natural geometry while applying Batman-style aesthetic transformations and superhero design conventions.

The Threedium platform maintains a consistent form language (a unified visual vocabulary and set of design principles) across all character elements. The selected cowl design philosophy, whether tactical realism or theatrical stylization, carries through to cape material choices and armor edge treatments, producing a cohesive character design rather than a visually mismatched assembly of components.

Why Does Threedium Generate More Detailed Batman-Style 3D Characters From One Photo?

Threedium generates more detailed Batman-style 3D characters from one photo because its proprietary AI platform uses a hybrid NeRF-GAN architecture that combines Neural Radiance Fields (NeRFs) with Generative Adversarial Networks (GANs). The NeRF-GAN framework reconstructs complex 3D geometry from a single 2D photo while synthesizing high-quality textures and modeling view-dependent optical effects such as specular reflections. When you upload a front-facing photograph, Threedium's system decomposes facial geometry, body proportions, and costume elements through neural networks trained on a superhero reference dataset containing thousands of images, eliminating the multi-angle photography that traditional photogrammetry requires.

Neural Radiance Field Architecture

Neural Radiance Fields (NeRFs, introduced by Mildenhall et al., 2020) are a neural network architecture that learns continuous volumetric scene representations, enabling the synthesis of photorealistic novel views from a small number of input images. Given a single front-facing photograph, the NeRF component within Threedium's NeRF-GAN framework constructs a 3D spatial map by predicting RGB color values and volumetric density values at arbitrary 3D coordinates. The neural network models light-surface interaction by analyzing the following cues in your input image:

- Pixel intensity distribution

- Shadow gradient patterns

- Specular highlight locations

It then extrapolates illumination information across the full 360-degree viewing sphere. This volumetric reconstruction recovers the cowl ear curvature, eye socket depth, and shoulder armor 3D structure without requiring multi-view capture of these elements.

The NeRF model encodes the input photograph as a coordinate-based neural representation: a function that maps 3D spatial coordinates and a viewing direction to an RGB color value and a volumetric density, with spatial coordinates expressed as Cartesian xyz values and viewing directions as spherical theta-phi angles.
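
The coordinate-based mapping can be sketched as a small, untrained network. This is a toy illustration under stated assumptions, not Threedium's model: the class name `TinyRadianceField`, the layer sizes, and the random weights are hypothetical, and the positional encoding is a simplified form of the sinusoidal encoding used in the NeRF literature. What it shows is the shape of the function: density depends on position only, while color depends on position and viewing direction.

```python
import numpy as np


def positional_encoding(x, num_freqs):
    """Map each coordinate to [sin(2^k x), cos(2^k x)] for k < num_freqs."""
    freqs = 2.0 ** np.arange(num_freqs)
    angles = x[..., None] * freqs                      # (..., dims, num_freqs)
    enc = np.concatenate([np.sin(angles), np.cos(angles)], axis=-1)
    return enc.reshape(*x.shape[:-1], -1)


class TinyRadianceField:
    """Untrained toy network: (xyz, theta-phi) -> (rgb, density)."""

    def __init__(self, hidden=32, pos_freqs=4, dir_freqs=2, seed=0):
        rng = np.random.default_rng(seed)
        self.pos_freqs, self.dir_freqs = pos_freqs, dir_freqs
        pos_dim = 3 * 2 * pos_freqs                    # xyz, sin and cos
        dir_dim = 2 * 2 * dir_freqs                    # theta-phi, sin and cos
        self.w1 = rng.normal(0.0, 0.5, (pos_dim, hidden))
        self.w_sigma = rng.normal(0.0, 0.5, (hidden, 1))
        self.w_rgb = rng.normal(0.0, 0.5, (hidden + dir_dim, 3))

    def __call__(self, xyz, theta_phi):
        h = np.maximum(positional_encoding(xyz, self.pos_freqs) @ self.w1, 0.0)
        # Density is non-negative and uses the position features only.
        sigma = np.maximum(h @ self.w_sigma, 0.0)[..., 0]
        d = positional_encoding(theta_phi, self.dir_freqs)
        logits = np.concatenate([h, d], axis=-1) @ self.w_rgb
        rgb = 1.0 / (1.0 + np.exp(-logits))            # colours in [0, 1]
        return rgb, sigma
```

Querying the same point from two directions returns the same density but different colors, which is how a radiance field represents view-dependent appearance.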

This approach works in your favor because the neural network interpolates geometric information between known pixel positions in your source photograph and, based on learned 3D priors, probabilistically infers occluded surface geometry such as:

- Cape posterior surfaces

- Gauntlet interior surfaces

Threedium's NeRF-GAN framework accomplishes this inference by learning spatial relationship patterns from a training dataset comprising thousands of Batman costume photographs captured from multiple viewing angles, enabling the learned model to predict fabric draping physics, armor segment connectivity, and bat symbol curvature based on front-facing visual features in your single uploaded image.

| Component | Sample Points | Function |
|---|---|---|
| NeRF Volume Rendering | 64-128 points per ray | Compute local color and opacity values |
| Camera Ray Trajectories | Multiple sample points | Pass through input image pixels |
| Volume Integration | Point samples | Produce photorealistic pixel values |

Threedium's Neural Radiance Field implementation samples 64-128 points along each camera ray that passes through an input image pixel, querying the neural network at each sample point to compute local color and opacity values. When the system integrates these point samples through volume rendering, the pipeline produces photorealistic pixel values that account for:

- Surface occlusion

- Material transparency

- Light attenuation through semi-transparent materials like cape fabric
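
The integration of point samples along a ray can be sketched with the standard volume rendering quadrature from the NeRF literature. The function name `render_ray` and the sample values in the usage note are illustrative assumptions; how Threedium implements this step is not described here.

```python
import numpy as np


def render_ray(rgb, sigma, t_vals):
    """Composite samples along one ray into a single pixel colour.

    rgb:    (N, 3) colour predicted at each sample
    sigma:  (N,)   volumetric density predicted at each sample
    t_vals: (N,)   increasing distances of the samples along the ray
    """
    # Length of each segment; the last segment is treated as open-ended.
    deltas = np.diff(t_vals, append=t_vals[-1] + 1e10)
    alpha = 1.0 - np.exp(-sigma * deltas)              # opacity of each segment
    # Transmittance: fraction of light reaching sample i without being blocked.
    trans = np.cumprod(np.concatenate([[1.0], 1.0 - alpha[:-1]]))
    weights = trans * alpha
    return weights @ rgb, weights
```

With 64 samples of high density, the first few samples absorb nearly all the weight, which models surface occlusion. With low density, weight spreads along the ray, which models attenuation through semi-transparent material such as thin cape fabric.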

You upload a photograph showing only frontal costume details, yet the neural network generates geometrically consistent posterior surfaces because it learned 3D shape patterns from training datasets containing 360-degree turntable sequences of complete Batman costumes (in a turntable sequence the subject rotates on a platform while the camera remains stationary, capturing all angles).

Generative Adversarial Network Synthesis

Generative Adversarial Networks are a machine learning architecture in which a generator network and a discriminator network compete to produce realistic synthetic outputs. Threedium uses this adversarial training process to synthesize the costume details that a single photograph cannot show. The generator network synthesizes texture data for occluded surface regions, such as:

- Utility belt side panels (the utility belt is Batman's equipment-carrying waist accessory, typically yellow or gold)

- Cape interior lining

- Elbow joint articulation points

The discriminator network evaluates generator outputs against authentic costume references from the training dataset and rejects outputs that diverge from the visual patterns of real Batman costumes. You receive a complete 3D character model because the adversarial process iterates thousands of times per second during the generation phase, progressively refining the synthetic detail until the outputs statistically align with the distribution of real photographs.
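
The competition between the two networks is usually expressed as a pair of loss functions. The sketch below uses the common non-saturating GAN formulation with binary cross-entropy. It is a generic illustration, not Threedium's training code, and the function names are hypothetical. The inputs are discriminator scores in the range 0 to 1, where 1 means "judged authentic".

```python
import numpy as np


def bce(prob, target):
    """Binary cross-entropy, clipped for numerical safety."""
    p = np.clip(prob, 1e-7, 1.0 - 1e-7)
    return float(np.mean(-(target * np.log(p) + (1.0 - target) * np.log(1.0 - p))))


def discriminator_loss(d_real, d_fake):
    """Low when real patches score near 1 and generated patches near 0."""
    return bce(d_real, np.ones_like(d_real)) + bce(d_fake, np.zeros_like(d_fake))


def generator_loss(d_fake):
    """Non-saturating loss: low when generated patches are scored as real."""
    return bce(d_fake, np.ones_like(d_fake))
```

A generated texture patch that the discriminator scores at 0.9 gives the generator a much smaller loss than one scored at 0.1, so gradient updates push the generator toward outputs the discriminator cannot tell apart from real references.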

The GAN component specializes in texture synthesis, generating fabric weave patterns applied to cape material, metallic surface patterns applied to armor plates, and leather grain textures applied to glove surfaces derived from observed material properties extracted from illuminated photograph regions.

Upload a frontal costume image showing only the anterior view, and the system still synthesizes realistic posterior textures for the cape backside by drawing on learned probability distributions of how fabric behaves under diverse lighting conditions. The discriminator network enforces physical plausibility, because any deviation from realism is rejected during the adversarial training loop that maintains photorealistic output standards:

- Synthetic textures must exhibit realistic physical properties

- Cape fabric must display accurate draping physics

- Armor surfaces must show consistent weathering patterns

- Bat symbol edges must display appropriate edge smoothing

Threedium's Generative Adversarial Network implementation employs a progressive growing technique that generates textures at multiple resolution levels, starting at 256x256 pixels and ending at 2048x2048 pixels:

| Resolution Level | Resolution | Purpose |
|---|---|---|
| Coarse | 256x256 pixels | Macro-scale color distributions |
| Medium | 1024x1024 pixels | Mid-level details |
| Fine | 2048x2048 pixels | Micro-scale details including stitching patterns |

This multi-scale generation approach works in your favor because the generator network learns both global color schemes for the entire costume and local material variations for individual regions, yielding textures that stay visually consistent across viewing distances.
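
A resolution schedule for this kind of coarse-to-fine generation can be sketched as follows. The doubling step is an assumption borrowed from the usual progressive-growing recipe. The text names only the 256, 1024, and 2048 pixel levels, so the intermediate 512 stage and the function name `progressive_resolutions` are hypothetical.

```python
def progressive_resolutions(start=256, end=2048):
    """Return the texture resolution at each growth stage, doubling each time."""
    if start <= 0 or end < start:
        raise ValueError("expected 0 < start <= end")
    stages = []
    res = start
    while res <= end:
        stages.append(res)
        res *= 2
    return stages


def texel_ratio(coarse, fine):
    """How many fine texels cover the area of one coarse texel."""
    return (fine // coarse) ** 2
```

`progressive_resolutions()` returns `[256, 512, 1024, 2048]`, and `texel_ratio(256, 2048)` returns `64`: the finest level has 64 texels for every texel at the coarsest level, which is the headroom available for details such as stitching.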

Hybrid Geometric and Stylistic Processing

Threedium's NeRF-GAN framework combines the geometric reconstruction of the NeRF with the detail synthesis of the GAN, producing a hybrid AI architecture that solves two computational challenges that single-method approaches cannot solve simultaneously:

- Accurate 3D geometry reconstruction

- High-fidelity texture generation

The NeRF component provides geometric accuracy, preserving anthropometric detail by maintaining:

- Precise facial measurements

- Accurate body proportions

- Correct pose alignment extracted from the input photograph

The GAN component simultaneously provides artistic stylization, converting realistic human anatomy into stylized output that includes:

- Exaggerated musculature

- Dramatic shadow rendering

- Iconic costume elements that define the Batman aesthetic

The hybrid system analyzes the input image through two parallel neural pathways. The first reconstructs spatial relationships, capturing measurable geometric properties. The second applies learned aesthetic conventions as a stylistic transformation. The system then merges both outputs into a unified 3D mesh that maintains anatomical correctness and superhero visual language.

This stylistic reconstruction goes beyond simple geometry extraction: it integrates Batman-specific artistic conventions into the core generation process rather than applying them as a post-processing filter. Threedium's NeRF-GAN system learns Batman design conventions from sources comprising:

- Comic book artwork

- Film costume designs

- Video game character models

From these sources it derives statistical design patterns that define how the cowl tapers toward the jaw line, how the cape gathers at shoulder attachment points, and how armor plates overlap to suggest protective functionality.

Upload a casual smartphone photograph taken under ordinary lighting, and Threedium's NeRF-GAN platform automatically transforms your facial features into output with the dramatic contrast lighting and bold silhouette shapes characteristic of the Batman design aesthetic, because the GAN component has learned these design principles from training data containing thousands of professional Batman designs.

| Region Type | Geometric Fidelity | Stylistic Transformation | Purpose |
|---|---|---|---|
| Facial regions | Higher geometric fidelity | Lower transformation | Preserve recognizable user features |
| Costume regions | Lower geometric fidelity | Stronger stylistic transformation | Match Batman iconic design aesthetic |

Threedium's integrated NeRF-GAN architecture employs attention mechanisms that balance the contribution of NeRF geometric data and GAN stylistic data according to local surface properties. Your facial identity is preserved in the final 3D character, while the Batman costume it wears exhibits dramatic proportions and material qualities that match canonical Batman design and professional costume fabrication.
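
The region-dependent balance between geometric and stylistic data can be sketched as a soft per-vertex gate. A fixed sigmoid gate stands in here for a learned attention mechanism, so this is an illustration of the blending idea only. The function name `blend_pathways`, the `face_score` input, and the `sharpness` value are hypothetical.

```python
import numpy as np


def blend_pathways(geometry_feat, style_feat, face_score, sharpness=6.0):
    """Blend two per-vertex feature arrays with a soft gate.

    geometry_feat, style_feat: (N, D) arrays from the two pathways
    face_score: (N,) values in [0, 1]; 1 means the vertex is clearly on the face
    Returns the blended (N, D) features and the (N,) geometry weights.
    """
    # Sigmoid gate: close to 1 on the face, close to 0 on the costume.
    gate = 1.0 / (1.0 + np.exp(-sharpness * (face_score - 0.5)))
    blended = gate[:, None] * geometry_feat + (1.0 - gate[:, None]) * style_feat
    return blended, gate
```

A vertex with a face score of 1.0 takes about 95% of its features from the geometry pathway, while a costume vertex with a score of 0.0 takes about 95% from the style pathway, mirroring the split in the table above.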

Single-Image Inference Capabilities

Traditional photogrammetry requires 50-200 photographs captured from systematically distributed angles around the full 360 degrees of the subject. It then analyzes these images with Structure-from-Motion (SfM) algorithms, a computer vision technique for 3D reconstruction from 2D image sequences, which triangulate corresponding pixel positions across multiple views to reconstruct 3D coordinates. The process is both time-intensive and labor-intensive.

Threedium's platform bypasses this workflow because the NeRF-GAN system infers spatial geometric information equivalent to the parallax data that traditional photogrammetry can only measure from multiple camera positions, eliminating the need for multi-angle capture.

Threedium's NeRF-GAN platform accomplishes this inference by learning probabilistic anatomical models and probabilistic costume construction models from training datasets, enabling neural networks to predict the most probable 3D surface configurations applied to occluded surfaces not visible in single input photographs.

When you capture a frontal self-portrait, the AI system infers the following, with predictions constrained by biomechanical principles and guided by costume design conventions learned during the training phase:

- Posterior skull curvature

- Cape draping pattern down your back

- Armor strap connection points crossing the shoulder region

This single-image reconstruction capability derives from the NeRF component's ability to learn view synthesis functions (view synthesis is the computer graphics technique of generating novel camera perspectives of a scene) that generalize across multiple object categories. This removes the per-scene optimization that traditional NeRF implementations require and enables zero-shot reconstruction.

The system relies on transfer learning (a machine learning method in which knowledge gained from one task is applied to a different but related task): the neural network transfers spatial reasoning patterns learned from thousands of training examples to your specific input image, improving reconstruction quality. The training examples include:

- Human figure datasets

- Superhero costume datasets

To enable view-independent reconstruction, Threedium's NeRF-GAN neural network encodes knowledge that:

- Human shoulders possess predictable 3D geometry invariant to viewing angle changes

- Capes obey gravitational draping physics invariant to camera position

- Armor plates maintain consistent thickness measurements invariant to perspective foreshortening

| Method | Processing Time | Speed Improvement |
|---|---|---|
| Traditional Photogrammetry | 2-4 hours | Baseline |
| Threedium's Single-Image Pipeline | 45-90 seconds | 80-320× faster |

The AI system uses learned geometric constants to reconstruct complete 3D character models from partial visual information, inferring occluded surface regions from statistical probability models and eliminating the comprehensive multi-angle photographic documentation that traditional methods require. Threedium's single-image pipeline achieves full 3D reconstruction within 45-90 seconds, compared to the 2-4 hours required by a traditional photogrammetry workflow, an 80-320× speed improvement.
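
The 80-320× range follows directly from the two time ranges quoted above. The short check below reproduces the arithmetic; the function name `speedup_range` is illustrative.

```python
def speedup_range(baseline_hours=(2, 4), pipeline_seconds=(45, 90)):
    """Return (smallest, largest) speed-up between the two workflows."""
    fastest_baseline, slowest_baseline = (h * 3600 for h in baseline_hours)
    fastest_pipeline, slowest_pipeline = pipeline_seconds
    # Smallest gain: quickest photogrammetry run vs. slowest single-image run.
    smallest = fastest_baseline / slowest_pipeline
    # Largest gain: slowest photogrammetry run vs. quickest single-image run.
    largest = slowest_baseline / fastest_pipeline
    return smallest, largest
```

Calling `speedup_range()` returns `(80.0, 320.0)`: 7,200 seconds divided by 90 seconds at the low end, and 14,400 seconds divided by 45 seconds at the high end.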

You gain this time efficiency because the neural network runs inference in a single forward pass over the entire model, rather than iteratively optimizing camera pose parameters and triangulating point correspondences across dozens of images.

Material Property Reconstruction

Threedium's technology performs high-fidelity texture reconstruction by analyzing the light-material interactions visible in the input photograph, then propagating material properties across the entire 3D model surface, including surfaces the camera did not capture. To enable physically accurate rendering, the NeRF component learns models of bidirectional reflectance distribution functions (BRDFs, mathematical functions describing how a surface reflects light), which characterize how light scattering varies by material type:

- Matte fabric exhibits uniform light absorption and diffuse light scattering

- Metallic armor produces specular highlights occurring at specific reflection angles

- Leather material exhibits subsurface scattering creating subtle translucency effects

Upload a photograph taken under standard indoor lighting, and the system generalizes its material behavior models to predict how your Batman costume appears under other lighting, because the neural network has encoded the relationships between surface properties and light transport from training data spanning multiple lighting environments.

The texture reconstruction pipeline extracts color information and normal map (texture encoding surface orientation information for lighting calculations in 3D graphics) data from visible photograph regions, then uses the GAN component to synthesize believable texture continuations filling occluded surface areas while maintaining visual coherence.

When you capture a frontal costume view showing the fabric texture of the chest emblem, the system synthesizes matching fabric patterns for the cape's posterior surface by drawing on learned textile distributions with weave patterns at similar spatial scales, ensuring visual consistency.

The discriminator network enforces spatial frequency consistency as a quality control step:

- Synthetic textures must maintain scale-appropriate detail, with stitching patterns appearing at appropriate spatial scales

- Weathering effects must follow physically plausible distributions

- Color gradients must transition smoothly without visible seam artifacts

You obtain a 3D model whose textures wrap continuously around geometric surfaces without the repetitive tiling artifacts common in manually UV-mapped models (UV mapping is the process of projecting 2D texture coordinates onto 3D model surfaces), because the AI system generates unique texture content for each surface region rather than reusing pre-made texture tiles.

| Material Category | Surface Type | BRDF Model |
|---|---|---|
| Matte polyester | Cape fabric | Diffuse reflection |
| Semi-gloss rubber | Cowl material | Mixed diffuse/specular |
| Metallic | Armor plating | Specular reflection |
| Leather | Glove surfaces | Subsurface scattering |
| Fabric | Bodysuit regions | Diffuse with texture |
| Reflective | Utility belt components | High specular |
| Transparent | Visor materials | Transmission/refraction |

Threedium's material analysis component classifies seven material categories, then assigns a category-specific BRDF model to each material region. This material classification works in your favor because the 3D character exhibits physically accurate light interactions specific to each costume component rather than uniform shading across all surfaces.
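
The difference between per-material and uniform shading can be sketched with a lookup table and a simple reflectance model. Lambert diffuse plus Blinn-Phong specular is used here as a stand-in for the category-specific BRDF models. The coefficients are hypothetical values chosen for illustration, only three of the seven categories are shown, and subsurface scattering and transmission are not modelled.

```python
import numpy as np

# Hypothetical coefficients for illustration; the article gives no values.
MATERIALS = {
    "cape_matte_polyester": {"kd": 0.9, "ks": 0.0, "shininess": 1.0},
    "cowl_semi_gloss_rubber": {"kd": 0.6, "ks": 0.3, "shininess": 16.0},
    "armor_metallic": {"kd": 0.1, "ks": 0.9, "shininess": 64.0},
}


def _unit(v):
    v = np.asarray(v, dtype=float)
    return v / np.linalg.norm(v)


def shade(material, normal, light_dir, view_dir):
    """Lambert diffuse plus Blinn-Phong specular for one surface point."""
    m = MATERIALS[material]
    n, l, v = _unit(normal), _unit(light_dir), _unit(view_dir)
    n_dot_l = max(float(n @ l), 0.0)
    if n_dot_l == 0.0:
        return 0.0                       # light is behind the surface
    h = _unit(l + v)                     # half vector between light and viewer
    specular = m["ks"] * max(float(n @ h), 0.0) ** m["shininess"]
    return m["kd"] * n_dot_l + specular
```

With the light directly overhead, the matte cape returns the same intensity from every viewing direction, while the metallic armor is bright only when the viewer lines up with the reflection. That contrast is what a single uniform shader cannot reproduce.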

View-Dependent Rendering Effects

View-dependent effects represent visual properties of a surface that change based on viewing angle, and our NeRF-GAN framework captures these effects by modeling the directional component of light reflection within the neural radiance field representation. You see:

- Specular highlights that move across armor plates as viewing angle changes

- Fabric sheen that intensifies at grazing angles along cape edges

- Fresnel reflections that strengthen at the periphery of curved surfaces

The NeRF component encodes these view-dependent phenomena by including viewing direction as an input parameter to the neural network alongside spatial position, allowing the model to predict different color values for the same 3D coordinate when observed from different angles.

Rotate your generated 3D character in the viewport above, and the bat symbol's metallic finish exhibits realistic highlight patterns that shift position and intensity based on the virtual camera's location relative to the chest plate's surface normal.

This view-dependent modeling captures:

- The shimmer on a cape's satin lining

- The anisotropic reflections on brushed metal armor components

- The subsurface scattering that creates a subtle glow around the edges of thick rubber cowl material when backlit

The system learns these optical behaviors from training photographs that show Batman costumes under varied lighting conditions and viewing angles, extracting the mathematical relationships between surface orientation, light direction, and observer position that govern realistic material appearance. You benefit from this sophisticated light transport modeling because your 3D character exhibits the same visual richness as professionally photographed costumes, with highlights, shadows, and reflections that respond dynamically to lighting changes in real-time rendering engines rather than remaining static as they would in simple texture-mapped models.

Our view-dependent shader implementation samples the NeRF's learned directional radiance function at 8 to 16 discrete viewing angles during generation, then encodes this directional information into spherical harmonic coefficients that real-time renderers can evaluate efficiently. You export your Batman character to game engines or AR applications, and the model maintains view-dependent visual effects without requiring the computational overhead of full neural network evaluation at runtime.
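
Encoding directional samples into spherical harmonic coefficients can be sketched as a least-squares fit. The example below uses real spherical harmonics up to degree 1 (four coefficients) in one common sign convention, with 16 sample directions. The degree, the Fibonacci sampling pattern, and all function names are assumptions for illustration, since the text specifies only the 8 to 16 viewing angles.

```python
import numpy as np


def sh_basis(dirs):
    """Real spherical harmonics up to degree 1 for unit vectors of shape (N, 3)."""
    x, y, z = dirs[:, 0], dirs[:, 1], dirs[:, 2]
    return np.stack([
        np.full_like(x, 0.282095),   # Y_0^0, constant term
        0.488603 * y,                # Y_1^-1
        0.488603 * z,                # Y_1^0
        0.488603 * x,                # Y_1^1
    ], axis=1)


def fibonacci_sphere(n):
    """Roughly uniform unit directions on the sphere."""
    i = np.arange(n) + 0.5
    z = 1.0 - 2.0 * i / n
    phi = np.pi * (1.0 + 5.0 ** 0.5) * i
    r = np.sqrt(1.0 - z * z)
    return np.stack([r * np.cos(phi), r * np.sin(phi), z], axis=1)


def fit_sh(dirs, radiance):
    """Least-squares SH coefficients for radiance sampled at `dirs`."""
    coeffs, *_ = np.linalg.lstsq(sh_basis(dirs), radiance, rcond=None)
    return coeffs


def eval_sh(coeffs, dirs):
    """Reconstruct radiance for arbitrary directions from the coefficients."""
    return sh_basis(dirs) @ coeffs
```

At runtime a renderer only needs `eval_sh`, a handful of multiply-adds per pixel, instead of a full network query. Sharper highlights need higher-degree bands than the four coefficients used in this sketch.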

Optimized Mesh Topology

Mesh topology defines the underlying structure of a 3D model through the arrangement of vertices, edges, and polygons that form its surface, and our generation pipeline produces clean topology optimized for animation and rendering rather than the irregular, artifact-laden meshes that photogrammetry typically creates. You receive a character model with edge loops that follow anatomical contours:

- Circular loops around joints to facilitate bending

- Longitudinal loops along limbs to support stretching

- Radial loops around the face to enable expression deformation

The system achieves this topological organization by training the neural network to output structured mesh connectivity patterns rather than unstructured point clouds, learning from professionally modeled character templates how vertices should distribute across curved surfaces to minimize distortion during animation.

The hybrid architecture generates quad-dominant polygon layouts where four-sided faces predominate over triangles, providing the geometric flexibility that character animators require for deformation without introducing shading artifacts.

Animate your Batman character through action poses, and the mesh bends smoothly at the following joints, because the underlying topology contains sufficient edge resolution in high-deformation areas while maintaining efficient polygon counts in rigid regions like armor plates:

- Shoulder joints

- Elbow hinges

- Knee articulations

The GAN component ensures polygon flow follows the directional grain of costume elements, because the discriminator network rejects mesh configurations that violate the structural logic of superhero costume construction. Edge loops:

- Circle around the cowl's eye openings

- Follow the radiating folds of cape fabric from shoulder attachment points

- Trace the segmented panels of abdominal armor

| Region | Polygon Allocation | Purpose |
|---|---|---|
| Head and cowl | 40% (6,000-10,000 faces) | Facial detail |
| Torso | 30% (4,500-7,500 faces) | Costume emblem clarity |
| Limbs and cape | 30% (4,500-7,500 faces) | Animation flexibility |
| Total | 15,000-25,000 faces | Full-body character |

Our topology optimization algorithm maintains polygon counts between 15,000 and 25,000 faces for full-body Batman characters. You benefit from this strategic polygon distribution because the model contains sufficient geometric resolution for close-up renders while remaining efficient enough for real-time game engine performance at 60 frames per second.
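
The per-region face counts in the table follow from applying the percentage shares to the total budget. The sketch below reproduces that arithmetic; the function and dictionary names are illustrative, and integer division is used so the results are exact for the budgets quoted here.

```python
# Percentage shares taken from the allocation table.
ALLOCATION_PERCENT = {"head_and_cowl": 40, "torso": 30, "limbs_and_cape": 30}


def allocate_faces(total_faces):
    """Split a full-body face budget across regions by percentage share."""
    if not 15_000 <= total_faces <= 25_000:
        raise ValueError("budget outside the 15,000-25,000 face range")
    return {region: total_faces * pct // 100
            for region, pct in ALLOCATION_PERCENT.items()}
```

`allocate_faces(15_000)` gives 6,000 faces for the head and cowl and 4,500 each for the torso and for the limbs and cape. `allocate_faces(25_000)` gives 10,000, 7,500, and 7,500, matching the upper ends of the ranges in the table.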

Automated Multi-Scale Detail Generation

You achieve detail levels in minutes that manual 3D modeling would require days to sculpt because our AI automatically generates micro-surface details like fabric weaves, armor scratches, and costume stitching through learned procedural synthesis. The GAN component applies detail layers at multiple scales:

- Macro-scale features like cape folds and armor panel separations

- Meso-scale elements like fabric texture and leather grain

- Micro-scale details like thread patterns and surface scratches

The system learns appropriate detail density for each costume component from training data, applying:

- Fine stitching patterns to fabric regions

- Impact dents to metal armor

- Subtle wrinkles to flexible joint areas

Upload a simple photograph, and the output includes these professionally crafted details without requiring you to manually sculpt, paint, or apply displacement maps, because the neural network has internalized the visual complexity that makes superhero costumes appear production-ready rather than simplified.

This automated enhancement operates through hierarchical detail synthesis, where the NeRF component establishes large-scale geometric forms and the GAN component progressively adds finer details through multiple generation passes. You see this hierarchy in the final model:

- The overall silhouette matches your body proportions from the input photo

- The mid-level costume shapes follow Batman's design language

- The fine details exhibit the hand-crafted quality of professional costume fabrication

The discriminator network evaluates detail authenticity at each scale, ensuring that:

- Fabric wrinkles follow physically plausible stress patterns

- Armor weathering concentrates at edges and corners where wear naturally occurs

- Stitching follows the structural seams where costume pieces would realistically join

| Detail Level | Resolution | Coverage | Purpose |
|---|---|---|---|
| Large costume panels | 512×512 pixels | Macro-scale | Overall surface patterns |
| Medium elements | 1024×1024 pixels | Meso-scale | Gloves and boots detail |
| Hero details | 2048×2048 pixels | Micro-scale | Chest emblem and cowl |

Our multi-scale detail generation system applies normal map displacements at three resolution levels. You receive a character model where surface detail remains crisp and readable at any viewing distance, from full-body shots to extreme close-ups of individual costume components.