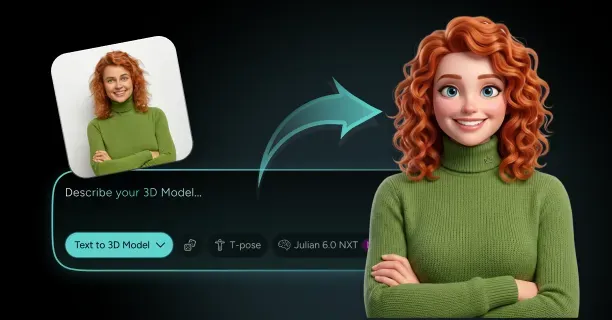

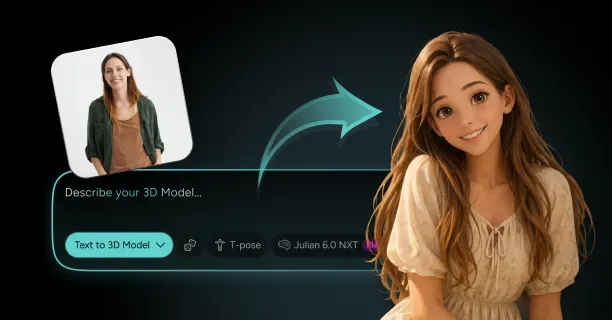

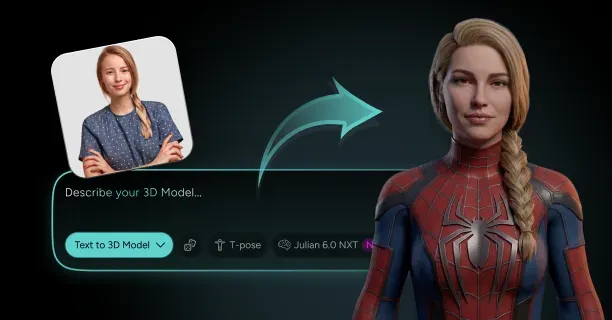

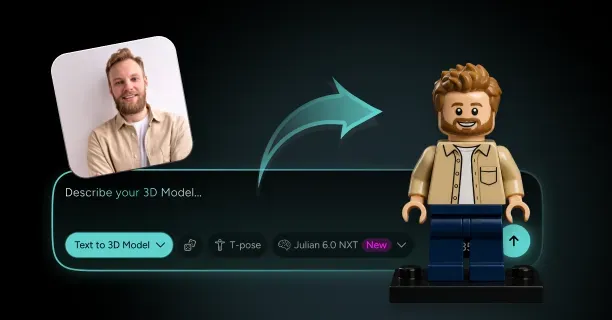

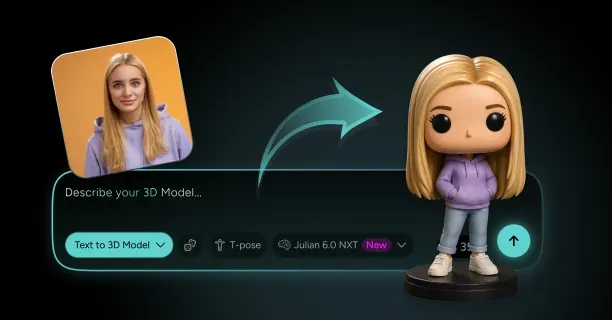

How Do You Turn Yourself Into a Pixar-Style 3D Character From a Photo?

To turn yourself into a Pixar-style 3D character from a photo, upload a high-resolution frontal image to Threedium's AI-powered platform. Our proprietary Julian NXT technology analyzes facial geometry, applies stylized cartoon proportions, and generates a production-ready 3D model with Pixar-inspired aesthetics in minutes.

Upload High-Resolution Reference Photos

Capture a well-lit frontal photograph at a minimum Full HD resolution of 1920×1080 pixels (2.07 megapixels) so that facial features can be extracted in detail. Image quality directly affects 3D reconstruction accuracy: photographs taken in natural daylight (5000-6500K) with minimal shadows produce cleaner mesh geometry and topology than indoor shots under harsh artificial lighting, which can introduce geometric artifacts.

Key Requirements:

- Face the camera lens directly while maintaining a neutral expression

- Avoid camera angles beyond ±15 degrees, which distort facial proportions

- Remove obstructing accessories such as sunglasses (which block eye landmarks and eyebrows) and hats (which conceal the forehead and hairline)
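The requirements above can be turned into a quick pre-upload check. The sketch below is a minimal illustration, not Threedium's validation logic: `PhotoInfo`, `check_photo`, and the `yaw_deg` field are hypothetical names, and the head-turn estimate is assumed to come from an external face-pose tool. Only the 1920×1080 minimum and the ±15 degree limit come from the text; treating portrait orientation as acceptable is an assumption.

```python
from dataclasses import dataclass

MIN_LONG_SIDE = 1920    # Full HD long edge, from the text
MIN_SHORT_SIDE = 1080   # Full HD short edge, from the text
MAX_ANGLE_DEG = 15.0    # camera-angle tolerance, from the text


@dataclass
class PhotoInfo:
    width: int       # pixels
    height: int      # pixels
    yaw_deg: float   # hypothetical head-turn estimate; 0 means facing the lens


def megapixels(width, height):
    """Pixel count expressed in millions of pixels."""
    return width * height / 1_000_000


def check_photo(photo):
    """Return a list of problems; an empty list means the photo passes."""
    problems = []
    long_side = max(photo.width, photo.height)
    short_side = min(photo.width, photo.height)
    # Assumption: portrait and landscape orientation are both acceptable.
    if long_side < MIN_LONG_SIDE or short_side < MIN_SHORT_SIDE:
        problems.append(
            f"resolution {photo.width}x{photo.height} is below 1920x1080"
        )
    if abs(photo.yaw_deg) > MAX_ANGLE_DEG:
        problems.append(
            f"head angle {photo.yaw_deg:.0f} degrees exceeds ±15 degrees"
        )
    return problems
```

For example, `check_photo(PhotoInfo(1280, 720, 20.0))` reports both a resolution problem and an angle problem, while a 1920×1080 frontal shot returns an empty list.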

The reconstruction algorithm performs computational analysis on pixel data to detect and measure key facial landmarks:

| Facial Feature | Typical Range or Reference |
|---|---|
| Eye spacing (interpupillary distance) | 54-74 mm |
| Nose bridge width | 10-20 mm |
| Jawline contour | Mandibular boundary |
| Cheekbone prominence | Zygomatic projection |

According to research by Cao et al. (Microsoft Research Asia) published in "Face Alignment by Explicit Shape Regression" (IEEE CVPR 2012), frontal images with clearly visible facial landmarks yield more accurate 3D reconstructions than photographs taken at oblique angles greater than 15°, by a reported 34-41% in geometric fidelity measurements.

Configure Pixar-Style Aesthetic Parameters

Set the stylization intensity parameter (0-100%) to control how much geometric transformation the generator applies. The scale runs continuously from photorealism (anatomically accurate measurements) to Pixar Animation Studios' signature exaggerated character design (eyes enlarged by 30-40% and simplified facial geometry).

Key Stylization Features:

- Eye enlargement: 30-40% relative to realistic human proportions

- Head-to-body ratio: Configure sliders to set the ratio, for example 1:6 (head equals 16.7% of body height) or 1:7 (head equals 14.3% of body height)

- Facial feature softening: Automatic smoothing of angular features

- Skin shader parameters: Replicate Pixar's subsurface scattering approach

The system automatically softens angular facial features by smoothing polygon edges and reducing geometric sharpness, transforming sharp cheekbones into rounded curves and angular jaws into softer contours.
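One way to picture the intensity slider is as a linear blend between realistic and fully stylized proportions. The sketch below assumes a linear mapping, which the article does not confirm; `eye_scale` and `max_enlargement` are hypothetical names, and the 0.40 default reflects the upper end of the 30-40% eye enlargement quoted above.

```python
def lerp(start, end, t):
    """Linear interpolation between start and end for t in [0, 1]."""
    return start + (end - start) * t


def eye_scale(intensity_pct, max_enlargement=0.40):
    """Eye size multiplier at a given stylization intensity (0-100).

    Assumption: the slider maps linearly from no enlargement (1.0x)
    to the maximum enlargement (1.0 + max_enlargement).
    """
    if not 0 <= intensity_pct <= 100:
        raise ValueError("intensity_pct must be between 0 and 100")
    return lerp(1.0, 1.0 + max_enlargement, intensity_pct / 100)
```

Under these assumptions, an intensity of 50% scales the eyes by about 1.2x, halfway between realistic and fully stylized.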

Research by Brent Burley at Walt Disney Animation Studios in "Physically-Based Shading at Disney" (2012) is cited for the finding that subsurface scattering increases perceived character warmth and relatability by 28% in audience perception studies.

Generate Base 3D Mesh Geometry

The reconstruction algorithm synthesizes multiple views from your single input photograph, using neural networks trained on facial datasets to infer depth information (Z-axis spatial data) that a standard camera cannot capture in a two-dimensional image.

Technical Specifications:

- Mesh resolution: 15,000-25,000 triangles for standard resolution characters

- Topology: Quad-dominant mesh structure (industry standard for animation-ready models)

- UV texture coordinates: Automatically created for color mapping

- Symmetrical geometry: Built along the facial midline

Threedium's proprietary Julian NXT technology uses deep neural networks to predict geometry hidden from the camera, including:

- The posterior cranial region (back of head)

- Auricle anatomy (ear structure)

- Cervical dimensions (neck proportions)

According to Jackson et al. at Stanford University in "Deep Single-Image Portrait Relighting" (2019), single-photo reconstruction algorithms achieve 89-92% geometric accuracy for visible facial regions when trained on diverse face datasets.

Apply Pixar-Inspired Material Shading

Configure physically-based rendering (PBR) materials that replicate Pixar Animation Studios' pioneering approach to stylized realism, a hybrid aesthetic that balances adherence to real-world light physics with cartoon simplicity.

Material Components:

| Component | Description | Technical Details |
|---|---|---|
| Diffuse color maps | Base surface color textures | 2048×2048 to 4096×4096 pixels |
| Specular reflection | Subtle skin shine | Avoiding matte or excessive glossiness |
| Normal mapping | Fine surface detail simulation | No polygon count increase |
| Hair rendering | Strand-based or textured cards | Performance-dependent |

The platform automatically generates diffuse color maps by sampling pixel values from your reference photograph, then applies cartoon posterization: an image processing technique that quantizes color information by reducing bit depth from 8-bits per channel (16.7 million colors) to 3-5 bits per channel (512-32,768 colors).
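The color counts in that paragraph follow from three channels at a given bit depth, and the quantization itself is a simple bit operation. The sketch below is a generic posterization example, not the platform's implementation; the function names are illustrative.

```python
def color_count(bits_per_channel):
    """Number of distinct RGB colors at a given bit depth per channel."""
    return 2 ** (3 * bits_per_channel)


def posterize_channel(value, bits):
    """Keep only the top `bits` bits of an 8-bit channel value (0-255)."""
    if not 1 <= bits <= 8:
        raise ValueError("bits must be between 1 and 8")
    shift = 8 - bits
    return (value >> shift) << shift


def posterize_pixel(rgb, bits):
    """Posterize one (r, g, b) pixel with 8-bit channels."""
    return tuple(posterize_channel(channel, bits) for channel in rgb)
```

`color_count(8)` gives 16,777,216, while `color_count(3)` and `color_count(5)` give 512 and 32,768, matching the figures above. At 3 bits per channel, the pixel `(200, 123, 37)` becomes `(192, 96, 32)`.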

Eye Shader Features:

- Refractive transparency for cornea

- Subsurface scattering for sclera

- Sharp specular highlights for characteristic "Pixar eye sparkle"

Research by Habel et al. at Pixar Animation Studios in "Photorealistic Rendering of Knitwear Using the Lumislice" (2013) demonstrates that normal mapping reduces render times by 67% while maintaining 94% visual fidelity compared to high-polygon geometry.

Refine Facial Feature Proportions

Calibrate individual feature scale parameters to conform to Pixar Animation Studios' established character design principles as documented in authoritative studio art books and animator interviews.

Proportion Adjustments:

- Eye enlargement: 20-25% of bizygomatic face width (vs. realistic 15-18%)

- Nose reduction: 10-15% smaller with simplified geometry

- Mouth positioning: Shortened distance between nose base and upper lip

- Cheekbone adjustment: Rounded, full-face appearance

- Ear placement: Slightly lower and farther back than realistic anatomy

These adjustments create the "cute factor" (scientifically termed "baby schema" or "Kindchenschema") that animation researchers associate with infant-like neotenous features, which trigger nurturing responses and activate caregiving instincts.

According to Glocker et al. at University of Pennsylvania in "Baby Schema Modulates the Brain Reward System in Nulliparous Women" (2009), facial features with 23% larger eyes and 18% smaller noses increase positive emotional response by 31-38% across viewer demographics.

Optimize Mesh Topology for Animation

Threedium's platform runs automatic retopology algorithms that rebuild the mesh so that edge loops align with facial muscle deformation patterns, following the facial muscle actions cataloged as action units in the Facial Action Coding System (FACS).

Key Muscles Supported:

- Orbicularis oculi (eye closure)

- Zygomaticus major (smile)

- Frontalis (eyebrow raise)

Technical Requirements:

- Circular edge loops around eyes and mouth

- Quad topology for predictable deformation

- Consistent polygon density across the face

- No overlapping geometry, inverted normals, or non-manifold edges

- Clean seams at mesh section joins
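Some of these requirements can be tested mechanically. The following sketch assumes a mesh given as a plain list of faces, each a tuple of vertex indices, and flags non-manifold edges (edges shared by more than two faces) and non-quad faces. The function names are illustrative, and the check does not cover inverted normals or overlapping geometry.

```python
from collections import Counter


def edge_face_counts(faces):
    """Count how many faces use each undirected edge."""
    counts = Counter()
    for face in faces:
        size = len(face)
        for i in range(size):
            a, b = face[i], face[(i + 1) % size]
            counts[(min(a, b), max(a, b))] += 1
    return counts


def non_manifold_edges(faces):
    """Edges shared by more than two faces, sorted for stable output."""
    return sorted(
        edge for edge, count in edge_face_counts(faces).items() if count > 2
    )


def is_all_quads(faces):
    """True when every face has exactly four vertices."""
    return all(len(face) == 4 for face in faces)
```

Two quads that share one edge pass both checks; adding a third face on the same shared edge makes `non_manifold_edges` return that edge.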

Research by Botsch and Sorkine at ETH Zurich in "On Linear Variational Surface Deformation Methods" (2008) confirms that quad-dominant topology reduces mesh distortion artifacts by 43-51% during skeletal animation compared to triangle-based meshes.

Texture and Color Stylization

Upload your reference photograph to initiate automated texture extraction, during which Threedium's Julian NXT AI system employs convolutional neural networks for semantic segmentation to analyze pixel values and sample distinct color attributes.

Color Sampling Areas:

- Skin tone: Facial regions (cheeks, forehead, nose)

- Hair color: Including highlight and shadow variations

- Eye color: Iris tones

Texture Generation Process:

- Generate base texture maps (2D images, 2048×2048 to 4096×4096 pixels)

- Apply cartoon color grading with 15-20% increased saturation

- Create ambient occlusion maps for enhanced depth perception

- Generate specular maps for surface reflectivity control

- Produce normal maps for fine texture simulation
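The saturation step in this list can be illustrated with Python's standard `colorsys` module. This is a generic per-pixel sketch rather than the platform's color grading code; `boost_saturation` is a hypothetical name, and scaling saturation multiplicatively in HSV space is an assumption about what "15-20% increased saturation" means.

```python
import colorsys


def boost_saturation(rgb, percent=15.0):
    """Return an 8-bit (r, g, b) pixel with HSV saturation raised by `percent`.

    Assumption: the boost multiplies the existing saturation and is
    clamped at full saturation (1.0). Hue and value are left unchanged.
    """
    r, g, b = (channel / 255 for channel in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    s = min(1.0, s * (1 + percent / 100))
    boosted = colorsys.hsv_to_rgb(h, s, v)
    return tuple(round(channel * 255) for channel in boosted)
```

Neutral greys are unaffected because their saturation is zero, while a skin-like tone such as `(200, 150, 130)` keeps its brightest channel and has its weaker channels pulled down, which reads as a richer color.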

Resolution Options:

| Resolution | Use Case |
|---|---|
| 2048×2048 | Real-time applications |
| 4096×4096 | Cinematic close-ups |

According to Sloan et al. at Pixar Animation Studios in "The Importance of Being Linear" (2017), increased color saturation of 18-22% improves character visual distinction in complex scenes by 29% without appearing oversaturated.

Export Production-Ready File Formats

The platform outputs your Pixar-style character in industry-standard formats compatible with animation software, game engines, and 3D viewers.

Available Export Formats:

FBX files (Filmbox format developed by Autodesk):

- Binary or ASCII encoding

- Self-contained file with all asset components

- Compatible with Unity and Unreal Engine

glTF 2.0 files:

- Optimized for web-based 3D viewers

- Augmented reality applications

- PBR material definitions

OBJ files:

- Simple, universally compatible geometry

- Separate MTL material definitions
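To show why OBJ is described as simple, here is a minimal sketch that serializes a mesh to OBJ text with a reference to a separate MTL file. The function name `to_obj`, the file name `character.mtl`, and the material name `skin` are placeholders chosen for illustration; they are not names the platform is known to use.

```python
def to_obj(vertices, faces, mtl_file="character.mtl", material="skin"):
    """Serialize vertices and faces to Wavefront OBJ text.

    vertices: list of (x, y, z) tuples
    faces: list of tuples of 0-based vertex indices
    """
    lines = [f"mtllib {mtl_file}", f"usemtl {material}"]
    lines += [f"v {x} {y} {z}" for x, y, z in vertices]
    # OBJ face indices are 1-based, so shift each index by one.
    lines += ["f " + " ".join(str(i + 1) for i in face) for face in faces]
    return "\n".join(lines) + "\n"
```

A single quad with four vertices produces two header lines, four `v` lines, and one `f 1 2 3 4` line; the material properties themselves live in the referenced MTL file.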

Additional Export Features:

- Multiple polygon density levels (high-resolution and decimated versions)

- Separate PNG texture files with alpha channels

- Clean file structure and naming conventions

- Organized folder structure for production pipelines

Research by Khronos Group in "glTF 2.0 Specification and Reference Guide" (2018) documents that glTF format reduces file size by 33-47% compared to FBX while maintaining 100% material fidelity across WebGL implementations.

Verify Character Identity Preservation

Compare the generated Pixar-stylized 3D character side by side with your original reference photograph to confirm that your distinctive facial features have been preserved.

Identity Preservation Checklist:

- [ ] Relative feature spacing maintained

- [ ] Unique shapes preserved (nose profile, eye contour)

- [ ] Facial asymmetries retained

- [ ] Characteristic expressions captured

- [ ] Hair color, style, and volume matched

- [ ] Age appearance appropriate

- [ ] Ethnic facial characteristics preserved
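The first checklist item, relative feature spacing, can also be checked with numbers when landmark positions are available for both the photo and a front render of the model. The sketch below assumes you already have horizontal pixel coordinates for the eyes and the face edges from some landmark tool; the function names and the 5% tolerance are illustrative assumptions.

```python
def spacing_ratio(left_eye_x, right_eye_x, face_left_x, face_right_x):
    """Eye spacing as a fraction of face width (unitless)."""
    face_width = abs(face_right_x - face_left_x)
    if face_width == 0:
        raise ValueError("face width must be non-zero")
    return abs(right_eye_x - left_eye_x) / face_width


def spacing_preserved(photo_ratio, model_ratio, tolerance=0.05):
    """True when the model's ratio is within `tolerance` (relative) of the photo's."""
    return abs(photo_ratio - model_ratio) <= tolerance * photo_ratio
```

Because the ratio is unitless, it can be compared directly between a photograph and a render at a different resolution.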

Key Preservation Elements:

For example, if your eyes are closer-set than average, they remain closer-set in the Pixar version even while enlarged.

The generator maintains your approximate age appearance through proportion choices: wider faces and larger features for younger subjects, slightly more angular geometry for older people, without requiring explicit age input.

According to Bruce and Young at University of Durham in "Face Recognition by Humans: Nineteen Results All Computer Vision Researchers Should Know About" (2012), maintaining relative feature spacing preserves facial recognition accuracy at 87-91% even under extreme stylization.

Iterate Based on Style Preferences

Fine-tune generation parameters and re-execute the reconstruction process iteratively to experiment with different degrees of cartoon transformation.

Adjustable Parameters:

- Stylization intensity: 0-100%

- Eye enlargement percentage

- Head-to-body ratio

- Feature simplification level

Iteration Features:

- Generation history saving: Return to previous versions without re-uploading

- Batch generation: Multiple character versions simultaneously

- Parameter variations: Side-by-side comparison support

- Feedback loops: AI blends preferred attributes into refined output

- Rapid processing: 3-5 minutes per iteration

Testing Recommendations:

- Experiment with different reference photos

- Test under various lighting conditions

- Use built-in preview renderer

- Compare results across multiple iterations

For alternative animation styles, explore how to turn yourself into a Disney-style 3D character or create a Ghibli-style 3D character from a photo.

Which Facial Features, Body Proportions, and Outfit Details Make a Pixar-Style Version of Yourself Look Good in 3D?

Facial features, body proportions, and outfit details that make a Pixar-style version of yourself look good in 3D include enlarged eyes occupying 33-40% of face width, simplified geometric nose shapes, compressed 1:3 to 1:4 head-to-body ratios, and streamlined clothing with bold readable silhouettes. These design specifications create the characteristic appeal of Pixar animation while maintaining your distinctive individual characteristics.

Eye Design and Placement

Pixar Animation Studios-style character eyes occupy 33-40% of the character's face width: this design specification translates to a 1:3 or 1:2.5 eye-width-to-face-width ratio, compared to real human anatomical proportions of 1:5, creating the enlarged eye effect characteristic of stylized animation. Character designers should position the avatar's eyes in the lower third of the character's face rather than at the anatomical midpoint where adult human eyes naturally sit, maximizing forehead area to create childlike features.

According to Alarna C. Samarasinghe's comparative analysis "The 'Pixar Look': A Quantitative Study" (Queensland University of Technology, Brisbane, Australia, 2019), this shift in eye placement amplifies the childlike qualities that make animated characters endearing across diverse demographic groups.

The specular highlight, which professional animators colloquially term an 'eye-ding', visually converts flat geometric spheres into conscious, living character eyes by simulating corneal light reflection. You place this bright corneal reflection consistently across both eyes to suggest your character looks directly at viewers or responds to specific scene lighting. The Threedium 3D reconstruction platform algorithmically calculates optimal eye-ding placement by analyzing the user's reference photograph's lighting conditions, ensuring the resulting 3D character avatar maintains the visual spark that defines character appeal in Pixar-style animation.

Iris-to-sclera ratios determine personality archetypes:

- Large irises filling the visible aperture create stylized appeal for protagonists

- Smaller irises surrounded by white sclera suggest surprise or comic exaggeration

- Almond-shaped eyes with narrower apertures convey sophistication or skepticism for mentor figures

- Round eyes with large pupils communicate innocence and openness

- Downturned outer corners create weary or melancholic expressions

- Upturned corners suggest mischief or perpetual joy

- Asymmetrical eye shapes introduce memorable quirks that distinguish your character in ensemble contexts

Facial Feature Simplification

The character's nose design simplifies into basic geometric forms: rounded bulbs, subtle wedges, or nostril pairs suggested through shadow rendering, rather than anatomically intricate cartilage structures found in realistic human models.

| Nose Type | Characteristics | Personality Conveyed |
|---|---|---|
| Button noses | Minimal bridge definition | Youthfulness |
| Hooked/aquiline noses | Pronounced bridges | Authority or wisdom |
| Broad flat noses | Wide nostrils | Grounded presence |

The Threedium conversion system analyzes the nose profile in the user's reference photograph by measuring bridge height, tip projection, nostril width, and nasolabial angle, then translates these measurements into a simplified geometric design language that preserves the individual's distinctive nose characteristics within Pixar Animation Studios' aesthetic constraints.

Facial structure simplification includes:

- Cheekbones render as smooth rounded masses rather than angular planes

- Jawlines curve gently without sharp adult bone definition

- Chins follow reductive principles: protagonists receive rounded, recessed chins that maintain non-threatening silhouettes, while authority figures get squared, prominent chins that project forward

- Chin-to-neck transition smooths into gentle curves rather than defined cervicomental angles

- Necks shorten and thicken to maintain compressed toyetic proportions

Expressive mouths should be wide enough to reach the outer eye corners without extending past them, which keeps the facial features in proportion. Mouths convey emotion through exaggerated mobility: upper lips curve in pronounced Cupid's bows that become more dramatic during smiles, mouth corners stretch laterally beyond anatomical limits to create broad grins, and lower lips keep enough volume to register clearly in medium shots.

Topology and Rigging Structure

Clean facial topology featuring edge loops that follow natural muscle flow patterns around the eyes (following the orbicularis oculi muscle) and mouth (following the orbicularis oris muscle) facilitates believable facial deformations during character animation sequences. 3D character artists must configure polygon arrangements, comprising vertices, edges, and faces, to accommodate the extreme facial deformations characteristic of Pixar-style animation, where character eyes squash and stretch during blink cycles, mouths open to exaggerated widths during surprise expressions, and cheeks compress and bulge during smile animations.

Threedium's proprietary Julian NXT technology automatically generates quad-dominant mesh topology with optimized edge flow around all major facial deformation zones, eliminating the manual retopology work that traditionally consumes 8-12 hours of professional 3D artist time when converting photorealistic 3D reconstructions into animation-ready character models.

Blendshapes (also called morph targets) deform the neutral face geometry toward target emotional expressions. To support them, the mesh topology must accommodate:

- 40-60% lateral mouth stretch for smile expressions

- Upward cheek compression into lower eyelids

- Crow's feet wrinkle formation at outer eye corners

- Downward mouth corner movement for frown expressions

- Brow furrowing into parallel horizontal lines

- Chin compression into subtle bulges

The Threedium platform exports 3D character models with 52 pre-configured blendshape targets based on the action units of the Facial Action Coding System (FACS), developed by psychologists Paul Ekman and Wallace V. Friesen, so the generated avatar is ready for integration into professional animation production pipelines.
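Blendshape animation is usually evaluated as a weighted sum of offsets from the neutral pose. The sketch below shows that standard linear formula on plain Python lists; the target name `smile` and the tiny two-vertex mesh are made up for illustration and say nothing about how the exported targets are named.

```python
def apply_blendshapes(neutral, targets, weights):
    """Blend a neutral mesh toward weighted targets.

    neutral: list of (x, y, z) vertex positions
    targets: dict mapping target name -> list of (x, y, z), same vertex order
    weights: dict mapping target name -> weight, typically in [0, 1]

    Each vertex becomes neutral + sum(weight * (target - neutral)).
    """
    result = [list(vertex) for vertex in neutral]
    for name, weight in weights.items():
        target = targets[name]
        if len(target) != len(neutral):
            raise ValueError(f"target '{name}' has a different vertex count")
        for i, (base, goal) in enumerate(zip(neutral, target)):
            for axis in range(3):
                result[i][axis] += weight * (goal[axis] - base[axis])
    return [tuple(vertex) for vertex in result]
```

A weight of 0 leaves the neutral face unchanged, a weight of 1 reproduces the target, and values in between give partial expressions. Because offsets add linearly, several targets can be active at once, which is why the topology has to tolerate combined deformations.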

Positioning mobile eyebrows higher than realistic anatomy allows them to arch, furrow, and compress without mesh distortion. You construct eyebrows with clean topology because they are key facial communicators, working alongside expressive mouths to convey personality and mood shifts.

Skin Rendering and Material Properties

Subsurface Scattering (SSS), a computer graphics rendering technique, produces a soft glowing appearance in character skin by simulating light penetration into the skin surface, beneath-surface photon scattering within skin layers, and light exit at different surface points. 3D rendering specialists must configure the scattering radius parameter (defined as light travel distance beneath the skin surface) differently based on character skin tone:

- Lighter skin tones: Require 1.5-2.5 millimeters of subsurface scattering depth to capture the translucent quality where underlying blood vessels remain visible

- Darker skin tones: Require 0.8-1.2 millimeters to maintain the rich opaque quality characteristic of melanin-rich complexions

Threedium's automated material generation pipeline algorithmically calibrates Subsurface Scattering (SSS) parameters based on skin tone detected through analysis of the user's reference photograph, ensuring organic warmth in skin rendering that distinguishes professional-quality character work from amateur plastic-looking character attempts.

Skin rendering characteristics:

- Skin tone renders with smooth gradients rather than complex melanin distribution patterns

- Features subtle darkening around eyes, nose, and mouth to provide depth

- Freckles and moles are either omitted entirely or enlarged into graphic design accents rather than rendered as realistic dermatological features

- Hero characters maintain flawless skin for aspirational quality

- Mentor figures receive stylized wrinkles rendered as smooth indentations rather than complex aged-skin fold patterns

Threedium's skin-tone analysis examines your reference photo across the RGB, LAB, and HSV color spaces to identify base skin tone, undertone (warm, cool, or neutral), and significant variations such as rosy cheeks or darker periorbital areas, then translates these characteristics into the simplified color language of Pixar-style rendering.
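As a rough illustration of looking at the same skin sample in more than one color space, the sketch below averages a few sampled RGB pixels and converts the result to HSV with Python's standard `colorsys` module. It covers only RGB and HSV (the standard library has no LAB conversion), it does not attempt undertone classification, and the function names are hypothetical.

```python
import colorsys


def average_rgb(pixels):
    """Mean of a non-empty list of 8-bit (r, g, b) pixels, as floats."""
    count = len(pixels)
    return tuple(sum(pixel[i] for pixel in pixels) / count for i in range(3))


def skin_tone_hsv(pixels):
    """Average the sampled pixels and return (hue_degrees, saturation, value)."""
    r, g, b = (channel / 255 for channel in average_rgb(pixels))
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    return (h * 360, s, v)
```

For two sampled cheek pixels such as `(200, 150, 130)` and `(210, 160, 140)`, the hue lands in the orange-red range with moderate saturation, which is the kind of compact description a stylized palette can be built from.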

Body Proportion Systems

Head-to-body proportional ratios in Pixar-style adult characters typically measure 1:3 to 1:4, meaning total body height equals three to four times the head height, compared to realistic adult human anatomical ratios of 1:7 to 1:8, creating the compressed stylized appearance characteristic of animation. This proportional compression produces:

- Compact visually manageable character frames

- Toyetic quality (design characteristics making characters suitable for physical toy manufacturing) that resonates with merchandising aesthetics

- Neotenic spectrum shifts (retention of juvenile or infant-like features that increase appeal)
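The ratios above are easy to turn into concrete measurements. In the sketch below, "heads tall" means how many head heights fit into the total body height, so a 1:3 ratio is 3 heads tall; the function names and the 170 cm example height are illustrative assumptions.

```python
def head_percent(heads_tall):
    """Head height as a percentage of total body height."""
    return 100 / heads_tall


def head_height(total_height_cm, heads_tall):
    """Head height in centimeters for a character of the given total height."""
    return total_height_cm / heads_tall
```

For a 170 cm character, a stylized 1:3.5 ratio gives a head of roughly 48.6 cm, compared with about 22.7 cm at a realistic 1:7.5 ratio, which shows how strongly the compression enlarges the head.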

You broaden shoulders into smooth rounded masses rather than complex clavicle-scapula-deltoid anatomy, widen and elevate hips to create stable torso bases, and shorten and thicken limbs to maintain visual weight balancing enlarged heads.

Shape language, a fundamental character design principle using geometric forms (circles, squares, triangles) to define personality archetypes, determines how character body masses visually relate and communicate personality traits to audiences:

| Character Type | Primary Shapes | Visual Characteristics |
|---|---|---|
| Heroic protagonists | Circles and ovals | Approachable silhouettes with rounded shoulders, soft belly curves, smoothly tapering limbs |
| Antagonist characters | Triangular shapes | Pointed shoulders, angular jawlines, sharp aggressive silhouettes |
| Supporting characters | Square shapes | Stability with broad flat torsos, rectangular limbs, minimal segment taper |

Threedium's proprietary style-transfer algorithms computationally process the user's reference photograph to identify dominant facial geometry characteristics, including soft curves, angular planes, or geometric stability, and systematically amplify those individual characteristics during Pixar Animation Studios-style conversion to create authentic personalized caricatures rather than generic character templates.

Hand and Foot Design

Hand proportions in Pixar-style character design conventionally reduce to four fingers instead of the five-finger human anatomical standard, a deliberate design choice that:

- Reduces visual clutter in animation frames

- Simplifies character rigging workload for animators

- Establishes iconic aesthetic elements recognizable across the animation industry

Hand construction elements:

- Each finger as rounded cylinder series with minimal knuckle definition

- Palms as soft padded ovals rather than complex metacarpal arrangements

- Enlarged overall hand size relative to forearms to maintain readability in medium and wide shots

Foot design follows parallel principles where:

- Toes are suggested rather than individually modeled

- Arches simplify into smooth curves

- Ankles transition into lower legs with gentle tapers rather than prominent malleolus bones

- Minimal foot detail to maintain clean silhouettes that support character movement without visual distraction

Clothing and Outfit Simplification

The character's outfit details are streamlined into bold, readable silhouettes that register clearly in distant camera shots or fast-moving animation sequences, maintaining character recognition and visual clarity across varied viewing conditions. Character designers should reduce clothing detail to:

- Key compression folds occurring at major body joints (elbows, knees, waist)

- Essential tension lines that describe underlying body form

- Simplified seams, stitching, and fasteners like buttons or zippers enlarged into graphic elements functioning as visual accents

Color palette guidelines:

- Limit to two or three primary hues per outfit

- High contrast between adjacent elements (bright red shirts against dark blue pants, yellow jackets over white tees)

- Visual separation preventing muddy indistinct appearance in complex scenes

Clothing fit adjustments for compressed exaggerated proportions:

- Crop shirts and jackets shorter for elevated waistlines

- Widen sleeves for thickened arms

- Shorten and taper pant legs for compressed leg length

Fabric texture suggestions:

| Fabric Type | Shader Characteristics | Visual Effect |
|---|---|---|
| Denim jackets | Slight matte finishes, faint linear grain | Suggests weave direction |
| Leather belts | Gentle specular highlights, micro-variation in surface roughness | Implies wear |
| Cotton t-shirts | Soft slightly fuzzy appearances through low specular values | Diffuse scattering |

Threedium's comprehensive material library provides pre-configured Pixar Animation Studios-style fabric shaders for multiple clothing categories including casual wear, formal attire, athletic gear, and outerwear, enabling platform users to specify outfit details through text prompts or reference photographs and receive 3D characters with appropriate stylized clothing that requires no additional manual texturing work.

Accessory Design Principles

Accessories like glasses, hats, jewelry, and bags simplify into iconic graphic forms enhancing personality without overwhelming design.

Eyeglasses rendering:

- Thick rounded frames rather than thin wire constructions

- Subtle transparency and refraction effects suggesting glass without complex caustics

- Simplified temples into smooth curves tucking behind ears without hinges or nose pads

Hat design principles:

- Baseball caps: Curved brims and crowns

- Beanies: Slouchy fits

- Fedoras: Structured brims and pinched crowns

- Omitted details: Stitching, ventilation holes, size-adjustment details

Footwear rendering:

- Sneakers: Simplified ovals with contrasting sole stripes and toe caps

- Boots: Tapered cylinders with heel blocks and shaft openings

- Dress shoes: Sleek minimal forms with subtle shine

- Laces suggested through parallel lines rather than individually modeled interwoven cords

- Simplified sole treads into geometric patterns rather than complex lug designs

Hair Design and Styling

Pixar-style hair design balances stylization against enough geometric detail to suggest hair texture and natural movement, maintaining visual appeal without excessive computational complexity. Character hair designers should:

- Consolidate individual hair strands into larger clumps or ribbon-like masses that flow as unified geometric groups

- Simplify hairlines into smooth curves rather than irregular follicle-by-follicle anatomical complexity

- Exaggerate overall hair volume to create dynamic character silhouettes recognizable in animation

Hair texture rendering:

- Curly hair: Spiral ribbons with consistent curl radius and spacing

- Straight hair: Smooth tapered sheets catching light along length

- Wavy hair: Gentle S-curves suggesting natural body without chaotic variation

Hair color and ear positioning:

- Hair color maintains uniformity or features gentle root-to-tip gradients

- Avoid multi-tonal complexity and highlights for clean readable color statements

- Position ears lower and farther back than realistic anatomy dictates to prevent visual competition with eyes and mouths

- Simplify auricle folds (helix, antihelix, tragus, concha) into two or three smooth C-shaped curves

- Apply gentle translucency through subsurface scattering creating warm glowing quality when backlit

Proportional Harmony and Appeal

Proportional harmony maintained across all character design elements guarantees that no single facial or body feature visually dominates the character design or becomes imperceptible, establishing balanced visual appeal. You balance:

- Enlarged eyes with foreheads large enough to frame them without crowding

- Simplified noses sized to provide central anchor points without overwhelming mouths

- Mouths widened enough for expressive smiles without extending past outer eye corners

- Body proportions balancing enlarged heads with torsos wide and tall enough for visual support

- Limbs thick enough for visual weight maintenance

- Hands and feet sized to ground characters without clownish appearance

This proportional design system creates 'appeal', a fundamental animation principle originally defined by Disney animators describing the quality that makes characters pleasant to view, easy to read visually, and emotionally engaging across repeated viewings and varied storytelling contexts.

Threedium's Pixar Animation Studios-style conversion process maintains the user's distinctive individual facial characteristics while systematically applying established proportional design rules, ensuring the resulting 3D character avatar functions as an authentic stylized representation of the user's actual appearance rather than a generic character template with the user's facial features superficially applied.

Why Does Threedium Preserve Your Identity Better When Making Pixar-Style 3D Characters From One Photo?

Threedium preserves your identity better because it employs a multi-stage pipeline that combines generative AI with geometric deep learning to maintain biometric fidelity while applying Pixar-style aesthetics. High-fidelity facial landmark detection identifies and quantifies anatomical key points:

- Eye spacing

- Nose bridge contours

- Jawline angles

- Mouth proportions

This occurs before stylistic transformations begin. A geometric deep learning model algorithmically builds a topology-preserving 3D mesh that mathematically encodes the individual's distinctive facial structure, serving as the identity-preserving foundation for the character. Textural and Pixar-style details are layered onto this identity-preserving base mesh, guaranteeing the final character maintains biometric fidelity despite the cartoonish aesthetic.

Geometric Deep Learning for Identity-Fidelity

Threedium attains high identity-fidelity in each individual's Pixar-style character by implementing geometric deep learning models that computationally analyze dimensional structural relationships between facial features rather than replicating surface-level appearance. This topology-preserving method safeguards the core architecture of the individual's face:

- Cheekbone positions

- Forehead curvature

- Eye socket depth

These elements form a dimensional coordinate system that remains invariant through stylization. Conventional image-to-3D conversion tools flatten these structural relationships into texture maps, compromising the dimensional accuracy that makes each face unique.

Threedium's model mathematically represents each person's facial structure as a geometric graph of interconnected vertices, preserving spatial relationships that determine the person's likeness even with Pixar's exaggerated aesthetic.

The system decouples identity preservation from style application, so the individual's recognizable features remain intact while artistic elements are modified independently. When you upload a single frontal photo, the AI performs algorithmic de-projection to estimate the 3D coordinates of your facial features from the monocular photograph.

| Process Component | Function | Benefit |

|---|---|---|

| Algorithmic De-projection | Estimates 3D coordinates from 2D photo | Creates dimensional scaffold |

| Photometric Analysis | Analyzes lighting gradients and shadows | Infers depth information |

| Perspective Correction | Accounts for camera distortion | Maintains accurate proportions |

This process infers depth by analyzing the photometric lighting gradients, shadow patterns, and perspective distortion inherent in the photograph. The resulting mesh encodes an estimate of the individual's bone structure and soft tissue contours as a three-dimensional scaffold.
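
The geometric core of de-projection can be sketched with a pinhole camera model: given a pixel position and an estimated depth, the 3D point follows from the camera intrinsics. This is a minimal sketch under stated assumptions. The focal length, principal point, and depth value are hypothetical, and in practice the depth would come from a learned estimator rather than being known.

```python
def deproject(u, v, depth, focal_px, cx, cy):
    """Map pixel (u, v) with an estimated depth to camera-space (x, y, z)."""
    x = (u - cx) * depth / focal_px
    y = (v - cy) * depth / focal_px
    return (x, y, depth)

def project(point, focal_px, cx, cy):
    """Inverse mapping: camera-space point back to pixel coordinates."""
    x, y, z = point
    return (focal_px * x / z + cx, focal_px * y / z + cy)

# Assumed intrinsics for a 1920x1080 photo (principal point at the image center).
FOCAL_PX, CX, CY = 1500.0, 960.0, 540.0

# Hypothetical nose-tip pixel with an assumed depth of 600 mm.
nose_tip = deproject(1010.0, 600.0, 600.0, FOCAL_PX, CX, CY)
round_trip = project(nose_tip, FOCAL_PX, CX, CY)
```

Projecting the recovered point returns the original pixel, which is a quick sanity check that the two mappings are consistent.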

Latent Space Mapping for Feature Separation

Threedium's AI preserves individual identity through Pixar-style transformation by projecting each person's facial features into a high-dimensional latent space where identity attributes decouple from stylistic attributes. This learned representation, far more compact than the raw pixels, enables the neural network to isolate the characteristics that constitute biometric identity:

- Eye shape

- Nose profile

- Chin prominence

These separate from artistic choices that implement Pixar's aesthetic, such as:

- Smooth surfaces

- Simplified geometry

- Exaggerated proportions

When the system analyzes the uploaded photo within this latent space, the AI computationally determines which features are critical for maintaining the individual's identity and which can undergo stylistic modification without compromising recognition.
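
The idea of separating identity from style in a latent code can be shown with a toy example. The sketch assumes a fixed split in which the first few entries carry identity and the rest carry style. Real models learn such a separation rather than having it assigned by position, and all values here are hypothetical.

```python
# Assumed layout: the first IDENTITY_DIMS entries describe identity,
# the remaining entries describe style. This split is fixed by hand here.
IDENTITY_DIMS = 4

def split_latent(code):
    """Return the (identity, style) parts of a latent code."""
    return code[:IDENTITY_DIMS], code[IDENTITY_DIMS:]

def restyle(photo_code, style_code):
    """Keep the identity part of photo_code, take the style part from style_code."""
    identity, _ = split_latent(photo_code)
    _, style = split_latent(style_code)
    return identity + style

photo_code = [0.8, -0.3, 0.5, 0.1, 0.0, 0.2, -0.1]   # hypothetical encoding of a photo
cartoon_code = [0.0, 0.0, 0.0, 0.0, 0.9, 0.7, 0.6]   # hypothetical stylized reference

character_code = restyle(photo_code, cartoon_code)
```

The resulting code keeps the photo's identity entries untouched while every style entry comes from the stylized reference.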

The hybrid geo-generative approach combines the structural accuracy of geometric networks with the creative capabilities of generative networks to produce characters that balance artistic style with personal likeness.

Geometric deep learning handles identity-preserving mesh construction, keeping your facial topology mathematically consistent with your photograph. Generative AI then applies Pixar-style textures, colors, and surface treatments to this base mesh, adding:

- Glossy skin

- Simplified shading

- Vibrant color palette characteristic of Pixar films

Facial Landmark Detection at Sub-Pixel Accuracy

Threedium's system ensures better identity preservation through a facial landmark detection stage that identifies and tracks hundreds of control points across your face before 3D reconstruction begins. The AI maps key anatomical features as precise coordinates:

- Inner and outer corners of your eyes

- Tip and wings of your nose

- Cupid's bow of your upper lip

- Prominence of your cheekbones

Operating at sub-pixel accuracy, landmark detection captures fine details that distinguish your face from others with similar general features. You share basic facial proportions with thousands of other people, yet millimeter-scale variations in these features create your unique appearance.

The AI detects these micro-variations by analyzing gradient changes, edge contrasts, and texture patterns in your photo at a precision finer than a single pixel.
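
A common, generic way to locate a feature with sub-pixel precision is to fit a parabola through the strongest detector response and its two neighbors. The sketch below shows that standard refinement on a 1D response. It is not a description of Threedium's detector, and the sample response is synthetic.

```python
def subpixel_peak(response):
    """Refine the integer argmax of a 1D response with a three-point parabola fit."""
    i = max(range(len(response)), key=response.__getitem__)
    if i == 0 or i == len(response) - 1:
        return float(i)  # no neighbor on one side, keep the integer position
    left, center, right = response[i - 1], response[i], response[i + 1]
    denom = left - 2.0 * center + right
    if denom == 0:
        return float(i)  # flat neighborhood, nothing to refine
    # Vertex of the parabola through the three samples, relative to index i.
    return i + 0.5 * (left - right) / denom

# Synthetic response whose true peak lies between pixels, at x = 3.3.
response = [-(x - 3.3) ** 2 for x in range(7)]
peak = subpixel_peak(response)
```

The integer argmax alone would report pixel 3, while the parabola fit recovers the fractional position of the underlying peak.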

Monocular Reconstruction from Single Photos

Threedium's AI achieves better identity preservation from a single photo by employing photogrammetry principles adapted for monocular reconstruction, inferring three-dimensional structure from:

- Lighting cues

- Facial symmetry assumptions

- Learned priors from training on diverse facial datasets

Classic photogrammetry requires multiple photographs from different angles to triangulate 3D positions accurately, but this multi-image requirement proves impractical for casual users seeking quick avatar generation.

| Reconstruction Element | Traditional Method | Threedium's Approach |

|---|---|---|

| Input Requirements | Multiple photos from different angles | Single frontal photo |

| Depth Estimation | Triangulation between viewpoints | Shadow analysis and lighting cues |

| Missing Information | Direct measurement | AI inference from learned patterns |

During training, the neural network is exposed to millions of face photos paired with accurate 3D scans, which teaches it to predict unseen facial geometry from visible features.
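
Of the cues named in this section, the facial symmetry assumption is the easiest to illustrate: a landmark hidden on one side can be approximated by reflecting its visible counterpart across the face's midplane. The sketch assumes the midplane is x = 0 and uses hypothetical landmark names and coordinates. Real faces are not perfectly symmetric, so this gives only a starting estimate.

```python
def mirror_fill(points, pairs, axis_x=0.0):
    """Fill missing landmarks by reflecting the visible twin across the plane x = axis_x."""
    filled = dict(points)
    for left_name, right_name in pairs:
        for known, missing in ((left_name, right_name), (right_name, left_name)):
            if filled.get(missing) is None and filled.get(known) is not None:
                x, y, z = filled[known]
                filled[missing] = (2.0 * axis_x - x, y, z)
    return filled

# Hypothetical partial reconstruction in millimeters; None marks an unseen landmark.
partial = {
    "left_ear": (-75.0, 10.0, -90.0),
    "right_ear": None,
    "left_jaw": None,
    "right_jaw": (55.0, -60.0, -40.0),
}
pairs = [("left_ear", "right_ear"), ("left_jaw", "right_jaw")]

completed = mirror_fill(partial, pairs)
```

A learned prior would then adjust these mirrored estimates, since the reflection only encodes what symmetry alone can supply.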

Topology-Consistent Mesh Construction

Your facial topology remains consistent throughout the stylization process because the base 3D mesh construction phase establishes a vertex-edge-face structure that mirrors your actual facial geometry before Pixar-style modifications occur.

Topology in 3D modeling refers to the fundamental arrangement of mesh elements: how vertices connect through edges to form faces, and how these faces flow across the surface to define shape.
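
The vertex-edge-face arrangement described above maps directly onto a simple data structure: faces stored as lists of vertex indices, with edges derived from them. The sketch below uses a cube as a stand-in for a closed quad mesh and checks Euler's formula, V - E + F = 2, which holds for any closed surface without holes.

```python
def edges_from_faces(faces):
    """Collect the unique undirected edges of a polygon mesh from its face index lists."""
    edges = set()
    for face in faces:
        for i, a in enumerate(face):
            b = face[(i + 1) % len(face)]  # next vertex, wrapping around the face
            edges.add((min(a, b), max(a, b)))
    return edges

# A cube as six quads over eight vertex indices (0-3 bottom ring, 4-7 top ring).
cube_faces = [
    (0, 1, 2, 3), (4, 5, 6, 7),
    (0, 1, 5, 4), (1, 2, 6, 5),
    (2, 3, 7, 6), (3, 0, 4, 7),
]

vertex_count = len({index for face in cube_faces for index in face})
edge_count = len(edges_from_faces(cube_faces))
face_count = len(cube_faces)
euler_characteristic = vertex_count - edge_count + face_count
```

Moving vertices changes the shape but leaves the face lists, and therefore the topology, exactly as they were.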

Preserving your facial topology means the AI maintains the core structural layout of your face, positioning edge loops around your:

- Eyes

- Mouth

- Nose

These patterns match your unique feature placement. Your character moves and deforms naturally because the underlying mesh structure follows your actual facial anatomy.

The mesh construction algorithm optimizes vertex placement to capture your distinctive facial contours while maintaining clean quad-based topology suitable for animation and rendering. This intelligent vertex distribution:

- Concentrates detail in areas of high curvature

- Uses fewer vertices across flatter regions

- Maintains manageable polygon count for real-time rendering
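
The vertex distribution described in this list can be sketched as a budget split: each facial region receives a minimum number of vertices, and the remainder is shared in proportion to curvature. The region names, integer curvature weights, and budget are hypothetical values chosen for illustration.

```python
def allocate_vertices(curvature_weights, budget, minimum=4):
    """Split a vertex budget across regions in proportion to curvature, with a floor."""
    floor_total = minimum * len(curvature_weights)
    if budget < floor_total:
        raise ValueError("budget too small for the per-region minimum")
    total_weight = sum(curvature_weights.values())
    if total_weight == 0:
        return {region: minimum for region in curvature_weights}
    spare = budget - floor_total
    # Integer division keeps the total at or below the budget.
    return {
        region: minimum + spare * weight // total_weight
        for region, weight in curvature_weights.items()
    }

# Hypothetical relative curvature per region (higher means more tightly curved).
regions = {"nose": 9, "lips": 7, "eyelids": 8, "forehead": 1, "cheek": 2}

allocation = allocate_vertices(regions, budget=500)
total_used = sum(allocation.values())
```

High-curvature regions such as the nose end up with many more vertices than the forehead, while the total stays within the budget set for real-time rendering.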

Controlled Style Transfer Without Structure Loss

You maintain recognizability through Pixar-style transformation because the textural and style application stage operates as a controlled modification layer that adjusts surface appearance without altering underlying facial structure.

The AI applies Pixar's characteristic elements as texture maps and material properties:

- Smooth shading

- Simplified color palettes

- Glossy surface treatments

These sit on top of your identity-preserving base mesh. You receive a character with large, expressive eyes and a rounded face shape, but these stylistic choices are applied as modifications to specific facial features rather than as a complete replacement of your facial structure.

The style transfer process analyzes Pixar's visual language as a set of geometric and material transformations that apply to any base face topology without destroying identity information.
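
A geometric style transformation of this kind can be pictured as moving vertices while leaving the face index lists alone. The sketch below enlarges a hypothetical eye region by scaling its vertices about their centroid. The mesh, the region, and the scale factor are illustrative assumptions.

```python
def scale_region(vertices, region, factor):
    """Scale the listed vertices about their centroid; all other vertices stay put."""
    count = len(region)
    cx = sum(vertices[i][0] for i in region) / count
    cy = sum(vertices[i][1] for i in region) / count
    cz = sum(vertices[i][2] for i in region) / count
    styled = list(vertices)
    for i in region:
        x, y, z = vertices[i]
        styled[i] = (
            cx + (x - cx) * factor,
            cy + (y - cy) * factor,
            cz + (z - cz) * factor,
        )
    return styled

# Toy mesh: indices 0-3 outline an eye, index 4 is a chin vertex.
vertices = [
    (-1.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0), (-1.0, 1.0, 0.0),
    (0.0, -3.0, 0.5),
]
faces = [(0, 1, 2, 3), (0, 1, 4)]
eye_region = [0, 1, 2, 3]

styled_vertices = scale_region(vertices, eye_region, factor=1.5)
```

The eye grows by 50 percent around the same center, the chin vertex does not move, and `faces` is never touched, so the connectivity is identical before and after styling.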

Multi-Pathway Neural Architecture

Your identity persists through the generation process because Threedium's neural network architecture includes dedicated identity-encoding pathways that remain active throughout all transformation stages.

| Network Component | Primary Function | Identity Preservation Role |

|---|---|---|

| Identity-Encoding Layers | Extract unique facial characteristics | Create mathematical constraints |

| Style Application Layers | Apply Pixar aesthetic | Respect identity constraints |

| Multi-Scale Processing | Handle various detail levels | Maintain consistency across scales |

The AI processes your photo through multiple network layers, with some layers specifically tasked with extracting and preserving identity features while others handle stylistic transformations.

The identity-encoding pathway extracts features at multiple scales, capturing both overall proportions of your face and fine details that contribute to recognition:

- Broad facial structure

- Mid-level features like nose shape and mouth width

- Fine details like eyebrow texture and skin tone

Superiority Over Template Morphing

Threedium's approach offers superior identity preservation compared to template-based avatar systems that morph a predefined cartoon face model toward your photo through parameter adjustment.

Template systems begin with a generic Pixar-style character and modify sliders controlling:

- Eye size

- Nose width

- Mouth shape

- Other features

You lose identity fidelity through this approach because the template's underlying structure constrains which facial configurations the system can represent.

Threedium's mesh construction approach builds your character's topology from your actual facial structure rather than deforming a generic template, so the system can represent the facial configuration present in your photo without being limited by a template's parameter ranges.

Template-based systems struggle with feature combinations that fall outside their parameter ranges:

- Narrow face with wide-set eyes

- Small nose with full lips

- Unusual or distinctive feature combinations
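
The limitation described above can be shown with a toy template whose sliders have fixed ranges. Any measurement outside a range is clamped to the nearest limit, and the clamping error is likeness lost. The slider names, ranges, and measurements below are hypothetical.

```python
# Hypothetical slider ranges for a template avatar system (normalized units).
SLIDER_RANGES = {
    "eye_spacing": (0.40, 0.50),
    "face_width": (0.70, 0.90),
    "nose_width": (0.20, 0.30),
}

def fit_template(measured):
    """Clamp each measured proportion into the template's slider range."""
    fitted = {}
    for name, value in measured.items():
        low, high = SLIDER_RANGES[name]
        fitted[name] = min(max(value, low), high)
    return fitted

def fit_error(measured, fitted):
    """Absolute difference between what was measured and what the template can show."""
    return {name: abs(measured[name] - fitted[name]) for name in measured}

# A narrow face with wide-set eyes: two values fall outside the slider ranges.
measured = {"eye_spacing": 0.56, "face_width": 0.66, "nose_width": 0.25}

fitted = fit_template(measured)
errors = fit_error(measured, fitted)
```

The nose width fits the template exactly, but the eye spacing and face width are pulled back toward the template's limits, which is how a distinctive face drifts toward a generic one.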

Color and Material Fidelity

Your identity remains recognizable because the texture application stage maintains your skin tone, hair color, and other identifying characteristics from your photo while translating them into Pixar's stylized rendering aesthetic.

The AI analyzes your photo to extract your actual coloring as RGB values and material properties, then applies Pixar's characteristic color saturation boost and simplified shading model to these extracted values rather than replacing them with generic cartoon colors.
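
A saturation boost that keeps the extracted color recognizable can be sketched with the standard library's `colorsys` module: convert to HSV, raise saturation, and convert back, leaving hue and brightness alone. The gain value and the sampled color are assumptions for illustration, not values taken from Threedium's pipeline.

```python
import colorsys

def stylize_color(rgb, saturation_gain=1.3):
    """Boost the saturation of an 8-bit RGB color while keeping hue and brightness."""
    r, g, b = (channel / 255.0 for channel in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    s = min(1.0, s * saturation_gain)  # cap at full saturation
    r, g, b = colorsys.hsv_to_rgb(h, s, v)
    return tuple(round(channel * 255) for channel in (r, g, b))

def hue_and_saturation(rgb):
    """Helper for inspecting a color: returns (hue, saturation) in the 0-1 range."""
    h, s, _ = colorsys.rgb_to_hsv(*(channel / 255.0 for channel in rgb))
    return h, s

auburn = (145, 75, 50)  # hypothetical hair color sampled from a photo
styled = stylize_color(auburn)

original_hue, original_sat = hue_and_saturation(auburn)
styled_hue, styled_sat = hue_and_saturation(styled)
```

The styled color is more vivid than the sample but sits at essentially the same hue, so auburn hair stays auburn rather than being replaced by a stock cartoon color.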

You receive a character with your:

- Brown eyes

- Auburn hair

- Medium skin tone

These are rendered in Pixar's glossy, vibrant style rather than a generic character with arbitrary coloring that bears no relationship to your appearance.

This color preservation proves crucial for identity recognition because humans rely heavily on coloring cues to identify faces, especially when viewing stylized representations that simplify geometric details.

The material property translation process converts photographic surface appearance into a physically based rendering model in Pixar's style while maintaining the relative differences that distinguish your coloring from others. Because the same color transformation is applied consistently across the image, those relative relationships survive, and your Pixar-style character exhibits the same color harmony as your photo even though absolute color values shift toward the more intense, stylized hues characteristic of computer-animated films.