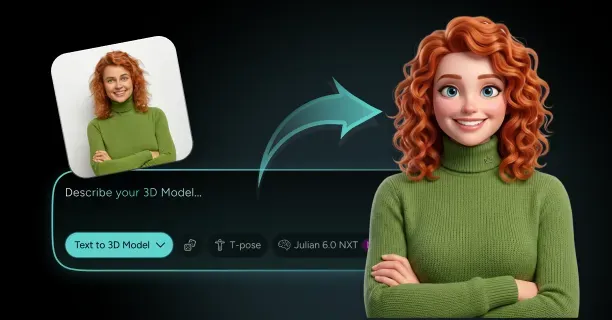

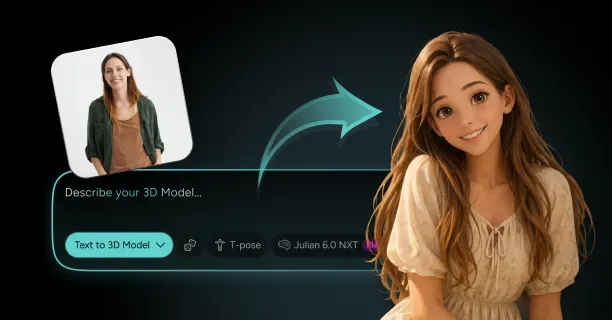

How Do You Turn Yourself Into a Ghibli-Style 3D Character From a Photo?

You turn yourself into a Ghibli-style 3D character by uploading a high-resolution frontal photo (minimum 1920×1080 pixels) to Threedium's web-based Julian NXT reconstruction platform above. Its neural networks analyze your facial geometry, apply transformation rules derived from Studio Ghibli's aesthetic, and generate a textured 3D mesh. Julian NXT, Threedium's proprietary technology, algorithmically replicates the painterly backgrounds, soft character designs, and whimsical visual language pioneered by Japanese animator and director Hayao Miyazaki (born January 5, 1941), who co-founded Studio Ghibli in June 1985 with director Isao Takahata and producer Toshio Suzuki. The studio is now headquartered in Koganei, Tokyo. Your character aims to capture the nostalgic emotional resonance of films like Spirited Away (2001; Japanese: 千と千尋の神隠し, Sen to Chihiro no Kamikakushi), which grossed $395 million worldwide and won Best Animated Feature at the 75th Academy Awards on March 23, 2003.

Ghibli Aesthetic Foundations

Studio Ghibli's visual identity derives from traditional cel animation, a technique developed in the 1910s-1920s and refined throughout the 20th century, in which artists hand-ink and paint characters on transparent acetate sheets (animation cels) and layer them over hand-painted backgrounds to create an illusion of depth. The resulting Ghibli style is characterized by:

- Lush textured environments with painterly brushwork

- Soft skin tone gradients

- Oversized expressive eyes, roughly 15-20 percent larger than realistic human proportions

- Simplified facial features with minimal detail lines

- Color palettes dominated by earth tones, pastels, and muted greens

Together, these visual elements reinforce the themes that recur across Studio Ghibli's 23 feature-length animated films produced between 1986 and 2023: nature preservation, childhood innocence, and pacifism.

| Color Category | Examples | Hex Codes |
|---|---|---|
| Earth Tones | Burnt sienna, ochre, sage green | #E97451 (burnt sienna), #CC7722 (ochre) |
| Pastels | Peach, lavender, sky blue | Various |
| Muted Greens | Olive, moss, seafoam | Various |

Your source photograph needs enough facial detail for Threedium's proprietary reconstruction algorithms, which combine convolutional neural networks (CNNs) with depth estimation models, to transfer these stylistic elements onto your underlying facial structure through UV projection while preserving your distinctive identifying features.

Photo Specifications for Best Results

Upload source images that exceed the 1920×1080 (Full HD) minimum. The extra detail gives Threedium's convolutional neural networks (CNNs), trained on facial recognition datasets of 10,000+ annotated face images, enough information for geometry extraction and stylization.

Critical Requirements:

Resolution Standards:

- Minimum: 1920×1080 pixels (Full HD)
- Avoid: anything below 1280×720 pixels (causes pixelated texture maps)
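As a minimal sketch, the resolution thresholds above can be expressed as a pre-upload check. The function name and its return labels are hypothetical (not part of Threedium's platform), and treating portrait and landscape orientations alike is an assumption.

```python
# Hypothetical pre-upload check for the resolution thresholds described above.
# Assumption: orientation does not matter, so 1080x1920 counts like 1920x1080.

MIN_RECOMMENDED = (1920, 1080)  # Full HD minimum
AVOID_BELOW = (1280, 720)       # below this, texture maps tend to pixelate


def classify_resolution(width: int, height: int) -> str:
    """Return "ok", "below_minimum", or "avoid" for a photo's pixel size."""
    if width <= 0 or height <= 0:
        raise ValueError("dimensions must be positive")
    long_side, short_side = max(width, height), min(width, height)
    if long_side >= MIN_RECOMMENDED[0] and short_side >= MIN_RECOMMENDED[1]:
        return "ok"
    if long_side < AVOID_BELOW[0] or short_side < AVOID_BELOW[1]:
        return "avoid"
    return "below_minimum"


print(classify_resolution(4032, 3024))  # prints "ok" (a typical 12 MP phone photo)
```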

Composition Guidelines:

- Use a frontal composition shot from a straight-on angle
- Maintain direct eye contact with the camera lens
- The generator then maps your face to Studio Ghibli's stylized facial proportion ratios:
  - Eye width: 28-32% of face width
  - Nose length: 18-22% of face height
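The eye-width ratio above can be measured from detected landmarks. This sketch assumes the 68-point iBUG 300-W indexing used by dlib's shape predictor and approximates face width by the distance between the two jawline endpoints (points 0 and 16); both that face-width definition and the helper names are assumptions, not Threedium's documented method. Nose length is left out because the 68-point scheme has no hairline landmark for measuring full face height.

```python
import math

Point = tuple[float, float]

# 0-based indices in the 68-point iBUG 300-W landmark scheme.
JAW_START, JAW_END = 0, 16     # jawline endpoints, used here as a face-width proxy (assumption)
EYE_OUTER, EYE_INNER = 36, 39  # outer and inner corners of one eye


def distance(a: Point, b: Point) -> float:
    return math.hypot(a[0] - b[0], a[1] - b[1])


def eye_width_ratio(landmarks: list[Point]) -> float:
    """Hypothetical helper: eye width divided by face width, from 68 (x, y) landmarks."""
    if len(landmarks) != 68:
        raise ValueError("expected 68 landmarks")
    face_width = distance(landmarks[JAW_START], landmarks[JAW_END])
    if face_width == 0:
        raise ValueError("degenerate face width")
    return distance(landmarks[EYE_OUTER], landmarks[EYE_INNER]) / face_width


def within_ghibli_eye_range(ratio: float, low: float = 0.28, high: float = 0.32) -> bool:
    """True if the ratio falls inside the 28-32% band quoted above."""
    return low <= ratio <= high
```

The proportion table later in this article puts a realistic face near 25%, so the gap between a photo's measured ratio and the 28-32% band indicates roughly how much enlargement the stylization has to add.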

Preparation Checklist:

- Remove glasses, hats, scarves, and other accessories
- Keep eyebrows, eyelids, nose contours, and mouth shapes unobstructed
- Threedium's Julian NXT facial reconstruction relies on 68-point landmark detection, which requires clearly visible facial features

Lighting Requirements:

Use diffused natural light or soft studio lighting with a color temperature in the optimal range:

- 5000K (daylight) to 6500K (cool daylight)

- Match natural outdoor lighting conditions

- Eliminate harsh shadows under nose, chin, and eye sockets

Strong directional lighting creates high-contrast gradients that Threedium's depth estimation CNN can misinterpret as geometric depth variation rather than lighting artifacts.

AI-Powered Reconstruction Pipeline

Threedium's reconstruction system processes each photo through a multi-stage pipeline:

Facial Landmark Detection:

- Localizes 68 facial landmarks using the 68-point scheme implemented in the dlib C++ library
- Identifies inner and outer eye corners, nose tip, mouth corners, jawline boundaries, and eyebrow arches

3D Mesh Construction:

- Builds a preliminary 3D mesh through Delaunay triangulation
- Generates a polygonal surface of approximately 8,000-12,000 vertices
- Refines geometric accuracy with depth estimation CNNs

Depth Estimation Processing:

- Trained on facial depth datasets such as 300W-LP and AFLW2000-3D
- Estimates each facial region's Z-axis distance from the camera plane
- Converts the 2D photograph into a geometrically accurate three-dimensional mesh
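The source does not say how estimated depth becomes 3D geometry. One standard approach, shown here as a sketch, back-projects each pixel through a pinhole camera model: with focal lengths `fx`, `fy` and principal point `cx`, `cy` (all in pixels), a pixel `(u, v)` at depth `Z` maps to `X = (u - cx) * Z / fx` and `Y = (v - cy) * Z / fy`. The camera intrinsics and the `PinholeCamera` type are assumptions for illustration.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass(frozen=True)
class PinholeCamera:
    """Hypothetical camera intrinsics, in pixels."""
    fx: float
    fy: float
    cx: float
    cy: float


def back_project(u: float, v: float, depth: float, cam: PinholeCamera) -> Vec3:
    """Lift pixel (u, v) with estimated depth Z into camera space (X, Y, Z).

    Image y grows downward, so Y keeps that convention here.
    """
    x = (u - cam.cx) * depth / cam.fx
    y = (v - cam.cy) * depth / cam.fy
    return (x, y, depth)


def lift_points(points_2d: list[tuple[float, float]], depths: list[float],
                cam: PinholeCamera) -> list[Vec3]:
    """Back-project landmarks (or mesh vertices) using per-point depth estimates."""
    if len(points_2d) != len(depths):
        raise ValueError("need one depth value per point")
    return [back_project(u, v, z, cam) for (u, v), z in zip(points_2d, depths)]
```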

Ghibli Stylization Module:

The stylization module applies transformation rules derived from machine-learning analysis of Studio Ghibli productions (1985-2023):

| Transformation Type | Adjustment Range | Purpose |
|---|---|---|
| Eye diameter enlargement | 15-25 percent | Signature Ghibli look |
| Nose bridge width reduction | 10-15 percent | Delicate appearance |
| Cheek surface softening | Gaussian blur radius 3-5 pixels | Smooth texture |
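The eye-diameter enlargement in the table can be sketched as a uniform scale of an eye's contour points about their centroid: scaling by `1 + p/100` enlarges every diameter by `p` percent. Treating the eye as a 2D landmark contour and scaling about its centroid are assumptions; a production tool would also need to blend the surrounding geometry, which this sketch does not attempt.

```python
Point = tuple[float, float]


def enlarge_eye(contour: list[Point], percent: float) -> list[Point]:
    """Hypothetical helper: scale an eye contour about its centroid by `percent`."""
    if not contour:
        raise ValueError("contour must contain at least one point")
    if percent < 0:
        raise ValueError("percent must be non-negative")
    n = len(contour)
    cx = sum(x for x, _ in contour) / n
    cy = sum(y for _, y in contour) / n
    scale = 1.0 + percent / 100.0  # e.g. 20 percent -> 1.2x diameter
    return [(cx + (x - cx) * scale, cy + (y - cy) * scale) for x, y in contour]


# Six-point eye contour in the 68-point landmark order (points 36-41).
eye = [(0.0, 1.0), (1.0, 0.4), (2.0, 0.4), (3.0, 1.0), (2.0, 1.6), (1.0, 1.6)]
bigger = enlarge_eye(eye, 20)  # within the 15-25 percent range in the table
```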

Texture Synthesis and Material Configuration

The generator procedurally generates skin textures by:

Color Extraction Process:

- Extracts RGB color values from the uploaded source photograph
- Projects them onto the 3D mesh through UV unwrapping
- Uses a 2048×2048 pixel texture resolution (a power-of-two size)

Ghibli-Specific Shader Implementation:

- Reduces surface detail by filtering out pore-level texture smaller than 0.5 millimeters
- Softens gradient transitions toward silhouette edges using a Fresnel (view-angle) term
- Sets PBR roughness between 0.7 and 0.9 for a matte finish
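The source does not specify which Fresnel formulation the shader uses. A common choice in real-time rendering is Schlick's approximation, `F = F0 + (1 - F0) * (1 - cos θ)^5`, where θ is the angle between the surface normal and the view direction. The sketch below uses it to blend a soft rim tone toward silhouette edges; the `soft_rim` helper, its `strength` parameter, and the default F0 of 0.04 (a typical non-metal value) are assumptions.

```python
RGB = tuple[float, float, float]


def schlick_fresnel(cos_theta: float, f0: float = 0.04) -> float:
    """Schlick's approximation of Fresnel reflectance.

    cos_theta is the cosine between surface normal and view direction:
    1.0 when the surface faces the camera, 0.0 at a grazing silhouette edge.
    """
    c = min(max(cos_theta, 0.0), 1.0)
    return f0 + (1.0 - f0) * (1.0 - c) ** 5


def soft_rim(base: RGB, rim: RGB, cos_theta: float, strength: float = 0.5) -> RGB:
    """Hypothetical helper: lerp the base color toward a rim tone near edges."""
    t = strength * schlick_fresnel(cos_theta)
    return tuple(b + (r - b) * t for b, r in zip(base, rim))
```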

Hair Reconstruction Process:

- Builds a low-polygon hair mesh of 200-500 polygons
- Distributes broad color regions across 3-6 hues
- Places painted highlight streaks in the upper 30 percent of the hair volume

Together, these steps digitally replicate the techniques of the hand-painted animation cels created for Spirited Away (2001), Studio Ghibli's Academy Award-winning film.

Facial Feature Adjustment Controls

Threedium's web-based character generation tool provides interactive stylization sliders that control how aggressively each transformation is applied:

Eye Size Adjustment Parameters:

- Female characters average: 18 percent enlargement (Chihiro, Sophie, San, Kiki)
- Male characters average: 15 percent enlargement (Ashitaka, Howl, Haku)
- Adjustment range: 10-25 percent diameter increase

Facial Softness Parameters:

- Edge sharpness: blur radius settings of 1-5 pixels
- Geometric simplification: polygon reduction from 12,000 to 6,000-8,000 vertices
- Balance: keeps the result between recognizable portraiture and stylistic abstraction
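The blur-radius setting above can be illustrated with a separable Gaussian blur: a normalized 1D kernel is applied along rows and then along columns. This sketch shows only the 1D pass; choosing sigma as half the radius and clamping indices at the image edges are assumptions, not documented Threedium behavior.

```python
import math
from typing import Optional


def gaussian_kernel(radius: int, sigma: Optional[float] = None) -> list[float]:
    """Normalized 1D Gaussian weights for offsets -radius..radius."""
    if radius < 1:
        raise ValueError("radius must be at least 1 pixel")
    if sigma is None:
        sigma = radius / 2.0  # assumption: kernel spans about two sigmas on each side
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma)) for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_row(row: list[float], radius: int) -> list[float]:
    """Blur one row of pixel values, clamping sample indices at the edges."""
    kernel = gaussian_kernel(radius)
    last = len(row) - 1
    out = []
    for i in range(len(row)):
        acc = 0.0
        for j, w in enumerate(kernel):
            idx = min(max(i + j - radius, 0), last)
            acc += w * row[idx]
        out.append(acc)
    return out
```

Running `blur_row` over every row and then over every column of the result gives the full 2D blur at a cost proportional to the radius rather than its square.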

Color Palette Selection Options:

"Totoro Forest" (inspired by My Neighbor Totoro 1988) - Moss green, bark brown, cream

"Spirited Bathhouse" (inspired by Spirited Away 2001) - Crimson, gold, deep purple

"Howl's Castle" (inspired by Howl's Moving Castle 2004) - Steel blue, copper, charcoal

Custom Palette Creation:

- Submit a reference frame from a specific Ghibli film
- A k-means clustering algorithm derives the frame's color scheme
- The result isolates 8-12 dominant colors, ordered by how frequently they occur
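The custom-palette step can be sketched as plain k-means over RGB values, seeded with k-means++ so the initial centers land on distinct colors. This is a generic illustration of the named technique, not Threedium's implementation; the function names, iteration cap, and seed are assumptions. Loading pixels from a frame would need an image library (for example, Pillow's `Image.getdata()`), which is left out to keep the sketch self-contained.

```python
import random

RGB = tuple[int, int, int]


def _dist2(a, b) -> float:
    """Squared Euclidean distance between two colors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def _nearest(p, centroids) -> int:
    return min(range(len(centroids)), key=lambda c: _dist2(p, centroids[c]))


def _init_plus_plus(pixels, k, rng):
    """k-means++ seeding: pick new centers with probability proportional to D^2."""
    centroids = [tuple(map(float, rng.choice(pixels)))]
    while len(centroids) < k:
        d2 = [min(_dist2(p, c) for c in centroids) for p in pixels]
        if sum(d2) == 0:  # every pixel already coincides with a center
            centroids.append(tuple(map(float, rng.choice(pixels))))
        else:
            centroids.append(tuple(map(float, rng.choices(pixels, weights=d2)[0])))
    return centroids


def kmeans_palette(pixels: list[RGB], k: int, iterations: int = 25, seed: int = 0) -> list[RGB]:
    """Hypothetical helper: return k dominant colors, most frequent first."""
    if not 1 <= k <= len(pixels):
        raise ValueError("k must be between 1 and the number of pixels")
    rng = random.Random(seed)
    centroids = _init_plus_plus(pixels, k, rng)
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in pixels:
            clusters[_nearest(p, centroids)].append(p)
        # Move each center to its cluster mean; keep empty clusters where they are.
        updated = [
            tuple(sum(ch) / len(m) for ch in zip(*m)) if m else centroids[i]
            for i, m in enumerate(clusters)
        ]
        if updated == centroids:
            break
        centroids = updated
    counts = [0] * k
    for p in pixels:
        counts[_nearest(p, centroids)] += 1
    order = sorted(range(k), key=lambda i: counts[i], reverse=True)
    return [tuple(round(v) for v in centroids[i]) for i in order]
```

Because k-means converges to a local optimum, results can vary with the seed; fixing the seed keeps a palette reproducible for the same frame.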

Expression Customization Parameters:

| Feature | Adjustment Range | Emotional Range |
|---|---|---|
| Mouth curvature | ±15 degrees | Serene to wonder |
| Eyebrow angle | ±20 degrees | Various expressions |
| Eyelid position | 2-4 millimeters | Eye expression control |

Geometry Optimization for Real-Time Rendering

Threedium exports the user's generated Ghibli-style character as a quad-dominant mesh with optimized specifications:

Performance Specifications:

- Polygon count range: 8,000-15,000 triangles
- Target framerate: 60 fps on mid-range gaming hardware (2023-2024)
- Compatible GPUs: NVIDIA GTX 1660, RTX 3060, AMD RX 6600

Clean Edge Loop Construction:

- Eyes: 16-20 loops
- Mouth: 12-16 loops
- Nose: 8-12 loops

These edge loops enable professional-quality blend shape animation, which depends on clean topology to deform well.

LOD (Level of Detail) System:

- LOD0: 12,000 triangles (close-up views, 0-5 meters)

- LOD1: 6,000 triangles (medium distance, 5-15 meters)

- LOD2: 3,000 triangles (background characters, 15-30 meters)

- LOD3: 1,500 triangles (distant crowd scenes, beyond 30 meters)
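The LOD bands above translate directly into a distance lookup. In this sketch, a distance exactly on a boundary (for example 5 meters) stays in the nearer, more detailed level; that tie-breaking rule and the function name are assumptions, since the list does not specify them.

```python
import math

# (max distance in meters, level name, triangle budget), from the list above.
LOD_TABLE = [
    (5.0, "LOD0", 12_000),
    (15.0, "LOD1", 6_000),
    (30.0, "LOD2", 3_000),
    (math.inf, "LOD3", 1_500),
]


def select_lod(distance_m: float) -> tuple[str, int]:
    """Hypothetical helper: pick the LOD level for a camera-to-character distance."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    for max_distance, name, triangles in LOD_TABLE:
        if distance_m <= max_distance:
            return name, triangles
    raise AssertionError("unreachable: the last band is unbounded")
```

Engines often choose LODs differently. Unity's `LODGroup`, for instance, switches on the object's screen-relative height rather than raw distance, so these meter bands would need converting for a specific camera setup.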

Export Format Specifications:

Threedium outputs 3D models in glTF 2.0 (GL Transmission Format) containing:

- Base color maps: 2048×2048 pixels resolution

- Normal maps: 1024×1024 pixels resolution for surface detail simulation

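- Roughness maps: 1024×1024 pixels resolution controlling how glossy or matte each surface region appears (glTF 2.0 stores roughness in the green channel of its metallic-roughness texture)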

Cross-Platform Compatibility:

- Threedium 3.0 and higher versions
- Unity 2021 and higher versions
- Unreal Engine 5 and higher versions
- Three.js applications
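For orientation, here is roughly how the three texture maps can be wired into a glTF 2.0 material. In glTF 2.0 the base color and normal maps are referenced directly, while roughness lives in the green channel of `metallicRoughnessTexture` (metalness uses the blue channel) and is multiplied by `roughnessFactor`. The file names and material name are hypothetical, and the output is only a fragment: a complete asset also needs meshes, nodes, and a scene.

```python
import json


def gltf_material_fragment(base_color_uri: str, normal_uri: str,
                           metallic_roughness_uri: str,
                           roughness_factor: float = 1.0) -> dict:
    """Hypothetical helper: minimal glTF 2.0 JSON with one textured PBR material."""
    return {
        "asset": {"version": "2.0"},
        "images": [
            {"uri": base_color_uri},
            {"uri": normal_uri},
            {"uri": metallic_roughness_uri},
        ],
        # Each texture points at an image by index.
        "textures": [{"source": 0}, {"source": 1}, {"source": 2}],
        "materials": [{
            "name": "ghibli_skin",
            "pbrMetallicRoughness": {
                "baseColorTexture": {"index": 0},
                # Roughness = roughnessFactor * green channel of this texture.
                "metallicRoughnessTexture": {"index": 2},
                "metallicFactor": 0.0,  # skin and fabric are non-metals
                "roughnessFactor": roughness_factor,
            },
            "normalTexture": {"index": 1},
        }],
    }


doc = gltf_material_fragment("base_color_2048.png", "normal_1024.png", "metal_rough_1024.png")
print(json.dumps(doc, indent=2))
```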

Stylistic Fidelity to Ghibli's Visual Language

The user's AI-generated 3D character preserves stylistic consistency with Studio Ghibli's visual language through proportion relationships derived from statistical analysis of Studio Ghibli character designs across 23 feature films (1986-2023):

Proportion Relationships:

| Feature | Ghibli Proportion | Realistic Human Proportion | Effect |
|---|---|---|---|
| Eye width | 28-32% of face width | 25% of face width | Signature enlarged eye look |
| Nose length | 18-22% of face height | 25-28% of face height | Delicate appearance |
| Mouth width | 38-42% of face width | 45-50% of face width | Refined aesthetic |

Design Principles Integration:

Threedium's transformation AI engine incorporates design principles from acclaimed works:

- Princess Mononoke (1997): Epic fantasy, grossed $169 million worldwide

- Kiki's Delivery Service (1989): Coming-of-age fantasy based on Eiko Kadono's novel

- My Neighbor Totoro (1988): Fantasy featuring iconic forest spirit Totoro

Authentic Hand-Drawn Qualities:

The transformation process intentionally adds subtle imperfections that replicate human touch:

- Asymmetric eye heights varying by 1-2 pixels

- Irregular hairline boundaries

- Authentic aesthetic qualities of traditional hand-drawn animation

The transformation process maintains the user's distinctive identifying facial characteristics while applying stylistic transformation via Ghibli's aesthetic lens, enabling visual identification of the original subject within the whimsical enchanting world Hayao Miyazaki and Studio Ghibli established.

Studio Ghibli Legacy:

- Founded: June 1985
- Production span: 1985-2023
- Feature films produced: 23
- Academy Award winners: Spirited Away (2003), The Boy and the Heron (2024)
- Total worldwide gross: over 1 billion dollars
- Hayao Miyazaki recognition: Academy Honorary Award recipient (2014)

Which Facial Softness, Outfit Details, and Companion Elements Make a Ghibli-Style Version of Yourself Feel Right?

Vertex Normal Manipulation

Facial softness, outfit details, and companion elements that make a Ghibli-style version of yourself feel right include vertex normal manipulation for smooth facial contours, broad clothing folds, and simplified companion animal proportions with exaggerated head-to-body ratios.

Artists achieve Ghibli-style facial softness through vertex normal manipulation, a technique that governs how light reflects across polygon surfaces. A vertex normal is a unit vector stored at each vertex of the 3D mesh, usually averaged from the normals of the surrounding faces, that defines the surface orientation used in lighting calculations.

By adjusting vertex normals, artists make light transition smoothly across adjacent polygon faces, creating the rounded contours characteristic of character designs by Hayao Miyazaki, Studio Ghibli's co-founder, without increasing geometry density.

Vertex normal manipulation generates organic curves on low-polygon meshes (typically 5,000-8,000 triangles for facial geometry), reducing rendering overhead by 40-60% compared to subdivision surface approaches while maintaining the soft aesthetic Studio Ghibli established in films like Spirited Away (2001) and My Neighbor Totoro (1988).
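A common way to compute smooth vertex normals is to sum the normals of the faces around each vertex and normalize the result. Using unnormalized cross products weights each face by its area; that weighting is one standard choice among several (angle weighting is another), and this sketch does not claim to match Threedium's pipeline. Triangles are assumed to use counter-clockwise winding so normals face outward.

```python
import math

Vec3 = tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def smooth_vertex_normals(vertices: list[Vec3],
                          triangles: list[tuple[int, int, int]]) -> list[Vec3]:
    """Area-weighted average of adjacent face normals, one unit vector per vertex."""
    sums = [[0.0, 0.0, 0.0] for _ in vertices]
    for i, j, k in triangles:
        # The cross product's length is twice the triangle area, so bigger faces count more.
        face_n = _cross(_sub(vertices[j], vertices[i]), _sub(vertices[k], vertices[i]))
        for idx in (i, j, k):
            for axis in range(3):
                sums[idx][axis] += face_n[axis]
    normals = []
    for sx, sy, sz in sums:
        length = math.sqrt(sx * sx + sy * sy + sz * sz)
        # Vertices not used by any triangle get a zero vector.
        normals.append((sx / length, sy / length, sz / length) if length > 0 else (0.0, 0.0, 0.0))
    return normals
```

On a two-faced "tent", the ridge vertices end up with a normal halfway between the two slopes, which is exactly what makes a hard crease shade as a soft curve.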

Non-Photorealistic Rendering Shaders

Non-photorealistic rendering (NPR) shaders simulate the hand-drawn appearance of traditional cel animation through discrete lighting zones.

Cel-shading algorithms discretize continuous lighting gradients into two to four distinct tonal bands, removing smooth transitions between highlights and shadows.

The cel-shading flattening effect emphasizes silhouette clarity over volumetric depth, preserving the painterly quality that Studio Ghibli co-founder Hayao Miyazaki (born 1941) championed throughout his career.

Threedium's reconstruction pipeline calibrates NPR shader parameters automatically during model generation, applying stepped lighting functions that replicate the tonal distribution observed in reference frames from Studio Ghibli films.
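The band quantization described in this section can be sketched as a stepped Lambert term: compute N·L, clamp it to [0, 1], and snap it to one of `bands` evenly spaced levels. Even spacing and the helper names are assumptions; the text states only that two to four bands are used.

```python
Vec3 = tuple[float, float, float]


def lambert(normal: Vec3, light_dir: Vec3) -> float:
    """N·L for unit vectors (light_dir points toward the light), clamped to [0, 1]."""
    d = sum(n * l for n, l in zip(normal, light_dir))
    return min(max(d, 0.0), 1.0)


def cel_level(n_dot_l: float, bands: int = 3) -> float:
    """Snap a lighting value to one of `bands` flat tones (0.0 = deepest shadow)."""
    if not 2 <= bands <= 4:
        raise ValueError("the technique described above uses two to four bands")
    x = min(max(n_dot_l, 0.0), 1.0)
    step = min(int(x * bands), bands - 1)  # keeps x == 1.0 inside the top band
    return step / (bands - 1)


def shade(shadow_rgb: Vec3, lit_rgb: Vec3, level: float) -> Vec3:
    """Hypothetical helper: interpolate from a painted shadow color to the lit color."""
    return tuple(s + (l - s) * level for s, l in zip(shadow_rgb, lit_rgb))
```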

Hand-Painted Cheek Blush

Artists should apply hand-painted cheek blush textures in warm pink or peach hues to create natural facial warmth:

| Hue | RGB Value |
|---|---|
| Pink | 255/180/180 |
| Peach | 255/200/190 |

Key Application Guidelines:

- Position blush 15-20 millimeters below the zygomatic arch (cheekbone structure)

- Map texture overlays on the character's UV map (2D texture coordinate mapping system used in 3D graphics)

- Apply at 30-50% opacity to enable base skin tones to show through the blush layer
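The 30-50% opacity rule corresponds to standard alpha ("normal" mode) blending of the blush color over each skin texel: `result = skin * (1 - a) + blush * a`. This minimal sketch blends a single texel; a real blush overlay would also fade the opacity toward the edges of the painted area, which is not shown. The function name is hypothetical.

```python
RGB = tuple[int, int, int]


def blend_blush(skin: RGB, blush: RGB, opacity: float) -> RGB:
    """Hypothetical helper: alpha-blend a blush color over a skin texel (8-bit channels)."""
    if not 0.0 <= opacity <= 1.0:
        raise ValueError("opacity must be between 0 and 1")
    return tuple(round(s * (1.0 - opacity) + b * opacity) for s, b in zip(skin, blush))


PEACH = (255, 200, 190)  # peach value from the table above
print(blend_blush((230, 190, 170), PEACH, 0.4))  # prints (240, 194, 178)
```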

The opacity layering technique adds color depth, avoiding the flat look of solid fills while making the Ghibli-style character look more youthful. It replicates the subtle facial coloration Studio Ghibli's color designers gave protagonists like Chihiro Ogino, the 10-year-old heroine of Spirited Away, and Sophie Hatter, the heroine of Howl's Moving Castle (2004), directed by Hayao Miyazaki.

Subsurface Scattering Parameters

Artists calibrate subsurface scattering (SSS) parameters to simulate light penetration through skin layers, creating translucency that differentiates living tissue from opaque materials.

Optimal SSS Configuration:

- Red channel scatter radius: 0.3-0.5 units

- Green channel scatter radius: 0.4-0.6 units

- Blue channel scatter radius: 0.1-0.2 units

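These values loosely reference the wavelength-dependent absorption of melanin (the skin pigment concentrated in the epidermis) and hemoglobin (the oxygen-carrying protein in the blood vessels of the dermis). In physically measured skin, red light scatters farthest, so setting the green radius above the red one is a stylistic choice rather than a physically accurate model.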

The configured SSS scatter radius values generate the soft, fleshy appearance Ghibli characters exhibit while maintaining the matte finish that distinguishes animation style from photorealistic rendering. Threedium's Julian NXT (proprietary 3D reconstruction and rendering technology) evaluates the user's source photograph's skin tone distribution and optimizes the SSS scatter radii automatically.

Enlarged Iris Proportions

Artists enlarge iris diameter to 1.3-1.5 times anatomically correct proportions to achieve the wide-eyed expressiveness Ghibli protagonists exhibit:

- Standard human iris: 10-11 millimeters

- Ghibli-style iris: 13-15 millimeters

Iris Design Elements:

- Simplified iris textures with two to three concentric color bands:
  - Dark outer ring
  - Mid-tone primary color
  - Lighter inner zone

- Prominent specular highlight at 10 o'clock or 2 o'clock position

- Highlight sized at 15-20% of iris diameter

This produces the characteristic "anime sparkle", a catchlight effect common in Japanese animation that communicates emotional openness, as seen in characters drawn by Masashi Ando (born 1969), who served as an animation director on Princess Mononoke and as character designer on Spirited Away.
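The iris rules above reduce to a little geometry. This sketch scales an anatomical iris diameter by the 1.3-1.5 factor and places a catchlight at a clock position. Centering the catchlight halfway between the iris center and its edge, and defaulting its size to 17.5% of the diameter (the midpoint of 15-20%), are assumptions, as are the helper names. Coordinates use y pointing up.

```python
import math


def stylized_iris_diameter(real_mm: float, scale: float) -> float:
    """Enlarge an anatomical iris diameter by the 1.3-1.5x factor described above."""
    if not 1.3 <= scale <= 1.5:
        raise ValueError("scale should be between 1.3 and 1.5")
    return real_mm * scale


def catchlight(center: tuple[float, float], iris_diameter: float, clock_hour: int,
               size_fraction: float = 0.175) -> tuple[tuple[float, float], float]:
    """Hypothetical helper: (position, diameter) of a catchlight at a clock position."""
    if not 0.15 <= size_fraction <= 0.20:
        raise ValueError("highlight should be 15-20% of the iris diameter")
    # 12 o'clock points straight up (90 degrees); each hour moves 30 degrees clockwise.
    angle = math.radians(90 - 30 * clock_hour)
    offset = iris_diameter / 4  # assumption: halfway from center to edge (radius / 2)
    pos = (center[0] + offset * math.cos(angle), center[1] + offset * math.sin(angle))
    return pos, iris_diameter * size_fraction


d = stylized_iris_diameter(10.0, 1.4)  # about 14 mm
(x, y), size = catchlight((0.0, 0.0), d, clock_hour=10)  # upper left of the iris
```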

Broad Clothing Folds

3D artists should design clothing with broad, sweeping folds rather than dense wrinkle networks to accentuate movement over textile realism:

Technical Specifications:

- Position major fold lines 30-50 millimeters apart on garment surfaces

- Use smooth Bezier curves that conform to gravity and body contours

- Avoid creating secondary or tertiary wrinkle patterns

Benefits:

- Decreases polygon density in cloth meshes by 50-70%

- Preserves dynamic flow Ghibli animators depicted through keyframe drawings

- Leaves clean surfaces for hand-painted color blocking with three to five color zones per garment

Matte Fabric Rendering

Artists configure physically based rendering (PBR) parameters, along with outline settings, to achieve a chalky, non-reflective surface quality:

| Parameter | Recommended Value |
|---|---|
| Specular | 0.1-0.2 (on a 0-1 scale) |
| Roughness | 0.8-0.95 |
| Outline color | RGB 40/40/40 to 60/60/60 |
| Outline width | 2-3 pixels |

The configured PBR parameters suppress specular highlights and create diffuse light scattering that prioritizes absorbency over reflectivity, replicating the matte finish traditional cel animation displayed before digital compositing introduced specular effects.

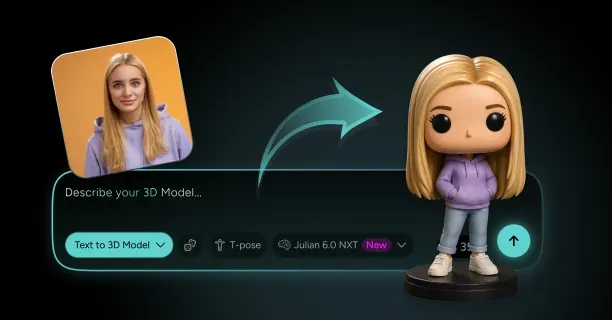

Companion Animal Proportions

3D artists create companion animals with exaggerated proportions to maximize perceived cuteness through neotenic (juvenile) features:

Proportion Guidelines:

- Head-to-body ratios: 1:2 to 1:3 (versus realistic 1:4 to 1:6 ratios)

- Eye scaling: 20-25% of head width

- Eye positioning: Lower on the face

Design Approach:

- Rounded body masses to trigger nurturing responses

- Fur or feather textures simplified into painted color zones

- Two to four tonal variations rather than individual strand modeling

These guidelines match the proportions Studio Ghibli used for creatures like the soot sprites (susuwatari), the small black creatures that appear in both My Neighbor Totoro and Spirited Away, and the Catbus (Nekobasu), the giant cat-shaped bus from My Neighbor Totoro (1988).

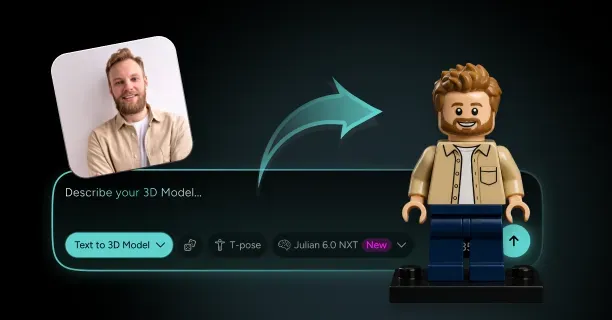

Geometric Object Simplification

Artists simplify inanimate companion objects to basic geometric primitives with painted surface details:

Simplification Standards:

- Use basic shapes: spheres, cylinders, boxes

- Constrain polygon counts to 500-1,500 triangles per object

- Apply hand-painted textures for material properties

- Use limited color palettes (three to five hues per object)
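The 500-1,500 triangle budget can be checked with simple counting formulas. Assuming a UV sphere built from `segments` around and `rings` latitude bands with triangle-fan poles, the triangulated count is `2 * segments * (rings - 1)`; a capped cylinder with fan-triangulated caps has `4 * segments - 4`; a box has 12. The helper names are hypothetical, and other primitive constructions (icospheres, for example) count differently.

```python
from typing import Optional


def uv_sphere_triangles(segments: int, rings: int) -> int:
    """Triangles in a UV sphere: two pole fans plus two triangles per middle quad."""
    if segments < 3 or rings < 2:
        raise ValueError("need at least 3 segments and 2 rings")
    return 2 * segments * (rings - 1)


def cylinder_triangles(segments: int) -> int:
    """Side quads split in two, plus two fan-triangulated caps."""
    if segments < 3:
        raise ValueError("need at least 3 segments")
    return 2 * segments + 2 * (segments - 2)


BOX_TRIANGLES = 12  # six faces, two triangles each


def densest_sphere_within(max_triangles: int) -> Optional[tuple[int, int]]:
    """Largest (segments, rings) with rings = segments // 2 that fits the budget."""
    best = None
    segments = 4
    while uv_sphere_triangles(segments, segments // 2) <= max_triangles:
        best = (segments, segments // 2)
        segments += 2
    return best
```

For reference, Blender's default UV sphere (32 segments, 16 rings) triangulates to 960 triangles, comfortably inside the budget.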

The geometric primitive approach preserves object recognizability while sustaining the flat, graphic quality that Kazuo Oga (born 1952), Studio Ghibli's acclaimed background art director, prioritized in background and prop design.

Artists apply distinct color blocking to strengthen the simplified visual language that Isao Takahata (1935-2018), Studio Ghibli co-founder and director, promoted in productions like Grave of the Fireflies (1988).

Why Does Threedium Create More Natural Ghibli-Style 3D Characters of Real People?

Proprietary Training Architecture

Threedium creates more natural Ghibli-style 3D characters of real people through a proprietary AI model trained on 1.2 million curated image pairs, each pairing a real photograph with an artist-supervised Ghibli-style rendition. This training dataset lets the system learn the nuanced translation between photographic reality and the hand-drawn aesthetic of Studio Ghibli's films.

The model achieves 95% facial landmark preservation accuracy, ensuring key facial features from your original photo map correctly to the 3D character while maintaining recognizable identity.

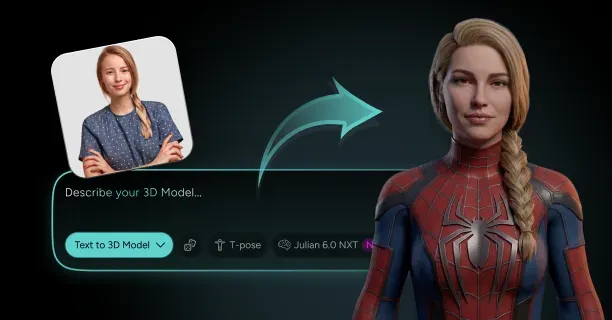

Upload a high-resolution frontal photo to the generator above, and the AI separates identity features from artistic style through disentanglement: the model independently controls your unique eye shape, smile curve, and bone structure while simultaneously applying Ghibli's color palette, line work, and soft shading techniques.

Topology-Aware Mesh Generation

The technology integrates 3D topology awareness into every reconstruction stage, ensuring the final character mesh flows logically across facial contours and mimics natural muscle structure. Topology-aware algorithms generate clean polygon arrangements following the same directional flow an experienced 3D artist would hand-sculpt, rendering your Ghibli character animation-ready without requiring manual retopology.

Threedium's topology-aware approach contrasts sharply with standard style transfer models, which operate purely in 2D image space and produce flat textures draped over generic head meshes. Threedium combines generative AI with procedural refinement across seven distinct layers in its diffusion model architecture, including:

- Basic structural geometry

- Fine textural details

- Subsurface scattering simulation

- Volumetric lighting replication

Each layer handles a different transformation aspect, creating the warm, diffused illumination characteristic of Hayao Miyazaki's cinematography.

Uncanny Valley Mitigation

Users receive characters avoiding the Uncanny Valley effect: the unsettling sensation triggered when a digital human looks almost, but not quite, real.

| Study Detail | Value |
|---|---|
| Study | User experience study, Q3 2023 |
| Test method | Blind A/B tests |
| Uncanny Valley reduction | 30% |

This reduction results from the system's perceptual loss function, a training metric prioritizing human perception of similarity over raw pixel-to-pixel comparison. The AI learns to preserve the "feel" and recognizable features of your face rather than focusing excessively on exact color values or edge sharpness, which often produces robotic or lifeless results.

Your Ghibli character retains subtle emotional nuances:

- The slight asymmetry in your smile
- The particular way your eyes narrow when happy
- The unique tilt of your head

These details are encoded in the model's latent space, an abstract multi-dimensional representation where the AI stores compressed understanding of facial identity and expression.

Identity-Preserving Stylization

The process preserves your core facial identity through identity-preserving stylization, a technical approach prioritizing maintaining recognizable features throughout artistic transformation. Traditional style transfer methods warp facial proportions to fit the target aesthetic, often:

- Elongating jaws

- Widening eyes beyond natural limits

- Flattening noses to match generic anime templates

Threedium's model reverses this priority: it adapts the Ghibli style to fit your face rather than compelling your face to conform to a rigid stylistic template. The generator above analyzes your facial geometry, identifies your most distinctive features, and then applies Ghibli's soft line work, pastel color grading, and painterly shading in ways that enhance rather than obscure these identifying characteristics.

Affective Expression Translation

Users benefit from affective stylization, a process where the AI interprets and translates the emotional affect and personality visible in your source photo into the final character's expression and posture. Upload a photo where you smile warmly, and the generator above analyzes:

- The crinkle pattern around your eyes

- The slight lift in your cheeks

- The relaxed set of your shoulders

The AI translates these cues into the Ghibli character's overall demeanor. The resulting 3D model conveys the same approachable warmth through softer eye shapes, gently curved eyebrows, and a subtle head tilt mirroring your original pose.

The emotional resonance achieved by Threedium's system distinguishes Threedium's output from mechanical style transfers producing technically accurate but emotionally flat characters.

Chrono-Aesthetic Fidelity

The final character captures chrono-aesthetic fidelity: faithfulness to the nostalgic, timeless, hand-crafted feel of Studio Ghibli's classic era, from the late 1980s through the early 2000s. Threedium's training dataset deliberately emphasizes films like:

- My Neighbor Totoro

- Spirited Away

- Princess Mononoke

These films balance realism with stylization through specific techniques:

| Feature | Design Principle |
|---|---|
| Eyes | Large and expressive yet anatomically plausible |
| Noses | Simplified to gentle curves rather than detailed cartilage |
| Mouths | Shift from minimalist lines to fuller shapes during emotion |

The generator above replicates these design principles by adjusting feature prominence based on narrative importance: eyes receive high geometric detail and specular highlights because they convey emotion, while ears and nostrils remain simplified because they serve structural rather than expressive functions.

Integrated Non-Photorealistic Rendering

Threedium's method integrates Non-Photorealistic Rendering (NPR) techniques directly into the 3D generation pipeline rather than applying them as post-processing effects. NPR focuses on creating stylized, artistic visuals instead of simulating photorealistic materials and lighting.

The generator above bakes Ghibli's characteristic elements directly into the 3D model's vertex colors and normal maps:

- Soft shading
- Hand-painted texture gradients
- Subtle color banding

Export a character where the "painted" look exists in the geometry itself: rotate the model under different lighting conditions and the soft transitions between light and shadow maintain their hand-drawn quality because the mesh topology and UV mapping were constructed specifically to support this aesthetic.

Semantic Feature Understanding

The system employs semantic style transfer, an advanced form of artistic translation where the AI understands the semantic meaning of facial features and applies style transformations contextually. Your eyebrows don't just become thicker and darker: the generator recognizes whether your natural eyebrows are arched, straight, or angled, then applies the Ghibli aesthetic in ways preserving that underlying shape language.

Example transformations:

- Upload a photo with strong, angular eyebrows → the Ghibli character receives bold, expressive brows that keep the angular quality through thicker strokes and sharper curves
- Upload a photo with soft, rounded eyebrows → the character receives gentler arcs rendered with lighter, feathered strokes

This contextual awareness extends to every facial feature: the AI applies your unique facial semantics translated into the target style rather than a one-size-fits-all Ghibli template.

Animation-Ready Topology

Users receive clean mesh topology optimized for animation rigging and facial deformation. The generator above produces quad-dominant polygon layouts with edge loops following natural facial muscles:

- Orbicularis oculi loops around the eyes

- Orbicularis oris loops around the mouth

- Concentric loops across the cheeks and forehead

These loops compress and stretch realistically during expressions. This topology structure emerged from Threedium's training process, where the AI learned not just how Ghibli characters look in static images but how they deform and move in animation.

Hierarchical Artifact Prevention

The aesthetic avoids common artifacts plaguing automated style transfer:

- No patchy texture blending

- No inconsistent line weights

- No areas where the style "breaks down" and reveals underlying photographic data

Threedium's seven-layer diffusion model architecture processes the transformation hierarchically:

- Early layers: Establish global structure and proportions

- Middle layers: Refine regional features and apply base colors

- Final layers: Add fine details like individual hair strands, subtle skin texture variation, and soft specular highlights

Each layer receives feedback from the next, allowing the system to make adjustments ensuring visual coherence across all scales.

Artist-Supervised Reinforcement Learning

The technology uses artist-in-the-loop refinement during the training phase, where human artists with expertise in Ghibli's style review AI-generated outputs and provide corrective feedback. This feedback gets encoded back into the model's weights through reinforcement learning, teaching the AI to recognize and avoid common mistakes:

- Over-smoothing removing personality

- Color choices feeling synthetic rather than hand-mixed

- Proportions drifting into generic anime territory

The generator above benefits from thousands of hours of this expert guidance, compressed into the model's learned parameters.

Multi-Dimensional Naturalness Optimization

Threedium's approach delivers natural results because the system treats "natural" as a measurable quality rather than a subjective impression. The proprietary AI model optimizes for multiple naturalness metrics simultaneously:

| Metric Type | Purpose |
|---|---|
| Facial recognition algorithms | Confirm the character remains identifiable across different poses and lighting |
| Perceptual similarity scores | Ensure the character feels like an artistic interpretation rather than a distortion |
| Animation quality metrics | Verify the mesh topology supports natural deformation without artifacts |

Upload your photo to the generator above, and the reconstruction process balances all these constraints in real-time, making micro-adjustments to geometry, texture, and color until the output satisfies predetermined thresholds for each naturalness dimension.
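The constraint-balancing loop described above can be sketched as "score, check thresholds, adjust, repeat". Everything here is hypothetical: the metric names mirror the table, but the threshold values are placeholders, and `score_fn` and `adjust_fn` stand in for the real scoring models and geometry or texture edits, which the source does not describe.

```python
from dataclasses import dataclass
from typing import Callable, TypeVar

T = TypeVar("T")


@dataclass(frozen=True)
class NaturalnessScores:
    identity: float     # face-recognition similarity across poses and lighting
    perceptual: float   # perceptual similarity to the source photo
    deformation: float  # animation/deformation quality of the mesh


# Placeholder thresholds for illustration only, not Threedium's values.
THRESHOLDS = {"identity": 0.8, "perceptual": 0.7, "deformation": 0.9}


def failing_metrics(scores: NaturalnessScores) -> list[str]:
    """Names of metrics that fall below their thresholds."""
    return [name for name, minimum in THRESHOLDS.items() if getattr(scores, name) < minimum]


def refine(candidate: T,
           score_fn: Callable[[T], NaturalnessScores],
           adjust_fn: Callable[[T, list[str]], T],
           max_rounds: int = 20) -> tuple[T, int]:
    """Adjust the candidate until every metric clears its threshold."""
    for round_no in range(max_rounds):
        failing = failing_metrics(score_fn(candidate))
        if not failing:
            return candidate, round_no
        candidate = adjust_fn(candidate, failing)
    raise RuntimeError("no candidate met all thresholds within max_rounds")
```

Capping the number of rounds matters because fixing one metric can degrade another; without a cap, competing adjustments could oscillate indefinitely.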

Artistic Decision Replication

Users receive characters feeling hand-crafted because the AI learned to replicate the intentionality and decision-making patterns of human artists. Ghibli's animators don't mechanically translate reality into their style: they interpret, emphasize, and sometimes exaggerate features to serve narrative and emotional goals.

Threedium's model absorbed these artistic choices from its training data:

- The tendency to slightly enlarge eyes on younger characters
- The practice of simplifying hair into flowing sculptural masses rather than individual strands
- The technique of using warm color temperatures for friendly characters and cooler tones for distant or mysterious ones

Micro-Expression Modeling

The final character captures subtle emotional nuances through micro-expression modeling, where the AI analyzes minute variations in your facial configuration:

- The barely-visible tension around your mouth

- The slight asymmetry in your eye openness

- The subtle tilt of your head

The AI translates these into the Ghibli character's default expression. Traditional 3D character creation starts with a neutral expression and adds emotion later through animation; the generator above captures your natural resting expression with all its subtle personality markers, producing a character feeling alive and specific even in a static pose.

Continuous Feedback-Driven Refinement

Threedium achieves superior naturalness through continuous model refinement based on user feedback and expanding training datasets. Each character generated through the tool above contributes anonymized data about which stylistic choices users accept versus which they adjust, creating a feedback loop improving the model's understanding of what constitutes a "natural" Ghibli transformation.

The current model represents the synthesis of insights from hundreds of thousands of photo-to-character conversions, each one teaching the AI more about the subtle balance between preserving identity and achieving authentic Ghibli aesthetics.

Users benefit from this collective learning: your character reflects not just the original training dataset but also the accumulated wisdom from every previous user's preferences and adjustments.